"My agent understands me better": Integrating Dynamic Human-like Memory Recall and Consolidation in LLM-Based Agents

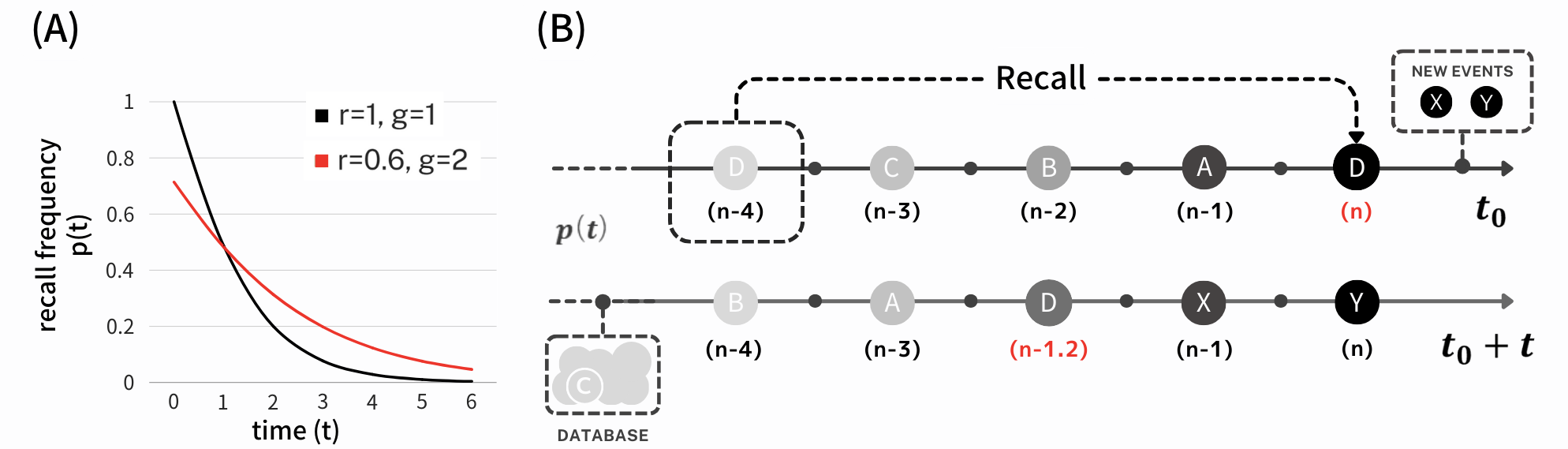

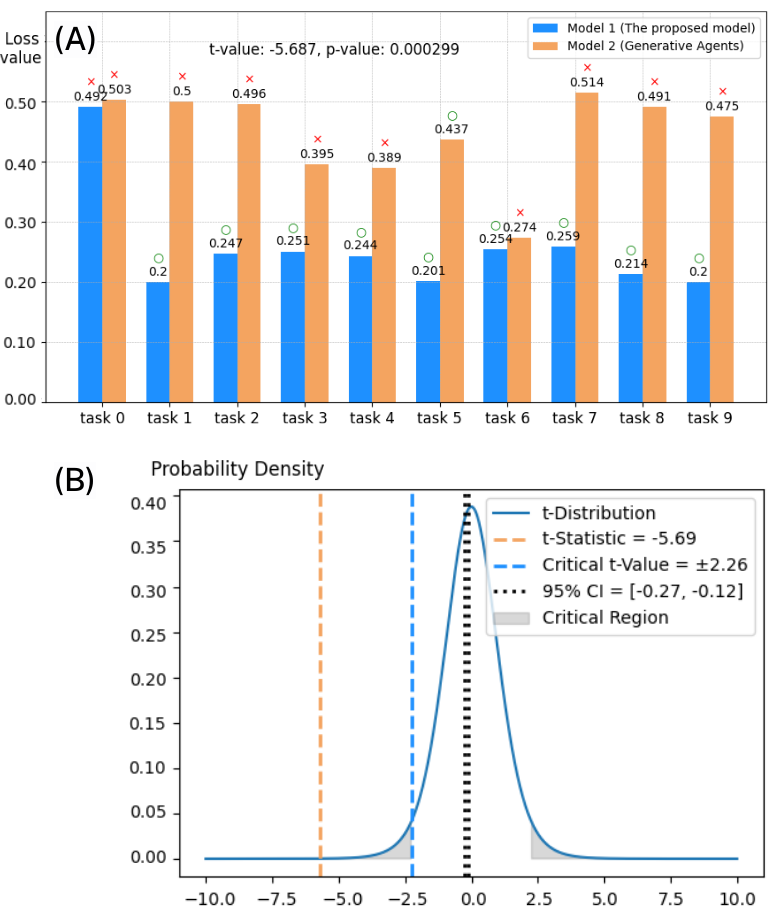

Abstract: In this study, we propose a novel human-like memory architecture designed to enhance the cognitive abilities of LLM-based dialogue agents. The architecture enables agents to autonomously recall the memories needed for response generation, addressing a limitation in the temporal cognition of LLMs. We adopt human cue-based memory recall as the trigger for accurate and efficient recall. We also develop a mathematical model that dynamically quantifies memory consolidation from contextual relevance, elapsed time, and recall frequency. The agent stores memories retrieved from the user's interaction history in a database that captures each memory's content and temporal context. This storage scheme allows agents to recall specific memories and understand their significance to the user in a temporal context, much as humans recognize and recall past experiences.
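
The abstract names three inputs to the consolidation model (contextual relevance, elapsed time, and recall frequency) but does not give the formula. The following is a minimal sketch of how such a score could be computed, not the authors' model: the exponential half-life decay, the rule that each recall lengthens the half-life, and the names `consolidation_score`, `should_recall`, `base_half_life`, and `threshold` are all assumptions made for illustration.

```python
def consolidation_score(relevance, elapsed_seconds, recall_count,
                        base_half_life=86400.0):
    """Illustrative consolidation score in [0, 1] (hypothetical formula).

    relevance       -- similarity between the current cue and the memory, in [0, 1]
    elapsed_seconds -- time since the memory was stored or last recalled
    recall_count    -- number of times the memory has been recalled so far
    base_half_life  -- assumed half-life in seconds for a never-recalled memory
    """
    if not 0.0 <= relevance <= 1.0:
        raise ValueError("relevance must be in [0, 1]")
    if elapsed_seconds < 0 or recall_count < 0:
        raise ValueError("elapsed_seconds and recall_count must be non-negative")

    # Assumption: every recall stretches the half-life, so frequently
    # recalled memories fade more slowly.
    half_life = base_half_life * (1 + recall_count)

    # Exponential forgetting: the score halves once per half-life.
    decay = 0.5 ** (elapsed_seconds / half_life)

    return relevance * decay


def should_recall(score, threshold=0.5):
    """Trigger recall when the score reaches an assumed threshold."""
    return score >= threshold


if __name__ == "__main__":
    one_day = 86400.0
    fresh = consolidation_score(0.9, 0.0, 0)
    stale = consolidation_score(0.9, 3 * one_day, 0)
    rehearsed = consolidation_score(0.9, 3 * one_day, 5)
    print(fresh, stale, rehearsed)
    print(should_recall(fresh), should_recall(stale), should_recall(rehearsed))
```

Under these assumptions, a highly relevant memory that has not been recalled for three days falls below the threshold, while the same memory recalled five times stays above it. This is the qualitative behavior the abstract describes.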
