LLM vs Small Model? Large Language Model Based Text Augmentation Enhanced Personality Detection Model

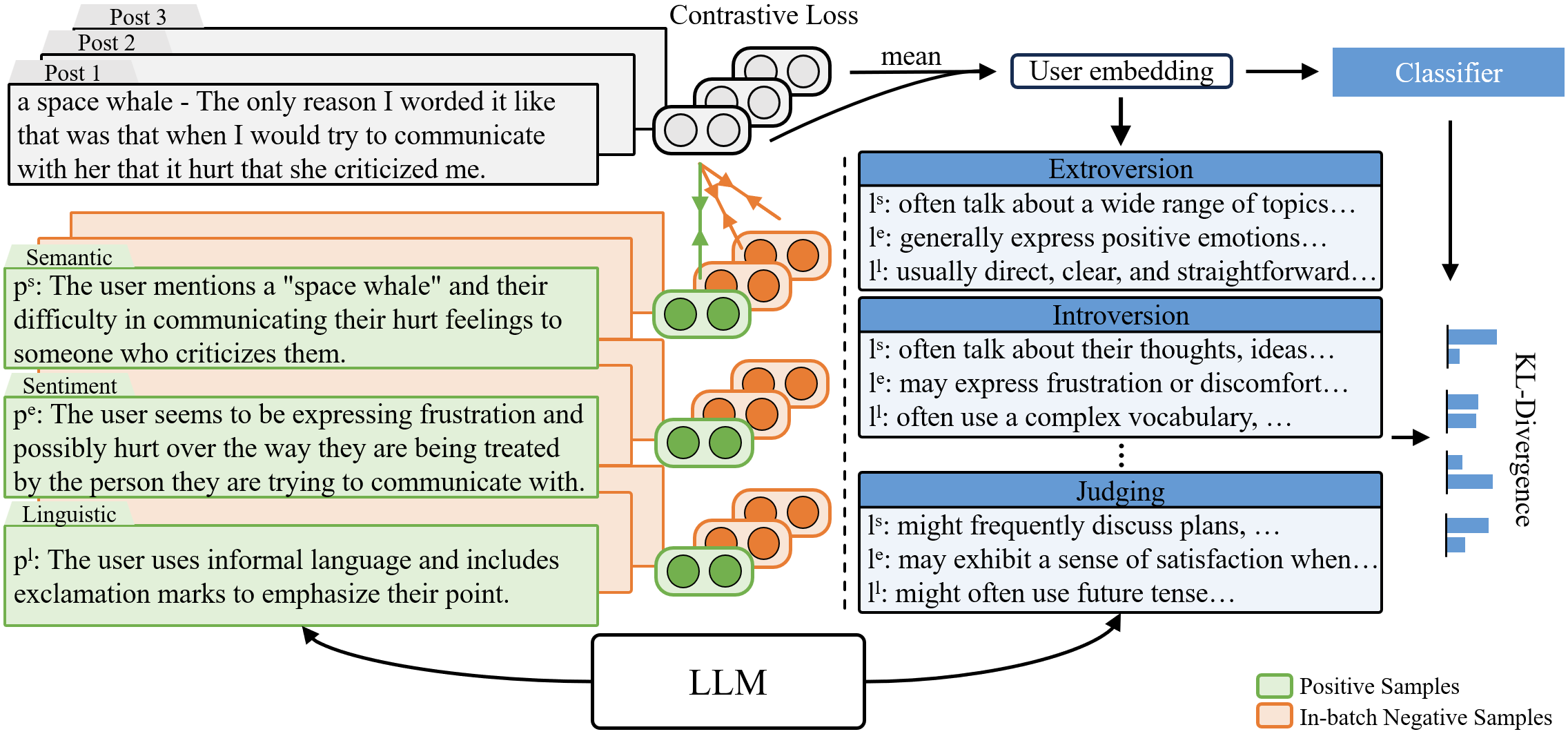

Abstract: Personality detection aims to identify the personality traits underlying a user's social media posts. One challenge of this task is the scarcity of ground-truth personality labels, which are collected from self-report questionnaires. Most existing methods learn post features directly by fine-tuning pre-trained LLMs under the supervision of limited personality labels. This leads to post features of inferior quality and consequently hurts performance. In addition, they treat personality traits as one-hot classification labels, overlooking the semantic information within them. In this paper, we propose an LLM-based text augmentation enhanced personality detection model, which distills the LLM's knowledge to enhance a small model for personality detection, even when the LLM itself fails at this task. Specifically, we prompt the LLM to generate post analyses (augmentations) from the semantic, sentiment, and linguistic aspects, which are critical for personality detection. By using contrastive learning to pull each post and its augmentations together in the embedding space, the post encoder can better capture the psycho-linguistic information within the post representations, thus improving personality detection. Furthermore, we utilize the LLM to enrich the information of personality labels to enhance detection performance. Experimental results on the benchmark datasets demonstrate that our model outperforms the state-of-the-art methods on personality detection.
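To make the contrastive step more concrete, the following is a minimal sketch of an InfoNCE-style objective that pulls a post embedding toward the embedding of its LLM-generated analysis and pushes it away from the analyses of other posts in the batch. The abstract does not specify the exact loss, so the InfoNCE form, the function name `info_nce_loss`, the `temperature` value, and the toy embeddings are all assumptions made for illustration, not the paper's implementation.

```python
import numpy as np

def info_nce_loss(post_emb, aug_emb, temperature=0.1):
    """InfoNCE-style loss (assumed form, not taken from the paper).

    Row i of aug_emb is treated as the positive for row i of post_emb;
    every other row in the batch serves as a negative.
    """
    # L2-normalise so that dot products are cosine similarities.
    p = post_emb / np.linalg.norm(post_emb, axis=1, keepdims=True)
    a = aug_emb / np.linalg.norm(aug_emb, axis=1, keepdims=True)
    logits = p @ a.T / temperature                        # shape: (batch, batch)
    logits = logits - logits.max(axis=1, keepdims=True)   # numerical stability
    log_probs = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    # The positives sit on the diagonal of the similarity matrix.
    return float(-np.mean(np.diag(log_probs)))

# Toy embeddings (hypothetical): four mutually orthogonal "posts".
posts = np.eye(4)
aligned_augs = posts.copy()                 # each analysis matches its post
mismatched_augs = np.roll(posts, 1, axis=0) # each analysis matches another post

loss_aligned = info_nce_loss(posts, aligned_augs)
loss_mismatched = info_nce_loss(posts, mismatched_augs)
```

In this toy setting the loss is close to zero when each post is paired with its own analysis and large when the pairs are shuffled, which is the signal a post encoder would be trained to minimise. With three analyses per post (semantic, sentiment, linguistic), one option would be to average the loss over the three aspects, though that choice is likewise an assumption.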


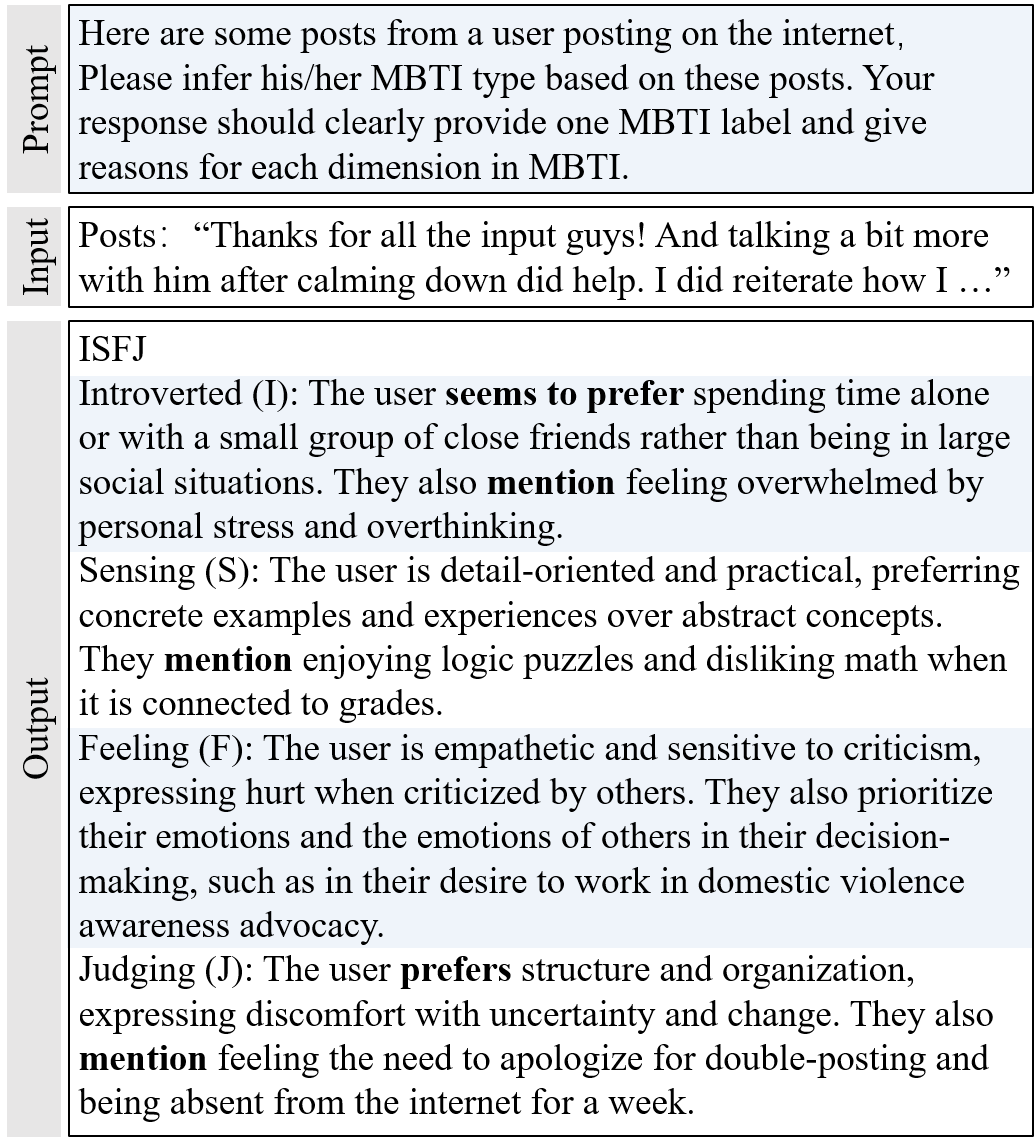
