UniDoorManip: Learning Universal Door Manipulation Policy Over Large-scale and Diverse Door Manipulation Environments

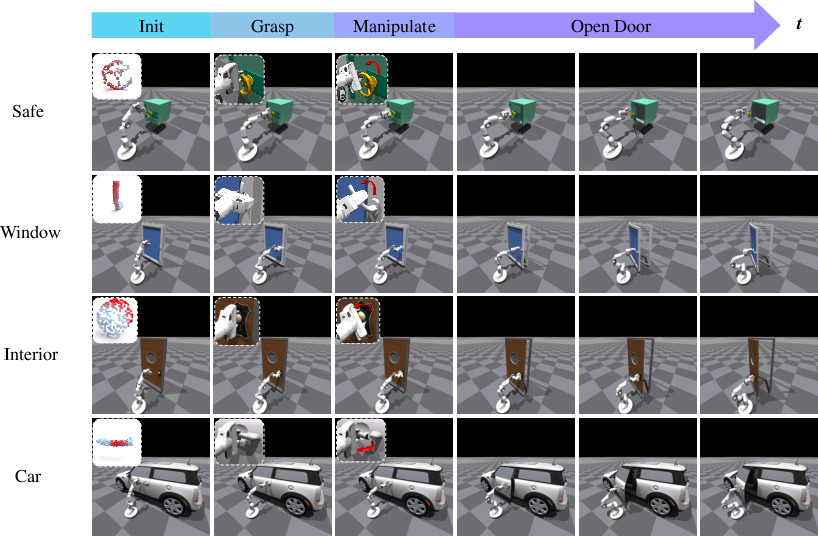

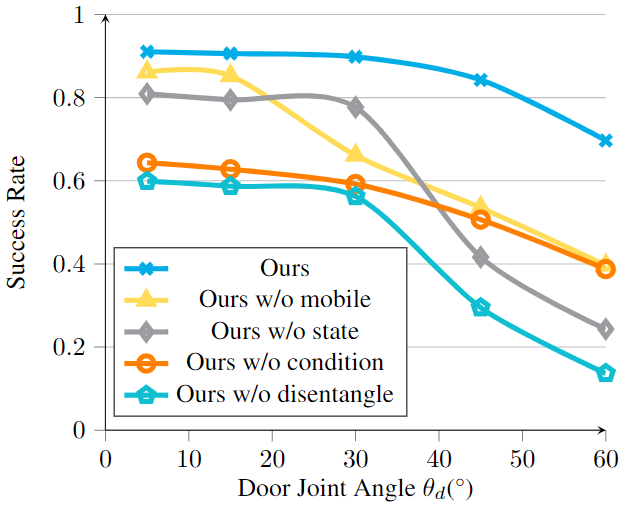

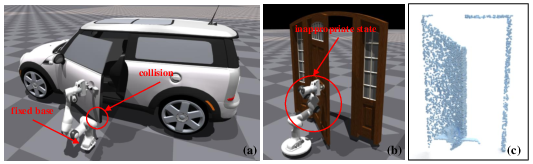

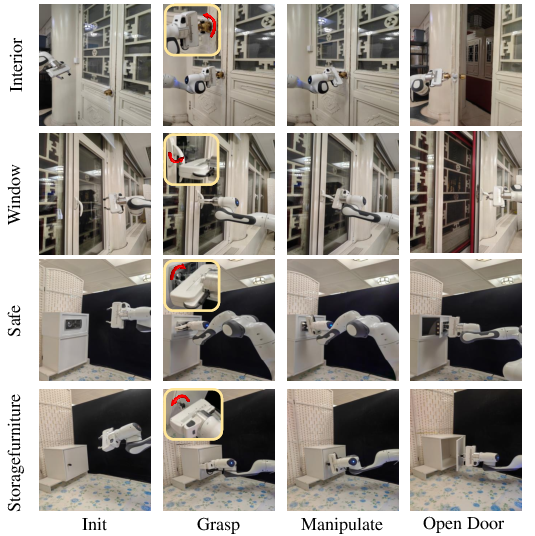

Abstract: Learning a universal manipulation policy that encompasses doors with diverse categories, geometries, and mechanisms is crucial for future embodied agents to work effectively in complex and broad real-world scenarios. Due to limited datasets and unrealistic simulation environments, previous works fail to achieve good performance across various doors. In this work, we build a novel door manipulation environment that reflects different realistic door manipulation mechanisms, and we further equip this environment with a large-scale door dataset covering 6 door categories with hundreds of door bodies and handles, which combine into thousands of different door instances. Additionally, to better emulate real-world scenarios, we introduce a mobile robot as the agent and use partial, occluded point clouds as observations. Previous works did not consider these settings, although they matter for real-world deployment. To learn a universal policy over diverse doors, we propose a novel framework that disentangles the whole manipulation process into three stages and integrates them by training the stages in the reverse order of inference. Extensive experiments validate the effectiveness of our designs and demonstrate our framework's strong performance. Code, data, and videos are available at https://unidoormanip.github.io/.
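To make the idea of "training in the reverse order of inference" concrete, here is a toy sketch under loudly stated assumptions. The three stages below are hypothetical stand-ins acting on an integer state, not the paper's actual stages, networks, observations, or training signals. Stages are trained from last to first. For every state an earlier stage could encounter at inference time, it picks the action from which the already-trained later stages end closest to a hypothetical goal. All names (`ACTIONS`, `GOAL`, `step`, `rollout`, `train_reverse_order`) are illustrative assumptions.

```python
from typing import Dict, List

# Hypothetical toy setup (NOT from the paper): an integer state is advanced
# by NUM_STAGES sequential stages, each choosing an action from ACTIONS.
ACTIONS = [1, 2, 3]
GOAL = 6
NUM_STAGES = 3

Policy = Dict[int, int]  # maps a state to the action a stage takes there


def step(state: int, action: int) -> int:
    """Toy transition: an action advances the state by its value."""
    return state + action


def reachable_states(stage: int, start: int = 0) -> List[int]:
    """All states a stage can see at inference time, over any earlier actions."""
    states = {start}
    for _ in range(stage):
        states = {step(s, a) for s in states for a in ACTIONS}
    return sorted(states)


def rollout(policies: List[Policy], first: int, state: int) -> int:
    """Run stages first..NUM_STAGES-1 in inference order and return the final state."""
    for policy in policies[first:]:
        state = step(state, policy[state])
    return state


def downstream_error(policies: List[Policy], stage: int, state: int, action: int) -> int:
    """Distance to GOAL after `action`, followed by the already-trained later stages."""
    final = rollout(policies, stage + 1, step(state, action))
    return abs(final - GOAL)


def train_reverse_order(start: int = 0) -> List[Policy]:
    policies: List[Policy] = [{} for _ in range(NUM_STAGES)]
    # Train the LAST stage first, then walk backwards toward the first stage,
    # so each stage is scored by the later stages that are already fixed.
    for stage in reversed(range(NUM_STAGES)):
        for state in reachable_states(stage, start):
            # Exhaustive search stands in for learning; min() keeps the first
            # action among ties.
            policies[stage][state] = min(
                ACTIONS, key=lambda a: downstream_error(policies, stage, state, a)
            )
    return policies


if __name__ == "__main__":
    trained = train_reverse_order()
    print(rollout(trained, 0, 0))
```

Because the final stage is fixed before the earlier ones are trained, earlier stages learn to avoid handing off states the later stages cannot finish from. In this toy, the final stage (index 2) cannot reach `GOAL` from state 2, so the trained middle stage (index 1) never produces state 2. A real system would replace the exhaustive search with learned policies.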

