A Multimodal Handover Failure Detection Dataset and Baselines

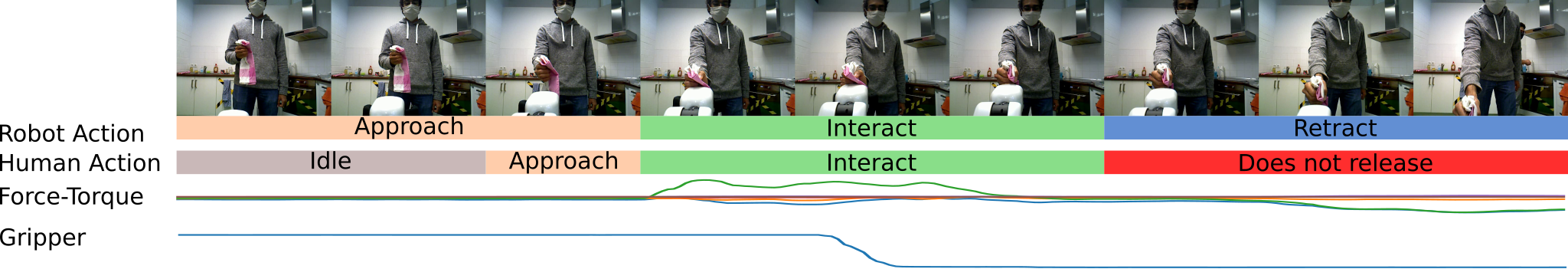

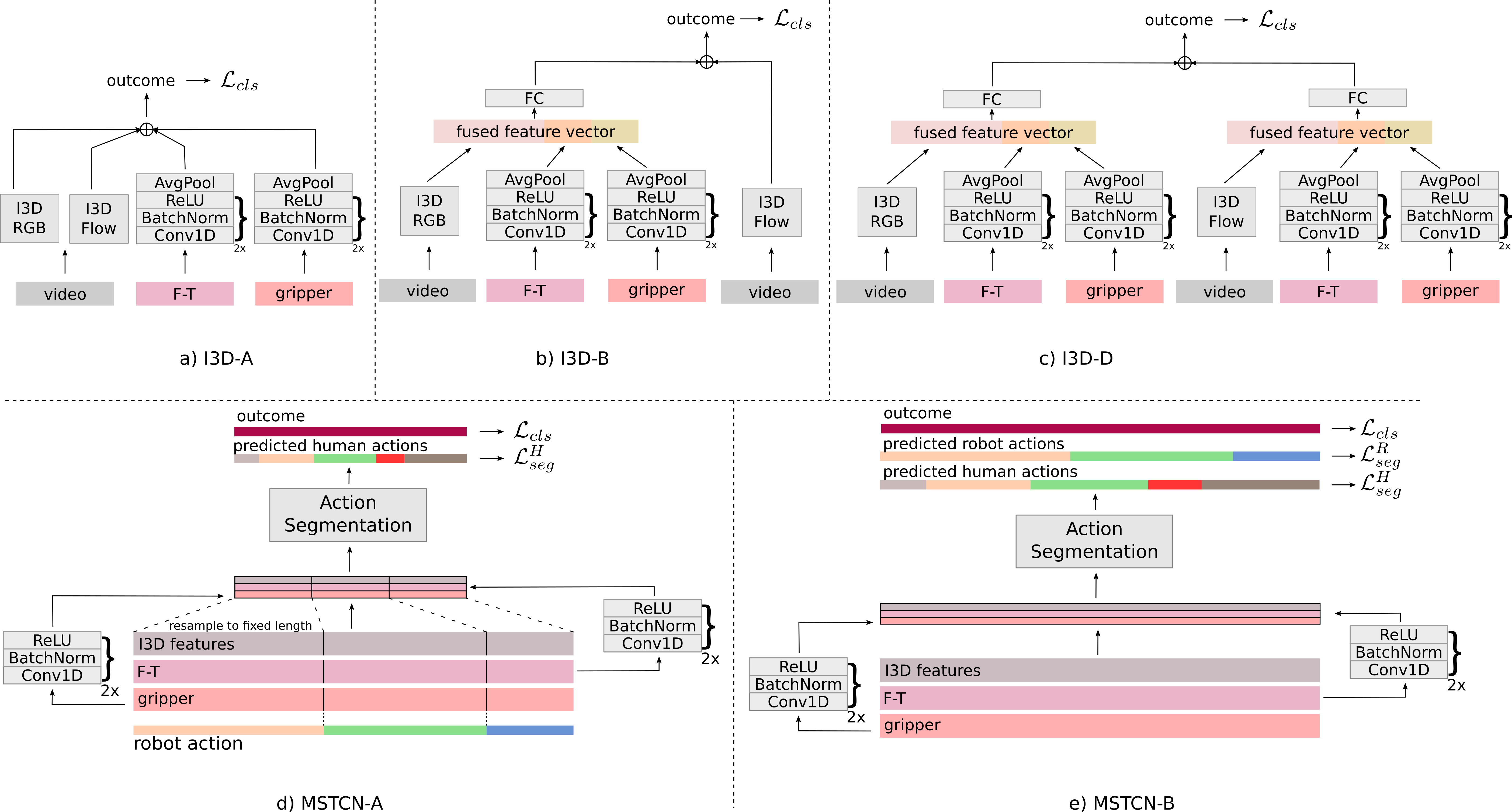

Abstract: An object handover between a robot and a human is a coordinated action that is prone to failure for reasons such as miscommunication, incorrect actions and unexpected object properties. Existing work on handover failure detection and prevention focuses on preventing failures caused by object slip or external disturbances. However, there is a lack of datasets and evaluation methods that consider unpreventable failures caused by the human participant. To address this gap, we present the multimodal Handover Failure Detection dataset, which consists of failures induced by the human participant, such as ignoring the robot or not releasing the object. We also present two baseline methods for handover failure detection: (i) a video classification method using 3D CNNs and (ii) a temporal action segmentation approach that jointly classifies the human action, the robot action and the overall outcome of the action. The results show that video is an important modality, but adding force-torque data and gripper position helps improve failure detection and action segmentation accuracy.
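The abstract says the segmentation baseline jointly predicts three per-frame label streams (human action, robot action, outcome) and reports segmentation accuracy, but it does not define the metrics. As a hedged illustration only, the sketch below scores each stream separately with two metrics that are common in the temporal action segmentation literature: frame-wise accuracy and the segmental edit score (a normalized Levenshtein distance between the ordered segment labels). The function names (`to_segments`, `frame_accuracy`, `edit_score`, `evaluate_heads`) and all label names (`idle`, `reach`, `failure`, ...) are hypothetical and are not the dataset's actual annotation scheme or evaluation code.

```python
from typing import Dict, List, Sequence


def to_segments(frame_labels: Sequence[str]) -> List[str]:
    """Collapse runs of identical per-frame labels into an ordered list of segment labels."""
    segments: List[str] = []
    for label in frame_labels:
        if not segments or segments[-1] != label:
            segments.append(label)
    return segments


def frame_accuracy(pred: Sequence[str], gt: Sequence[str]) -> float:
    """Fraction of frames whose predicted label equals the ground-truth label."""
    if len(pred) != len(gt) or not gt:
        raise ValueError("pred and gt must be non-empty and have the same length")
    return sum(p == g for p, g in zip(pred, gt)) / len(gt)


def edit_score(pred: Sequence[str], gt: Sequence[str]) -> float:
    """Segmental edit score in [0, 100]; penalises over-segmentation and wrong segment order."""
    p, g = to_segments(pred), to_segments(gt)
    # dist[i][j] = Levenshtein distance between p[:i] and g[:j]
    dist = [[0] * (len(g) + 1) for _ in range(len(p) + 1)]
    for i in range(len(p) + 1):
        dist[i][0] = i
    for j in range(len(g) + 1):
        dist[0][j] = j
    for i in range(1, len(p) + 1):
        for j in range(1, len(g) + 1):
            cost = 0 if p[i - 1] == g[j - 1] else 1
            dist[i][j] = min(dist[i - 1][j] + 1,         # delete a predicted segment
                             dist[i][j - 1] + 1,         # insert a missing segment
                             dist[i - 1][j - 1] + cost)  # match or substitute
    return (1.0 - dist[len(p)][len(g)] / max(len(p), len(g), 1)) * 100.0


def evaluate_heads(pred: Dict[str, Sequence[str]],
                   gt: Dict[str, Sequence[str]]) -> Dict[str, Dict[str, float]]:
    """Score each output head (e.g. human action, robot action, outcome) independently."""
    return {head: {"accuracy": frame_accuracy(pred[head], gt[head]),
                   "edit": edit_score(pred[head], gt[head])}
            for head in gt}


# Hypothetical per-frame labels for one short clip (not the dataset's real label set).
gt = {
    "human": ["idle", "idle", "reach", "reach", "grasp", "grasp"],
    "robot": ["extend", "extend", "hold", "hold", "release", "release"],
    "outcome": ["ongoing", "ongoing", "ongoing", "ongoing", "failure", "failure"],
}
pred = {
    "human": ["idle", "reach", "reach", "reach", "grasp", "grasp"],
    "robot": ["extend", "extend", "hold", "hold", "release", "release"],
    "outcome": ["ongoing", "ongoing", "failure", "ongoing", "failure", "failure"],
}

for head, scores in evaluate_heads(pred, gt).items():
    print(f"{head:8s} acc={scores['accuracy']:.3f} edit={scores['edit']:.1f}")
```

Scoring each head separately shows why both metrics are useful: in the toy outcome stream, one spurious `failure` frame costs only one frame of accuracy but halves the edit score, because it splits the sequence into extra segments.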
