LangGPT: Rethinking Structured Reusable Prompt Design Framework for LLMs from the Programming Language

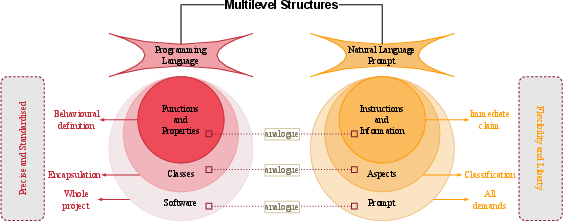

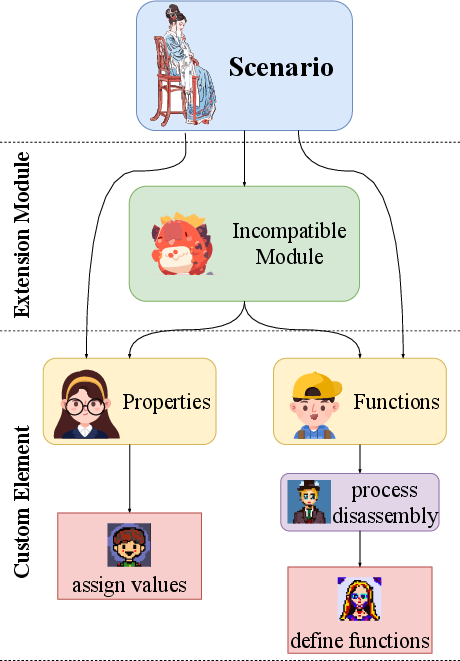

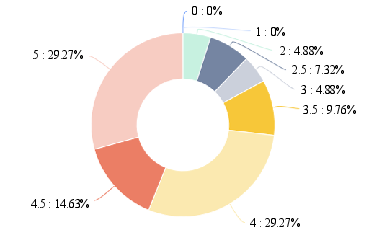

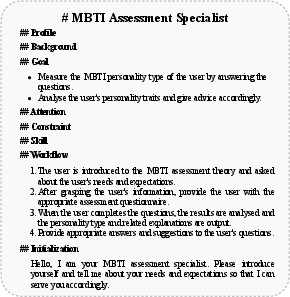

Abstract: LLMs have demonstrated commendable performance across diverse domains. Nevertheless, formulating high-quality prompts that instruct LLMs effectively remains a challenge for non-AI experts. Existing research in prompt engineering offers somewhat scattered optimization principles and designs empirically driven prompt optimizers. Unfortunately, these efforts lack a structured design template, which incurs high learning costs, yields low reusability, and hinders the iterative updating of prompts. Inspired by structured, reusable programming languages, we propose LangGPT, a dual-layer prompt design framework that serves as a programming language for LLMs. LangGPT has an easy-to-learn normative structure and provides an extended structure for migration and reuse. Experiments show that LangGPT significantly enhances the performance of LLMs, and a case study shows that LangGPT leads LLMs to generate higher-quality responses. Finally, we analyze the ease of use and reusability of LangGPT through a user survey in our online community.
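The abstract describes LangGPT only at a high level. As a rough illustration of what a "structured, reusable" prompt can look like in code, the sketch below assembles a Markdown prompt from named modules, each holding a list of elements. Treating modules and elements as the two layers is an assumption made for illustration, as are the module names (Profile, Rules, Workflow), the hypothetical `Module` and `StructuredPrompt` classes, and the `{placeholder}` substitution. None of this is the authors' schema or a published LangGPT API.

```python
from dataclasses import dataclass, field


@dataclass
class Module:
    """One top-level section of a structured prompt (hypothetical schema)."""
    name: str
    elements: list[str] = field(default_factory=list)

    def render(self) -> str:
        # Each module becomes a Markdown heading followed by bullet-point elements.
        lines = [f"## {self.name}"]
        lines += [f"- {element}" for element in self.elements]
        return "\n".join(lines)


@dataclass
class StructuredPrompt:
    """A prompt assembled from named modules (illustrative, not the paper's API)."""
    role: str
    modules: list[Module] = field(default_factory=list)

    def extend(self, module: Module) -> "StructuredPrompt":
        # Reuse: return a new prompt with one extra module; the original is unchanged.
        return StructuredPrompt(self.role, self.modules + [module])

    def render(self, **variables: str) -> str:
        # Fill {placeholders} so the same template can be reused across tasks.
        body = "\n\n".join(m.render() for m in self.modules)
        return f"# Role: {self.role}\n\n{body}".format(**variables)


# Usage: a base template specialized with an extra module, then filled in.
base = StructuredPrompt(
    role="{domain} tutor",
    modules=[
        Module("Profile", ["You explain {domain} concepts to beginners."]),
        Module("Rules", ["Answer in at most five sentences.", "Ask a follow-up question."]),
    ],
)
extended = base.extend(Module("Workflow", ["Restate the question.", "Give an example."]))
print(extended.render(domain="statistics"))
```

Keeping `extend` non-mutating means a shared base template can be specialized per task without side effects, which is one plausible reading of the migration and reuse the abstract emphasizes.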

