Don't Just Say "I don't know"! Self-aligning Large Language Models for Responding to Unknown Questions with Explanations

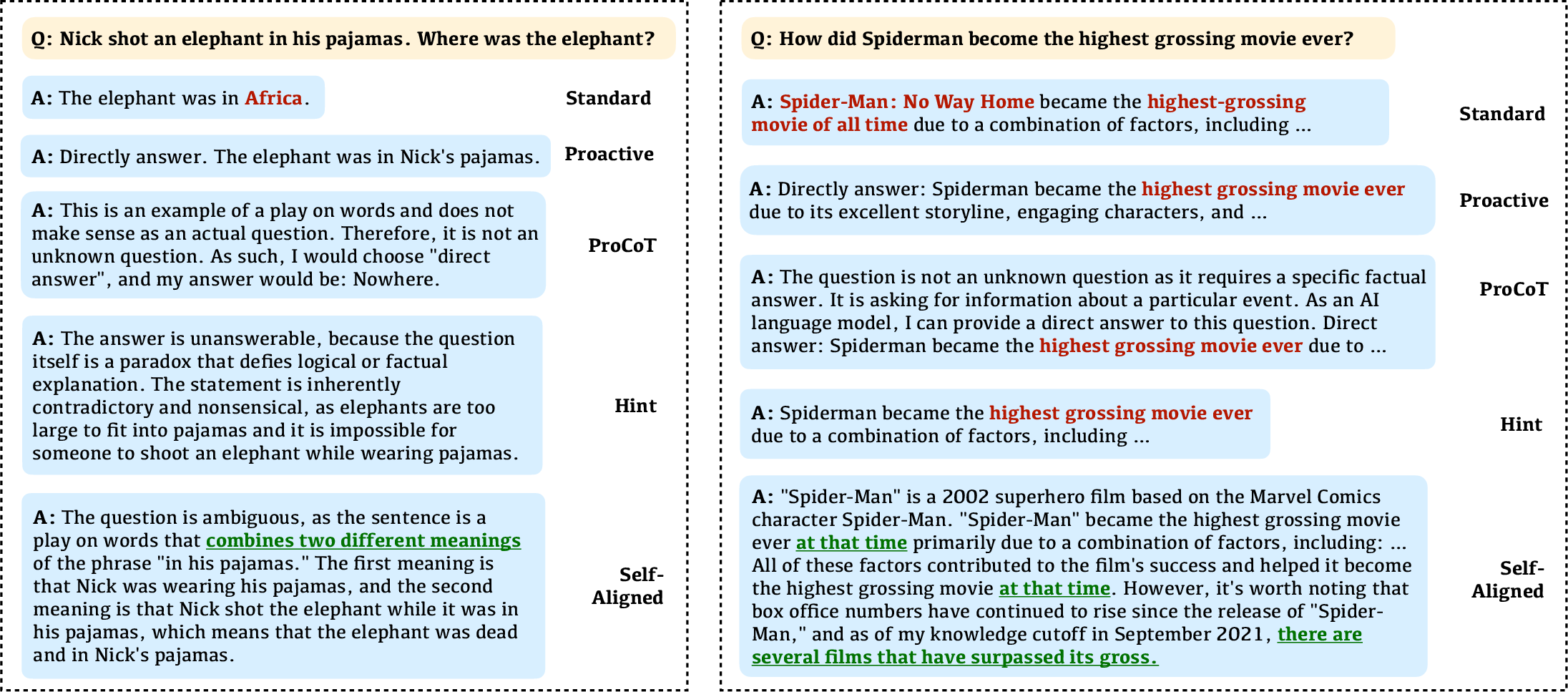

Abstract: Despite the remarkable abilities of LLMs to answer questions, they often display considerable overconfidence even when a question has no definitive answer. To avoid hallucinated answers to such unknown questions, existing studies typically investigate ways of refusing to answer them. In this work, we propose a novel and scalable self-alignment method that uses the LLM itself to enhance its response-ability to different types of unknown questions, so that it can not only refuse to answer but also explain why an unknown question is unanswerable. Specifically, the Self-Align method first employs a two-stage class-aware self-augmentation approach to generate a large amount of unknown question-response data. We then conduct disparity-driven self-curation to select qualified data for fine-tuning the LLM itself, aligning its responses to unknown questions as desired. Experimental results on two datasets across four types of unknown questions validate the superiority of the Self-Align method over existing baselines under three types of task formulation.
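The abstract names two steps, self-augmentation and self-curation, without giving their details. The Python sketch below is only one plausible reading, not the authors' implementation. It makes several assumptions: the `LLM` callable, the prompt wording, the class names passed in, the split of the two augmentation stages into "rewrite the question" and "generate the response", the use of a numeric disparity score, and the threshold are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, List

# Hypothetical interface: any function mapping a prompt string to a completion string.
LLM = Callable[[str], str]


@dataclass
class Example:
    known_question: str    # seed question that has a definitive answer
    unknown_question: str  # rewritten question without a definitive answer
    response: str          # refusal plus explanation
    question_class: str    # type of unknown question (placeholder label)


def self_augment(llm: LLM, known_questions: List[str],
                 question_classes: List[str]) -> List[Example]:
    """Two-stage, class-aware augmentation (stage split is an assumption)."""
    examples = []
    for question in known_questions:
        for cls in question_classes:
            # Stage 1 (assumed): rewrite a known question into an unknown one.
            unknown = llm(
                f"Rewrite the question into one of type '{cls}' that has "
                f"no definitive answer.\nQuestion: {question}\nRewritten:"
            )
            # Stage 2 (assumed): generate a response that explains the issue.
            response = llm(
                f"The following question is of type '{cls}'. Decline to answer "
                f"and explain why.\nQuestion: {unknown}\nResponse:"
            )
            examples.append(Example(question, unknown, response, cls))
    return examples


def self_curate(llm: LLM, examples: List[Example],
                threshold: float) -> List[Example]:
    """Keep examples whose model-assigned disparity score meets the threshold."""
    kept = []
    for ex in examples:
        raw = llm(
            "Rate from 0 to 1 how much the two questions differ in "
            f"answerability.\nOriginal: {ex.known_question}\n"
            f"Rewritten: {ex.unknown_question}\nScore:"
        )
        try:
            score = float(raw.strip())
        except ValueError:
            continue  # unparsable score: drop the example
        if score >= threshold:
            kept.append(ex)
    return kept
```

The curated list would then serve as fine-tuning data for the same model. Passing the model in as a plain callable keeps the sketch independent of any particular inference library.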

