Cameras as Rays: Pose Estimation via Ray Diffusion

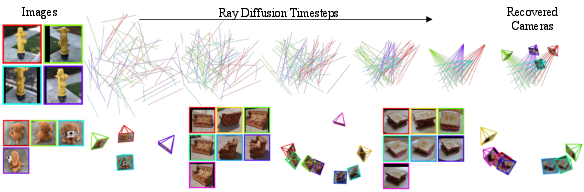

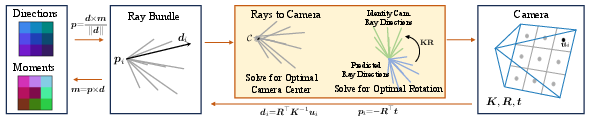

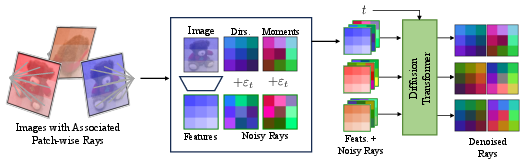

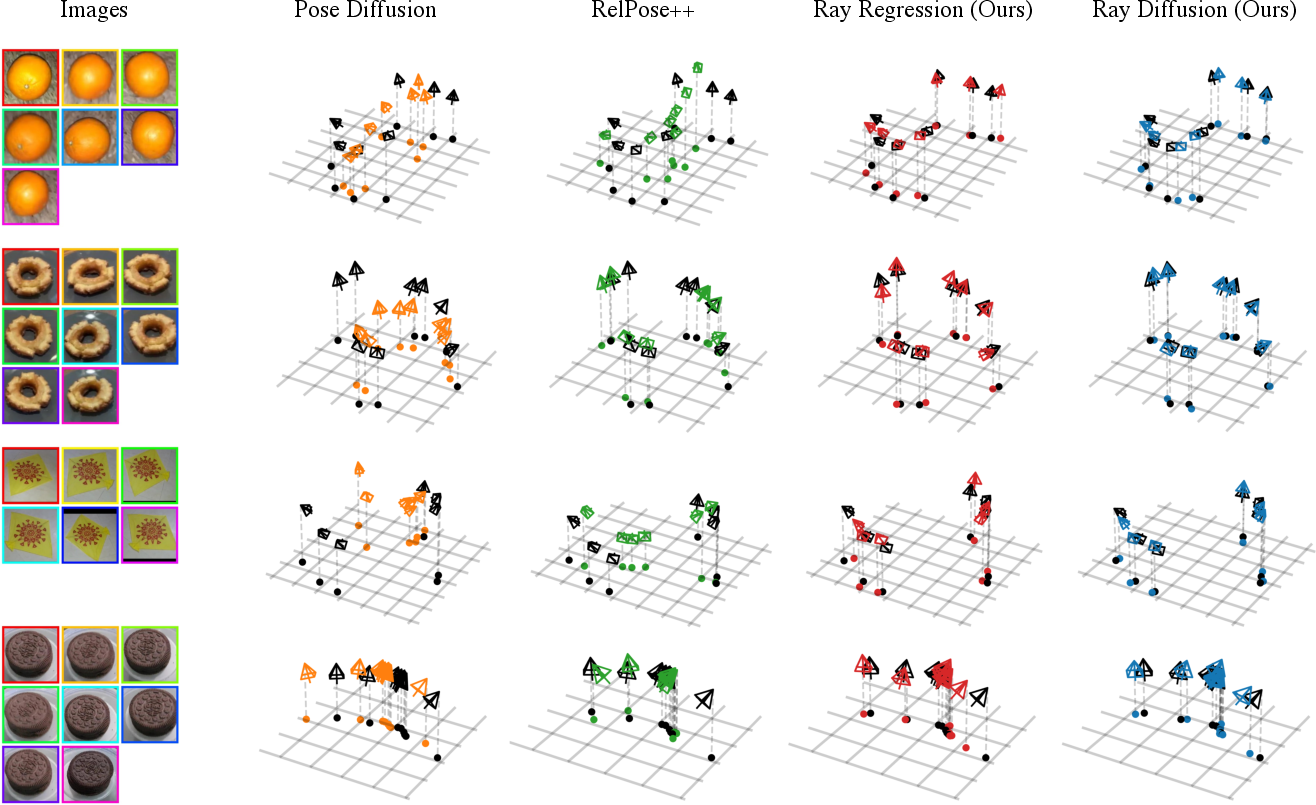

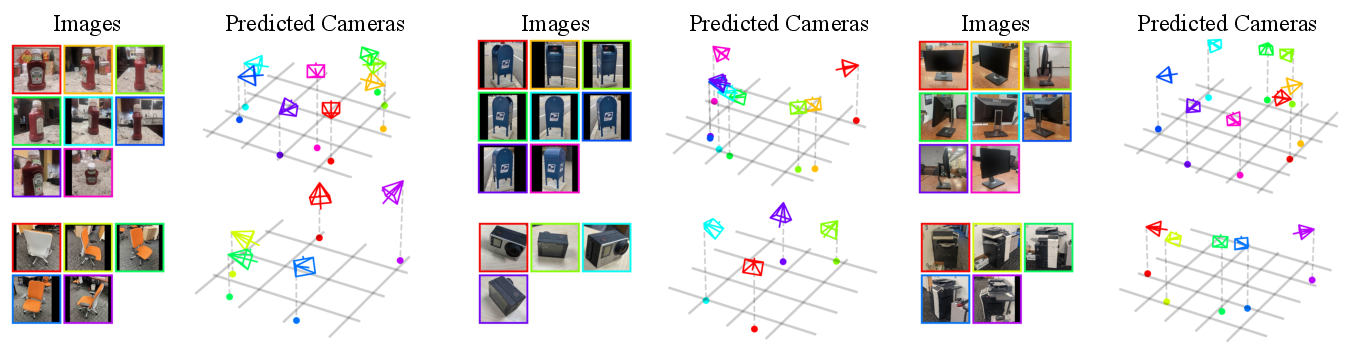

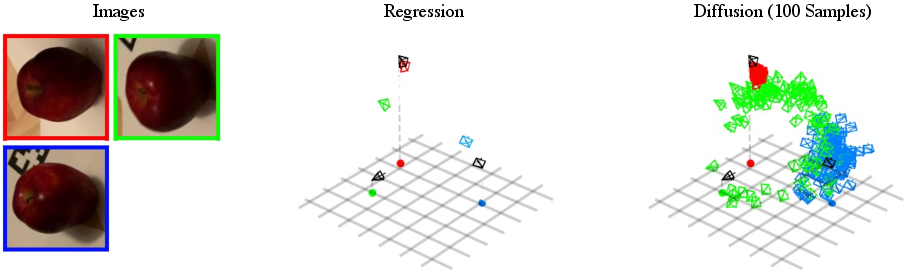

Abstract: Estimating camera poses is a fundamental task for 3D reconstruction and remains challenging given sparsely sampled views (<10). In contrast to existing approaches that pursue top-down prediction of global parametrizations of camera extrinsics, we propose a distributed representation of camera pose that treats a camera as a bundle of rays. This representation allows for a tight coupling with spatial image features, improving pose precision. We observe that this representation is naturally suited for set-level transformers and develop a regression-based approach that maps image patches to corresponding rays. To capture the inherent uncertainties in sparse-view pose inference, we adapt this approach to learn a denoising diffusion model, which allows us to sample plausible modes while improving performance. Our proposed methods, both regression- and diffusion-based, demonstrate state-of-the-art performance on camera pose estimation on CO3D while generalizing to unseen object categories and in-the-wild captures.
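To make the "camera as a bundle of rays" idea concrete, here is a minimal sketch of how a pinhole camera could be turned into one ray per image patch. The abstract does not specify the ray parametrization, so several things here are assumptions for illustration: the 6D Plücker form (unit direction plus moment), the world-to-camera convention `x_cam = R @ x_world + t`, and the hypothetical helper name `camera_to_rays` with its `grid` parameter.

```python
import numpy as np

def camera_to_rays(K, R, t, image_size, grid=4):
    """Return a (grid*grid, 6) array of rays, one per patch centre.

    Each row is <direction, moment> in world coordinates (Plucker form,
    assumed here for illustration). Convention assumed: x_cam = R @ x_world + t.
    """
    width, height = image_size
    # Pixel coordinates of the patch centres on a grid x grid layout.
    us = (np.arange(grid) + 0.5) * width / grid
    vs = (np.arange(grid) + 0.5) * height / grid
    u, v = np.meshgrid(us, vs)
    pixels = np.stack([u.ravel(), v.ravel(), np.ones(grid * grid)], axis=0)  # (3, P)

    # Unproject pixels to camera-frame directions, then rotate into the world frame.
    dirs = R.T @ np.linalg.inv(K) @ pixels               # (3, P)
    dirs = (dirs / np.linalg.norm(dirs, axis=0)).T       # (P, 3), unit length

    # Camera centre in world coordinates; every ray passes through it.
    centre = -R.T @ t
    moments = np.cross(centre, dirs)                     # (P, 3), m = c x d
    return np.concatenate([dirs, moments], axis=1)

# Example: identity rotation, camera centre at (0, 0, -2), 100x100 image.
K = np.array([[100.0, 0.0, 50.0], [0.0, 100.0, 50.0], [0.0, 0.0, 1.0]])
rays = camera_to_rays(K, np.eye(3), np.array([0.0, 0.0, 2.0]), (100, 100), grid=3)
print(rays.shape)
```

Because every patch gets its own ray, the pose is spread over the same spatial grid as the image features. That layout is what lets a model predict one ray per patch instead of a single global rotation and translation.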

