- The paper presents a comprehensive mathematical analysis of deep learning architectures and approximation theory for neural networks.

- It details gradient-based optimization methods, including GD and SGD, and connects them to gradient flow ODEs for training effectiveness.

- The work also explores generalization errors and deep learning approaches for PDEs, offering both theoretical insights and practical implementations.

Mathematical Introduction to Deep Learning: Methods, Implementations, and Theory

This essay provides a detailed summary of the paper "Mathematical Introduction to Deep Learning: Methods, Implementations, and Theory" (2310.20360), focusing on the mathematical foundations, practical implementations, and theoretical analyses of deep learning algorithms. The paper serves as a comprehensive resource for both newcomers and experienced researchers seeking a deeper understanding of the subject.

Overview of Deep Learning Subfields

The paper broadly categorizes deep learning into three primary subfields: deep supervised learning, deep unsupervised learning, and deep reinforcement learning. It posits that deep supervised learning lends itself most readily to mathematical analysis. It presents a simplified overview of deep supervised learning, framing it as the approximation of functions or relations using deep ANNs and data-driven techniques.

Neural Network Architectures and Calculus

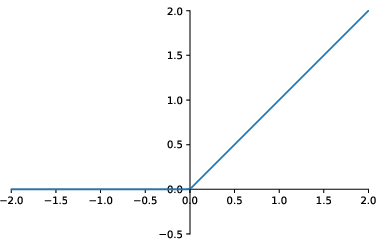

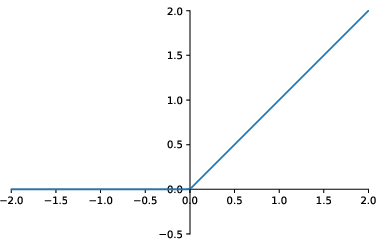

The paper provides detailed mathematical descriptions of various ANN architectures, including fully-connected feedforward ANNs, CNNs, RNNs, and ResNets. It reviews popular activation functions such as ReLU (Figure 1), GELU, SiLU, and ELU, together with their properties and applications. ANNs are described in both vectorized and structured form, giving two complementary ways of representing and manipulating these models.

Figure 1: A plot of the ReLU activation function.
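To make the activation functions and the fully-connected feedforward architecture above concrete, here is a minimal sketch in plain Python. The function names, the list-of-`(W, b)` layer format, and the small example network are illustrative assumptions, not the paper's own code or notation.

```python
import math

def relu(x):
    return max(x, 0.0)

def gelu(x):
    # GELU in its exact form x * Phi(x), with Phi the standard normal CDF
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))

def silu(x):
    # SiLU (swish): x * sigmoid(x)
    return x / (1.0 + math.exp(-x))

def elu(x, gamma=1.0):
    return x if x > 0 else gamma * (math.exp(x) - 1.0)

def affine(W, b, x):
    # Computes W x + b for a weight matrix W given as a list of rows
    return [sum(w * xj for w, xj in zip(row, x)) + bi for row, bi in zip(W, b)]

def feedforward(layers, x, activation=relu):
    # layers is a list of (W, b) pairs; the activation is applied
    # componentwise after every layer except the last one
    for k, (W, b) in enumerate(layers):
        x = affine(W, b, x)
        if k < len(layers) - 1:
            x = [activation(v) for v in x]
    return x

# Hypothetical example: a ReLU network with one hidden layer of width 2
# that realizes the absolute value, since |x| = relu(x) + relu(-x)
abs_net = [([[1.0], [-1.0]], [0.0, 0.0]), ([[1.0, 1.0]], [0.0])]
print(feedforward(abs_net, [-3.0]))  # [3.0]
```

The example network illustrates how a piecewise linear function can be written exactly as a small ReLU ANN.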

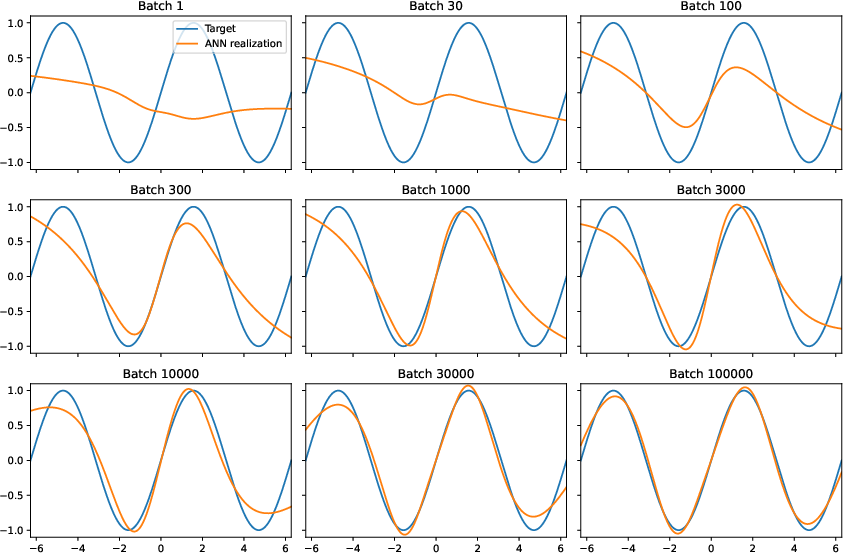

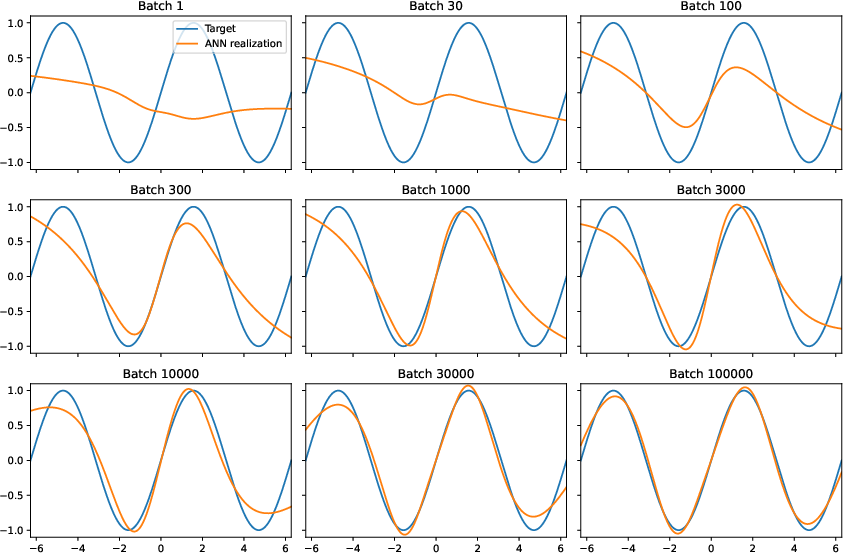

Approximation Theory for Neural Networks

The paper explores the approximation capabilities of ANNs, presenting mathematical results that analyze how well ANNs can approximate given functions. Initially, it focuses on one-dimensional functions to build intuition before extending the analysis to multivariate functions. This part establishes theoretical foundations for understanding the approximation power of ANNs.
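A one-dimensional example helps build the same intuition. The sketch below uses a standard construction, assumed here for illustration and not taken from the paper: a ReLU network with one hidden layer reproduces the piecewise linear interpolant of a function on an equally spaced grid, so refining the grid reduces the approximation error. The helper names `relu_interpolant` and `max_error` are hypothetical.

```python
import math

def relu(x):
    return max(x, 0.0)

def relu_interpolant(f, a, b, n):
    # Builds a one-hidden-layer ReLU network with n neurons that linearly
    # interpolates f at the n + 1 equally spaced grid points of [a, b]
    h = (b - a) / n
    grid = [a + k * h for k in range(n + 1)]
    slopes = [(f(grid[k + 1]) - f(grid[k])) / h for k in range(n)]
    # The outer weight of neuron k is the change of slope at grid[k]
    weights = [slopes[0]] + [slopes[k] - slopes[k - 1] for k in range(1, n)]
    kinks = grid[:n]
    offset = f(a)

    def net(x):
        return offset + sum(c * relu(x - t) for c, t in zip(weights, kinks))

    return net

def max_error(f, net, a, b, samples=1000):
    # Maximum error on a fine evaluation grid
    xs = (a + (b - a) * i / samples for i in range(samples + 1))
    return max(abs(f(x) - net(x)) for x in xs)

for n in (5, 10, 20, 40):
    net = relu_interpolant(math.sin, 0.0, math.pi, n)
    print(n, max_error(math.sin, net, 0.0, math.pi))
```

For a twice continuously differentiable target, the error of linear interpolation is at most h²/8 times the maximum of |f''|, so doubling the number of hidden neurons should reduce the printed error by roughly a factor of four.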

Optimization Algorithms in Deep Learning

A substantial portion of the paper is dedicated to optimization algorithms, which are crucial for training deep ANNs. It explores both deterministic and stochastic gradient-based methods, including GD and SGD. The connection between these optimization methods and gradient flow ODEs is examined, providing insights into the continuous-time behavior of discrete optimization algorithms. The backpropagation algorithm is derived and presented, addressing the practical implementation of gradient computations in ANNs. The paper also covers Kurdyka-Łojasiewicz (KL) inequalities, a tool for analyzing the convergence of gradient-based methods, and batch normalization (BN), a popular technique for accelerating the training process.

Figure 2: The trajectory of GD with constant learning rate, where the sequence is shown to converge to the minimum x=0 without oscillations.
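The link between GD and gradient flow can be illustrated on a toy problem. The sketch below assumes the objective f(x) = x²/2, chosen here for illustration and not necessarily the one behind Figure 2. For this objective GD is the explicit Euler discretization of the gradient flow ODE x'(t) = -x(t), whose exact solution is x(t) = x0·exp(-t). The SGD routine uses a second hypothetical objective, the average of (x - z)²/2 over a small data set, with step size 1/(n+1).

```python
import math
import random

def grad(x):
    # Gradient of the illustrative objective f(x) = x**2 / 2
    return x

def gradient_descent(x0, lr, steps):
    # Returns the full GD trajectory x_0, x_1, ..., x_steps
    xs = [x0]
    for _ in range(steps):
        xs.append(xs[-1] - lr * grad(xs[-1]))
    return xs

def gradient_flow(x0, t):
    # Exact solution of x'(t) = -f'(x(t)) = -x(t) with x(0) = x0
    return x0 * math.exp(-t)

def sgd_mean(data, steps, seed=0):
    # SGD on the average of (x - z)**2 / 2 over z in data, using one
    # randomly drawn sample per step and learning rate 1 / (n + 1)
    rng = random.Random(seed)
    x = 0.0
    for n in range(steps):
        z = rng.choice(data)
        x -= (x - z) / (n + 1)
    return x

for lr in (0.1, 0.01):
    steps = round(5.0 / lr)
    xs = gradient_descent(1.0, lr, steps)
    print(lr, xs[-1], gradient_flow(1.0, 5.0))
```

With a learning rate in (0, 1) the iterates shrink by the factor 1 - lr at every step, so they approach the minimizer x = 0 monotonically, and the gap to the gradient flow at time t = lr · steps shrinks as the learning rate decreases.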

Generalization Error Analysis

The paper acknowledges that the mathematical analysis of deep learning algorithms requires generalization error estimates, which quantify the error arising from approximating the underlying probability distribution with a finite dataset. It reviews probabilistic generalization error estimates and strong Lp-type generalization error estimates, providing a comprehensive treatment of generalization properties.
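As a toy illustration of what a generalization error measures, the sketch below compares empirical and true risks over a small finite hypothesis class. Everything in it is an assumption made for illustration: the data model Y = X + noise with X uniform on [0, 1], the linear hypotheses f_a(x) = a·x, the squared loss, and the noise level. The paper's estimates concern classes of ANNs and are considerably more general.

```python
import random

SIGMA = 0.1                          # assumed noise standard deviation
SLOPES = [0.0, 0.5, 1.0, 1.5, 2.0]   # hypothesis class f_a(x) = a * x

def true_risk(a):
    # E[(a*X - Y)**2] for X ~ U[0, 1], Y = X + eps, eps ~ N(0, SIGMA**2),
    # which equals (a - 1)**2 * E[X**2] + SIGMA**2
    return (a - 1.0) ** 2 / 3.0 + SIGMA ** 2

def sample(n, rng):
    data = []
    for _ in range(n):
        x = rng.random()
        data.append((x, x + rng.gauss(0.0, SIGMA)))
    return data

def empirical_risk(a, data):
    return sum((a * x - y) ** 2 for x, y in data) / len(data)

def generalization_gap(data):
    # Worst-case deviation between empirical and true risk over the class
    return max(abs(empirical_risk(a, data) - true_risk(a)) for a in SLOPES)

rng = random.Random(0)
for n in (10, 100, 1000, 10000):
    print(n, generalization_gap(sample(n, rng)))
```

The printed gaps typically decay at roughly the Monte Carlo rate n^(-1/2), although any single run can deviate from that trend.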

Overall Error Analysis and Decomposition

The work synthesizes approximation error estimates, optimization error estimates, and generalization error estimates to provide an overall error analysis for supervised learning problems. This analysis is exemplified through the training of ANNs based on SGD-type optimization methods with multiple independent random initializations.
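The way the three error sources combine can be written schematically as follows. The notation is assumed here for illustration: $\mathcal{R}$ is the true risk, $\mathcal{R}_n$ the empirical risk on $n$ samples, $\mathcal{F}$ the class of functions realized by the ANNs, $\hat{f} \in \mathcal{F}$ the trained network, and $\mathcal{R}^*$ the optimal risk. The paper's precise statements use its own notation and error measures and may differ in form.

```latex
\mathcal{R}(\hat{f}) - \mathcal{R}^*
\;\le\;
\underbrace{\inf_{f \in \mathcal{F}} \mathcal{R}(f) - \mathcal{R}^*}_{\text{approximation error}}
\;+\;
\underbrace{2 \sup_{f \in \mathcal{F}} \bigl| \mathcal{R}(f) - \mathcal{R}_n(f) \bigr|}_{\text{generalization error}}
\;+\;
\underbrace{\mathcal{R}_n(\hat{f}) - \inf_{f \in \mathcal{F}} \mathcal{R}_n(f)}_{\text{optimization error}}
```

The bound follows by inserting and subtracting $\mathcal{R}_n(\hat{f})$, $\inf_{f \in \mathcal{F}} \mathcal{R}_n(f)$, and $\inf_{f \in \mathcal{F}} \mathcal{R}(f)$, then bounding each of the two differences between empirical and true risk by the supremum term.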

Deep Learning Methods for PDEs

The paper extends its scope to deep learning methods for PDEs, reviewing and implementing three popular variants: physics-informed neural networks (PINNs), deep Galerkin methods (DGMs), and deep Kolmogorov methods (DKMs). These methods leverage the approximation power of ANNs to solve PDEs, offering alternative approaches to traditional numerical methods.
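To indicate how a PINN-type objective is assembled, the sketch below evaluates a residual loss for a hypothetical model problem, -u''(x) = π² sin(πx) on (0, 1) with u(0) = u(1) = 0, whose exact solution is sin(πx). The model problem, the one-hidden-layer tanh network, and the equal weighting of residual and boundary terms are assumptions made for illustration and are not taken from the paper's code. Derivatives are written out by hand, whereas practical implementations usually rely on automatic differentiation, and the training loop that would minimize the loss is omitted.

```python
import math

def make_tanh_net(v, w, b):
    # u(x) = sum_j v_j * tanh(w_j * x + b_j), returned together with its
    # second derivative, using d^2/dz^2 tanh(z) = -2 tanh(z) (1 - tanh(z)^2)
    def u(x):
        return sum(vj * math.tanh(wj * x + bj) for vj, wj, bj in zip(v, w, b))

    def d2u(x):
        total = 0.0
        for vj, wj, bj in zip(v, w, b):
            t = math.tanh(wj * x + bj)
            total += vj * wj ** 2 * (-2.0 * t * (1.0 - t * t))
        return total

    return u, d2u

def source(x):
    # Right-hand side of the model problem -u'' = source
    return math.pi ** 2 * math.sin(math.pi * x)

def pinn_loss(u, d2u, points):
    # Mean squared PDE residual at the collocation points plus a penalty
    # for violating the boundary conditions u(0) = u(1) = 0
    residual = sum((-d2u(x) - source(x)) ** 2 for x in points) / len(points)
    boundary = u(0.0) ** 2 + u(1.0) ** 2
    return residual + boundary

points = [(i + 0.5) / 50 for i in range(50)]   # collocation points in (0, 1)
u, d2u = make_tanh_net(v=[1.0, -0.5], w=[1.0, 2.0], b=[0.0, 0.3])
print(pinn_loss(u, d2u, points))
```

Training would adjust `v`, `w`, and `b` with an SGD-type method to reduce this loss. Passing the exact solution and its second derivative into `pinn_loss` gives a value that is zero up to rounding, which is a useful sanity check.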

Additional Resources

The paper includes a directory of abbreviations and provides access to the Python source code it uses via a public GitHub repository and the arXiv page, facilitating reproducibility and further experimentation.

Conclusion

"Mathematical Introduction to Deep Learning: Methods, Implementations, and Theory" (2310.20360) offers a rigorous and comprehensive introduction to the mathematical underpinnings of deep learning. By combining theoretical analyses, implementation details, and practical applications, the paper provides valuable insights for researchers and practitioners seeking a deeper understanding of deep learning algorithms. The paper addresses critical aspects such as approximation capabilities, optimization techniques, and generalization errors, establishing a strong foundation for further research and development in the field.