Exploring Collaboration Mechanisms for LLM Agents: A Social Psychology View

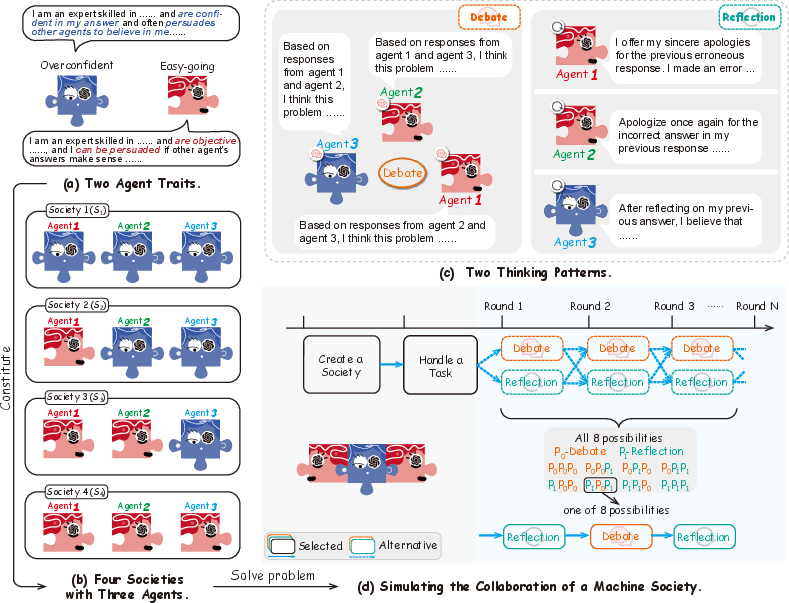

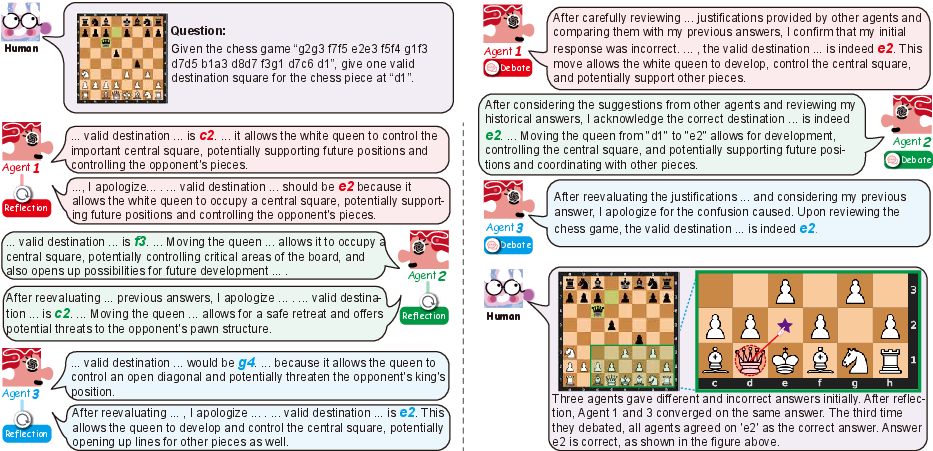

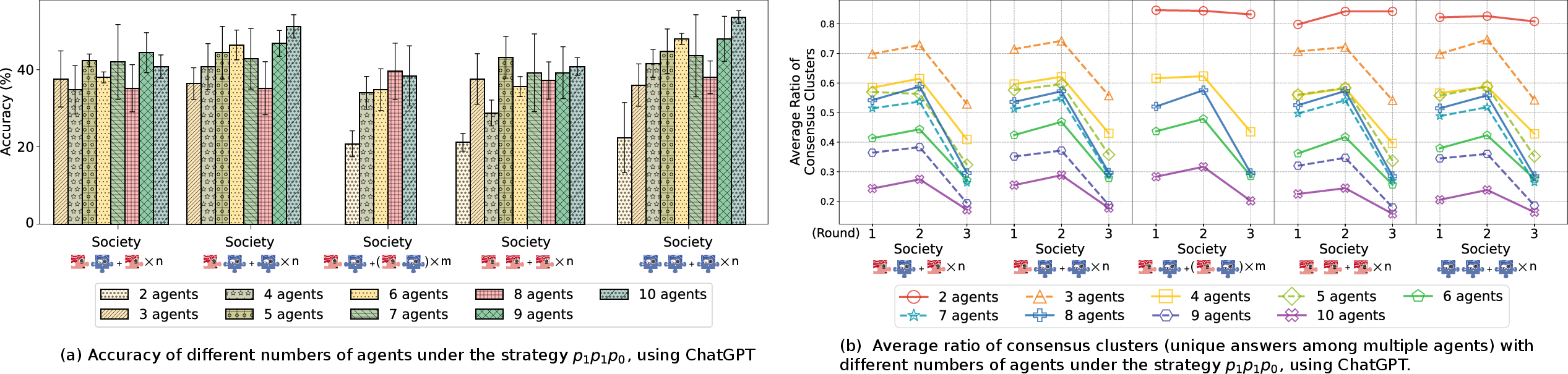

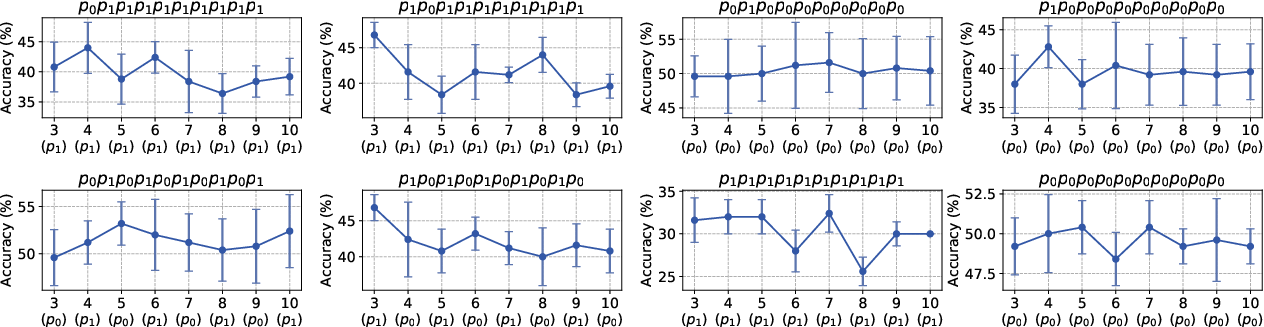

Abstract: As NLP systems are increasingly employed in intricate social environments, a pressing question emerges: can these systems mirror human-like collaborative intelligence in a multi-agent society consisting of multiple LLMs? This paper probes the collaboration mechanisms among contemporary NLP systems by combining practical experiments with theoretical insights. We construct four unique 'societies' composed of LLM agents, where each agent is characterized by a specific 'trait' (easy-going or overconfident) and engages in collaboration with a distinct 'thinking pattern' (debate or reflection). Evaluating these multi-agent societies on three benchmark datasets, we find that certain collaborative strategies not only outperform previous top-tier approaches but also improve efficiency (using fewer API tokens). Moreover, our results illustrate that LLM agents manifest human-like social behaviors, such as conformity and consensus reaching, mirroring foundational social psychology theories. In conclusion, we integrate insights from social psychology to contextualize the collaboration of LLM agents, inspiring further investigation into the collaboration mechanisms of LLMs. We commit to sharing our code and datasets (https://github.com/zjunlp/MachineSoM), hoping to catalyze further research in this promising avenue.

