- The paper presents a novel framework for evaluating the stability of semantic concept representations in CNNs by combining supervised and unsupervised techniques.

- Experiments demonstrate that 1D-CAVs offer superior stability through enhanced concept separation and resistance to gradient instability compared to higher-dimensional representations.

- The study recommends using at least 40-60 samples per concept for CAV training and applying gradient smoothing in shallow layers to improve concept attribution stability.

Evaluating Stability of Concept Representations for Explainability

This paper (arXiv:2304.14864) introduces a framework and metrics for evaluating the stability of semantic concept representations in CNNs, particularly in the context of explainable AI (XAI) for safety-critical systems such as those used in automated driving. It addresses the stability of both concept retrieval and concept attribution, and adapts concept analysis (CA) methods to object detection CNNs.

Proposed Methodology

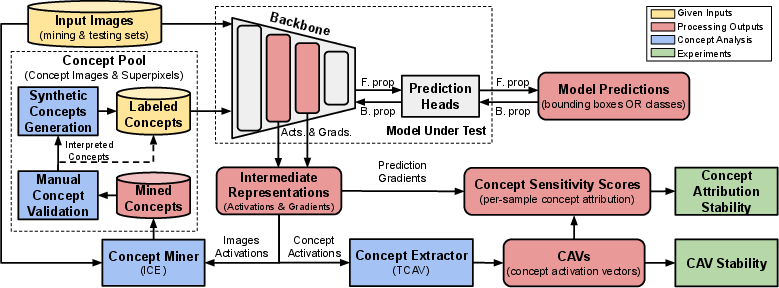

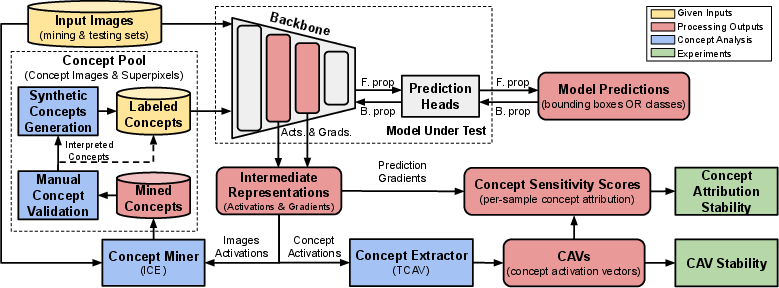

The framework combines supervised and unsupervised CA techniques to retrieve interpretable concepts in a label-efficient way and to use them for explaining both classification and object detection backbones. The overall framework for CAV stability and concept attribution stability is shown in Figure 1.

Figure 1: The framework for estimation of CAV stability and concept attribution stability. The proposed solution utilizes unsupervised ICE to aid concept discovery and labeling, while supervised TCAV is used for the generation of concept representations.

Concept Pool

The methodology builds an extensible concept pool containing human-validated concepts mined from the trained model under test, alongside existing manually labeled concepts. Unsupervised concept mining is employed to streamline manual annotation and accelerate concept labeling. The paper uses ICE to extract image patches that produce recurring patterns in the CNN's activations. The sets of mined image patches then undergo manual concept validation, in which a human annotator assigns a label to each mined concept. Interpreted concepts, if meaningful, can either be added directly to the set of labeled concepts or be used in synthetic concept generation to obtain more complex synthetic concept samples.
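To our understanding, ICE (Invertible Concept-based Explanations) mines concepts by factorizing non-negative (post-ReLU) layer activations with non-negative matrix factorization (NMF). The sketch below illustrates that idea in plain Python under this assumption: each row of the activation matrix stands for one image patch, the factor `w` scores every patch for every mined concept, and the highest-scoring patches are what a human annotator would inspect during concept validation. The function names and toy data are hypothetical and are not taken from the paper's code.

```python
import random


def matmul(a, b):
    # Plain-Python matrix product of a (n x m) and b (m x p).
    return [[sum(a[i][t] * b[t][j] for t in range(len(b))) for j in range(len(b[0]))]
            for i in range(len(a))]


def transpose(m):
    return [list(col) for col in zip(*m)]


def nmf(v, k, iters=1000, seed=0, eps=1e-9):
    """Factor non-negative v (n x c) as w (n x k) @ h (k x c) via Lee-Seung multiplicative updates."""
    rng = random.Random(seed)
    n, c = len(v), len(v[0])
    w = [[rng.random() + 0.1 for _ in range(k)] for _ in range(n)]
    h = [[rng.random() + 0.1 for _ in range(c)] for _ in range(k)]
    for _ in range(iters):
        # H <- H * (W^T V) / (W^T W H): update the concept directions.
        wt = transpose(w)
        num, den = matmul(wt, v), matmul(matmul(wt, w), h)
        h = [[h[i][j] * num[i][j] / (den[i][j] + eps) for j in range(c)] for i in range(k)]
        # W <- W * (V H^T) / (W H H^T): update the per-patch concept scores.
        ht = transpose(h)
        num, den = matmul(v, ht), matmul(w, matmul(h, ht))
        w = [[w[i][j] * num[i][j] / (den[i][j] + eps) for j in range(k)] for i in range(n)]
    return w, h


def top_patches(w, concept, m):
    """Indices of the m patches (rows) with the highest score for one mined concept."""
    return sorted(range(len(w)), key=lambda i: w[i][concept], reverse=True)[:m]
```

On toy activations where patches 0-2 fire channels 0-1 and patches 3-5 fire channels 2-3, `nmf(acts, k=2)` recovers one mined concept per patch group, and `top_patches` returns each group for annotation.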

Concept Stability Analysis

Supervised CA is performed to obtain CAVs for the concepts in the pool. CAV training uses concept activations, i.e., the CNN activations of concept images from the labeled concepts in the concept pool. Per-sample concept attribution is then calculated with backpropagation-based sensitivity methods. The resulting CAVs and concept sensitivity scores serve both local and global explanation purposes. The paper uses TCAV as the baseline global concept vector representation for stability estimation: a binary linear classifier is trained to predict the presence of a concept from the intermediate neuron activations in the selected CNN layer, and the classifier weights serve as the CAV. For concept attribution, the sensitivity score calculation of TCAV (Kim et al., 2018) is adopted.
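The TCAV steps above can be sketched as follows, assuming a logistic-regression probe trained by plain gradient descent (TCAV implementations often use an off-the-shelf linear classifier instead). The CAV is the unit-normalized weight vector, the per-sample sensitivity is the directional derivative of the class logit along the CAV, and the TCAV score is the fraction of samples with positive sensitivity. The gradients are passed in directly here; in a real CNN they would come from backpropagating the class logit to the chosen layer. All names are hypothetical.

```python
import math
import random


def train_cav(concept_acts, random_acts, epochs=500, lr=0.1, seed=0):
    """Fit a logistic-regression probe (concept=1 vs. random=0); its unit-norm weights are the CAV."""
    data = [(a, 1.0) for a in concept_acts] + [(a, 0.0) for a in random_acts]
    rng = random.Random(seed)
    dim = len(data[0][0])
    w = [rng.gauss(0.0, 0.01) for _ in range(dim)]
    b = 0.0
    for _ in range(epochs):
        gw, gb = [0.0] * dim, 0.0
        for a, y in data:
            p = 1.0 / (1.0 + math.exp(-(sum(wi * ai for wi, ai in zip(w, a)) + b)))
            for d in range(dim):
                gw[d] += (p - y) * a[d]  # gradient of the mean log-loss w.r.t. w
            gb += p - y
        w = [wi - lr * g / len(data) for wi, g in zip(w, gw)]
        b -= lr * gb / len(data)
    norm = math.sqrt(sum(wi * wi for wi in w))
    return [wi / norm for wi in w]


def concept_sensitivity(grad_wrt_acts, cav):
    """Directional derivative: gradient of the class logit w.r.t. layer activations, projected on the CAV."""
    return sum(g * v for g, v in zip(grad_wrt_acts, cav))


def tcav_score(grads, cav):
    """Fraction of samples whose concept sensitivity is positive."""
    return sum(concept_sensitivity(g, cav) > 0 for g in grads) / len(grads)
```

For instance, if concept activations differ from random ones only in the first unit, the learned CAV points almost entirely along that unit, and a gradient of `[1.0, 0.0, 0.0]` yields a positive sensitivity.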

Object Detection Adaptation

The paper adapts CA to object detection (OD) by addressing two challenges: multiple predictions per image and suppressive post-processing. To handle multiple predictions, the concept weight and importance estimation component of ICE requires adjustment: the pipeline assesses the effect of small modifications of each concept on the final class prediction on a per-bounding-box basis. For TCAV, concept sensitivity is computed for each bounding box by starting backpropagation from the desired class neuron of that box. Because a false negative (FN) has no box in the post-processed output, concept sensitivity for FNs is evaluated by comparing the list of raw, unprocessed bounding boxes with the desired object bounding boxes and using IoU to select the raw boxes that best match the desired ones.
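The FN matching step can be sketched as below: for each desired box, pick the raw (pre-post-processing) box with the highest IoU, from which backpropagation would then start. Boxes are assumed to be `(x1, y1, x2, y2)` tuples, and the `min_iou` threshold for rejecting poor matches is a hypothetical parameter, not a value from the paper.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(a[0], b[0]), max(a[1], b[1])
    ix2, iy2 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def match_raw_boxes(raw_boxes, target_boxes, min_iou=0.5):
    """For each desired box, the index of the best-overlapping raw box, or None if IoU < min_iou."""
    matches = []
    for t in target_boxes:
        scores = [iou(r, t) for r in raw_boxes]
        best = max(range(len(raw_boxes)), key=scores.__getitem__, default=None)
        matches.append(best if best is not None and scores[best] >= min_iou else None)
    return matches
```

A desired object with no sufficiently overlapping raw box gets `None`, so no per-box sensitivity would be computed for it.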

Concept Stability Metrics

The paper introduces a generalized concept stability metric $S_{L_k}^C(X)$ for a concept $C$ in layer $L_k$, applicable to a test set $X$:

$S_{L_k}^C(X) \coloneqq \text{separability}_{L_k}^C(X) \times \text{consistency}_{L_k}^C$

where $\text{separability}_{L_k}^C(X)$ measures how well the tested concepts are separated from each other in the feature space, and $\text{consistency}_{L_k}^C$ measures how similar the representations of the same concept are when obtained under different initialization conditions. Separability is quantified as the mean of the relative F1-scores on $X$ of the CAVs $\text{CAV}_{L_k,i}^C$ of $C$ in layer $L_k$ over $N$ runs $i$ with different initialization conditions for CAV training:

$\text{separability}_{L_k}^C(X) \coloneqq \text{f1}_{L_k}^C(X) \coloneqq \frac{1}{N}\sum_{i=1}^{N} \text{f1}(\text{CAV}_{L_k,i}^C; X) \in [0,1]$

Consistency is measured as the mean pairwise cosine similarity between the CAVs obtained in layer $L_k$ over the $N$ runs:

$\text{consistency}_{L_k}^C \coloneqq \cos_{L_k}^C \coloneqq \frac{2}{N(N-1)}\sum_{i=1}^{N}\sum_{j=1}^{i-1} \cos\left(\text{CAV}_{L_k,i}^C, \text{CAV}_{L_k,j}^C\right)$
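The stability metric can be computed directly from its definitions. The sketch below assumes each of the $N$ CAV runs is stored as a linear classifier `(weights, bias)` that predicts the concept when `weights · a + bias > 0`; separability is the mean F1 over runs, consistency is the mean cosine similarity over the $N(N-1)/2$ pairs of runs, and stability is their product. Names and the storage format are hypothetical.

```python
import math
from itertools import combinations


def cosine(u, v):
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def f1(y_true, y_pred):
    tp = sum(1 for t, p in zip(y_true, y_pred) if t and p)
    fp = sum(1 for t, p in zip(y_true, y_pred) if not t and p)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t and not p)
    return 2 * tp / (2 * tp + fp + fn) if tp else 0.0


def separability(runs, acts, labels):
    """Mean F1 over the N CAV runs on test activations X with binary concept labels."""
    scores = []
    for w, b in runs:
        preds = [sum(wi * ai for wi, ai in zip(w, a)) + b > 0 for a in acts]
        scores.append(f1(labels, preds))
    return sum(scores) / len(scores)


def consistency(runs):
    """Mean pairwise cosine similarity, i.e. 2 / (N(N-1)) times the sum over pairs j < i."""
    pairs = list(combinations([w for w, _ in runs], 2))
    return sum(cosine(u, v) for u, v in pairs) / len(pairs)


def stability(runs, acts, labels):
    """S = separability x consistency for one concept in one layer."""
    return separability(runs, acts, labels) * consistency(runs)
```

With three runs whose weights are `[1, 0]`, `[1, 1]`, and `[1, -1]`, all separating the test set perfectly, separability is 1 and consistency is $(2/\sqrt{2} + 0)/3 = \sqrt{2}/3$, so the product penalizes the disagreement between runs even though every run classifies correctly.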

Concept attribution stability is evaluated by comparing the vanilla gradient approach with a stabilized version based on SmoothGrad. The paper introduces the Concept Attribution Deviation (CAD) metric to quantify the difference between the concept attributions obtained with SmoothGrad and with the vanilla gradient in the tested layer:

$\text{CAD}(x) \coloneqq \frac{\sum_{C}\sum_{i=1}^{N} \left| \text{attr}_{C,i}^{\text{grad}}(x) - \text{attr}_{C,i}^{\text{SG}}(x) \right|}{\sum_{C}\sum_{i=1}^{N} \left| \text{attr}_{C,i}^{\text{grad}}(x) \right|}$

where $\text{attr}_{C,i}^{\text{grad}}(x)$ and $\text{attr}_{C,i}^{\text{SG}}(x)$ are the attributions of concept $C$ in layer $L_k$ for a single prediction on sample $x$, computed with the vanilla gradient and with SmoothGrad, respectively.
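A minimal sketch of CAD follows. It makes several simplifying assumptions that are not from the paper: a hypothetical toy head `logit(a) = sum_j tanh(W_j · a)` stands in for the network after layer $L_k$ (so its gradient is analytic), SmoothGrad noise is added to the layer activation rather than to the input image as in the original SmoothGrad, and `cavs` is a flat list with one CAV per $(C, i)$ pair. The attribution of a CAV is the gradient projected onto it.

```python
import math
import random

# Hypothetical toy head standing in for the network layers after L_k.
W = [[1.5, -2.0, 0.5], [0.3, 1.0, -1.2]]


def grad_logit(a):
    """Analytic gradient of logit(a) = sum_j tanh(W_j . a) w.r.t. the activation a."""
    g = [0.0] * len(a)
    for row in W:
        s = 1.0 - math.tanh(sum(w * x for w, x in zip(row, a))) ** 2
        for d, w in enumerate(row):
            g[d] += w * s
    return g


def smoothgrad(a, sigma=0.3, n=50, seed=0):
    """Average gradient over n Gaussian-perturbed copies of the activation."""
    rng = random.Random(seed)
    acc = [0.0] * len(a)
    for _ in range(n):
        noisy = [x + rng.gauss(0.0, sigma) for x in a]
        acc = [s + g for s, g in zip(acc, grad_logit(noisy))]
    return [s / n for s in acc]


def attribution(grad, cav):
    return sum(g * v for g, v in zip(grad, cav))


def cad(a, cavs, **sg_kwargs):
    """Summed |vanilla - SmoothGrad| attributions over all CAVs, normalized by summed |vanilla|."""
    g_vanilla, g_smooth = grad_logit(a), smoothgrad(a, **sg_kwargs)
    num = sum(abs(attribution(g_vanilla, c) - attribution(g_smooth, c)) for c in cavs)
    den = sum(abs(attribution(g_vanilla, c)) for c in cavs)
    return num / den
```

With `sigma=0.0` SmoothGrad reduces to the vanilla gradient and CAD is (numerically) zero; larger noise levels yield larger deviations wherever the gradient varies strongly around the activation.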

CAV Dimensionality

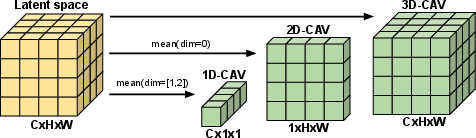

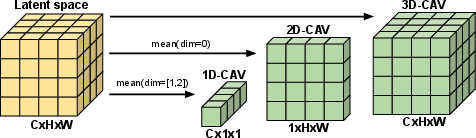

The paper explores the impact of CAV dimensionality on stability, considering 1D, 2D, and 3D CAV representations (Figure 2).

Figure 2: Concept activation vectors (CAVs) of different dimensions.
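The difference between representation dimensionalities can be illustrated by the input a CAV is trained on. The sketch below assumes, as the conclusion's term "aggregated 1D-CAV" suggests, that a 1D-CAV is trained on activations spatially aggregated per channel (here by mean pooling, which is an assumption; the paper's aggregation may differ), while a 3D-CAV uses the full flattened $C \times H \times W$ activation. The 2D variant is omitted because its construction is not described here. Activations are nested lists indexed `[channel][y][x]`.

```python
def flatten_3d(act):
    """3D representation: keep every (channel, y, x) entry -> vector of length C*H*W."""
    return [v for channel in act for row in channel for v in row]


def pool_1d(act):
    """1D representation (assumed): spatial mean per channel -> vector of length C."""
    return [sum(v for row in channel for v in row) / (len(channel) * len(channel[0]))
            for channel in act]
```

For a $2 \times 2 \times 3$ activation, the 3D probe must fit 12 weights while the 1D probe fits only 2, one per channel.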

Experimental Design and Results

The framework is applied to OD and classification models to evaluate how the choice of representation dimensionality affects concept representation stability and to inspect how gradient instability in CNNs affects concept attribution. The MS COCO 2017 dataset is used for unsupervised concept mining in object detectors, while the BRODEN and CycleGAN Zebras datasets are used for the classification model experiments. The paper selects three object detectors (YOLOv5s, Faster R-CNN, and SSD) and three classification models (ResNet50, SqueezeNet1.1, and EfficientNet-B0) with various backbones to evaluate the stability of semantic representations.

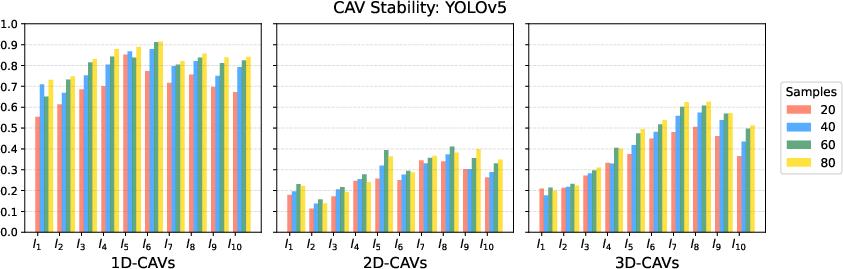

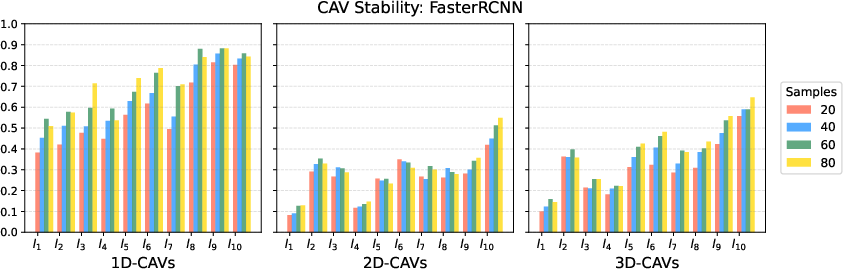

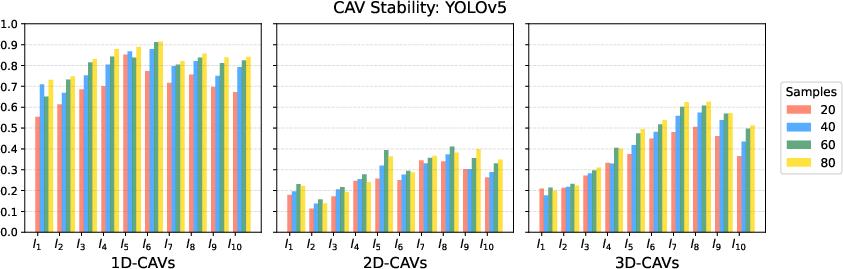

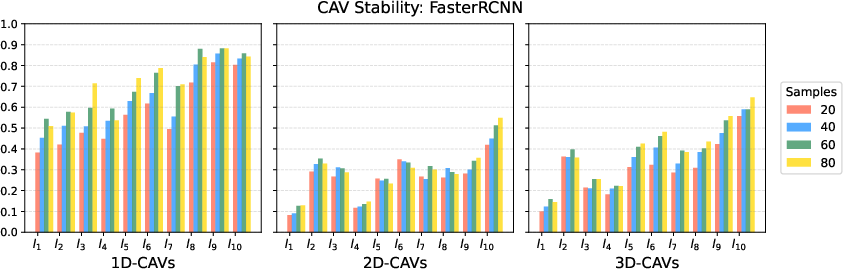

Experiments demonstrate that 1D-CAVs achieve the best overall CAV stability due to their consistency and good concept separation. Increasing the number of training concept images has a positive impact on CAV stability, with a recommendation of at least 40 to 60 concept-related samples for training each CAV. The negative impact of gradient instability on concept analysis using TCAV is found to be minimal. SmoothGrad can have a higher impact on concept attribution values in shallow layers, where gradient instability is higher. The use of 1D-CAV representations generally results in lower CAD values than 3D-CAVs.

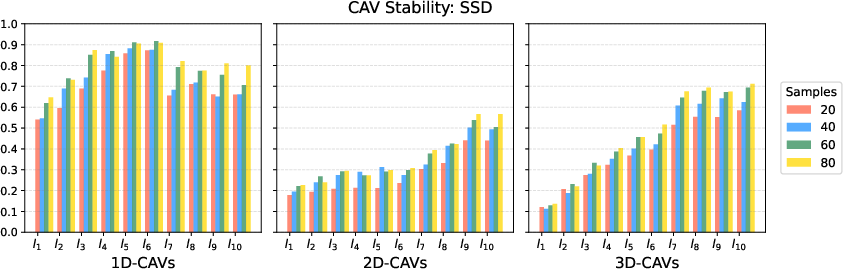

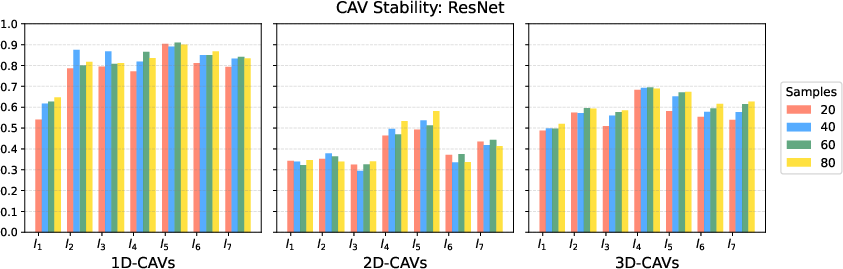

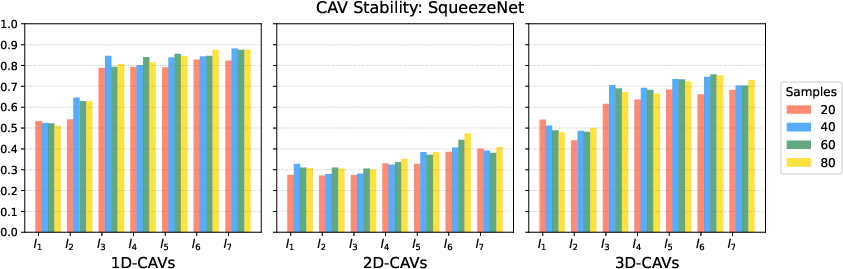

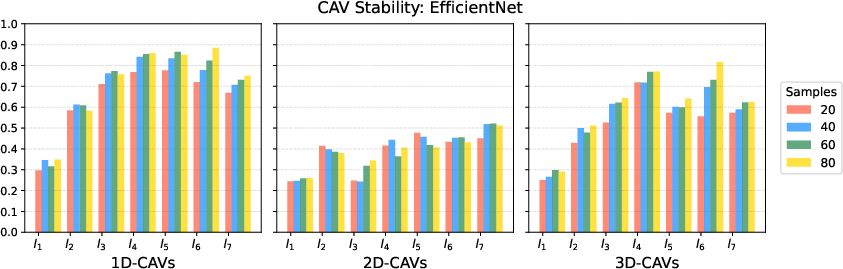

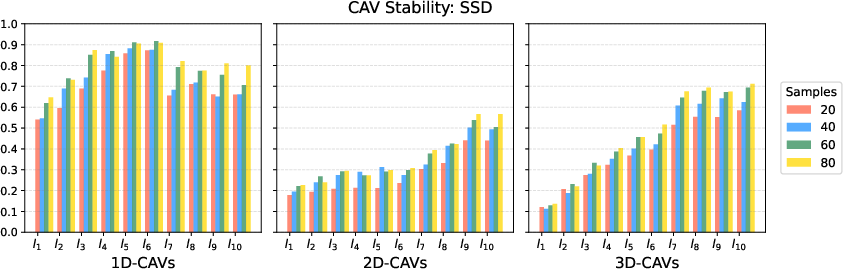

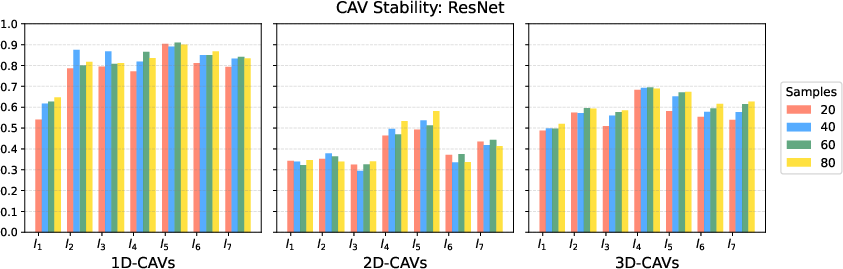

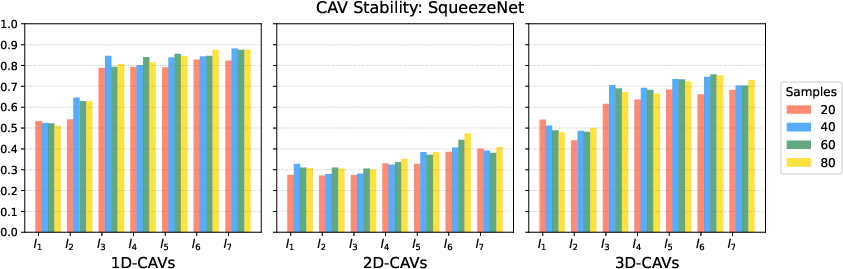

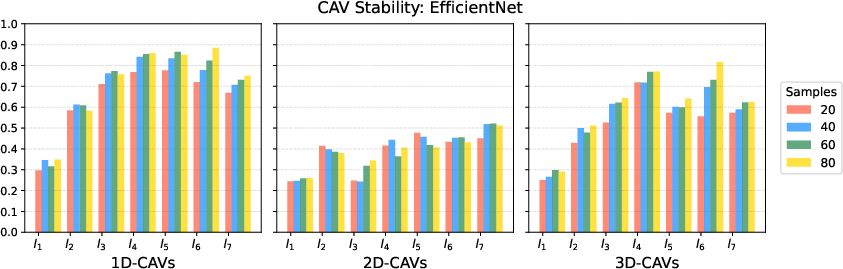

The impact of the number of concept samples on CAV stability for YOLO5, RCNN, SSD, ResNet, SqueezeNet, and EfficientNet is shown in Figures 3, 4, 5, 6, 7, and 8, respectively.

Figure 3: Impact of the number of concept samples on CAV stability for YOLO5

Figure 4: Impact of the number of concept samples on CAV stability for RCNN

Figure 5: Impact of the number of concept samples on CAV stability for SSD

Figure 6: Impact of the number of concept samples on CAV stability for ResNet

Figure 7: Impact of the number of concept samples on CAV stability for SqueezeNet

Figure 8: Impact of the number of concept samples on CAV stability for EfficientNet

Conclusion

This study provides a framework and metrics for evaluating the layer-wise stability of global vector representations in object detection and classification CNN models for explainability purposes. The concept retrieval stability metric jointly evaluates the consistency and the feature-space separation of semantic concept representations. Aggregated 1D-CAV representations offer the best stability. A minimum of 60 training samples per concept is necessary to ensure high stability in most cases. The concept attribution stability metric assesses the impact of gradient smoothing techniques on the stability of concept attribution. 1D-CAVs are more resistant to gradient instability, particularly in deep layers, and the paper recommends applying gradient smoothing in the shallow layers of deep network backbones. The work provides quantitative insights into the robustness of concept representations, which can inform the selection of network layers and concept representations for CA in safety-critical applications.