Overview of "ParroT: Translating during Chat using LLMs tuned with Human Translation and Feedback"

The paper presents ParroT, a framework for enhancing and regulating the translation abilities of LLMs in chat scenarios. It builds on open-source LLMs such as LLaMA and finetunes them on human-written translations and human feedback data, both recast into a structured instruction-following format.

Contributions and Methodology

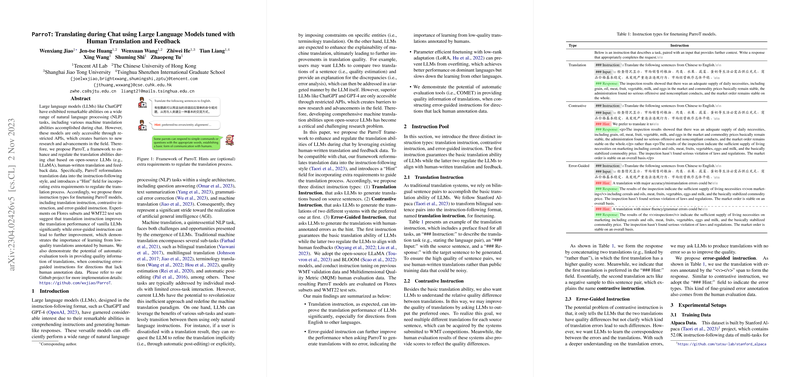

ParroT finetunes the LLM with three types of instruction: translation instruction, contrastive instruction, and error-guided instruction. Together they align the model's output with translations that humans judge to be good:

- Translation Instruction: This form uses high-quality human-written translations to ensure the model's translation proficiency. Such instructions define the language pair and utilize bilingual sentence pairs to guide the model's translation generation.

- Contrastive Instruction: This instruction type distinguishes between translations of varied quality from different systems, allowing the model to recognize preferred outputs. The framework uses a hint to indicate the more desirable translation, directing the LLM to prioritize certain qualities over others.

- Error-Guided Instruction: Drawing on human feedback data, this type pairs a translation with its annotated errors, such as mistranslations and grammatical issues. The model learns to associate error types with the translations that contain them, so that it can steer away from low-quality output and toward accurate translations.
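The three instruction forms above can be sketched as small prompt builders. This is a minimal illustration, not the paper's exact template: the `### Instruction` / `### Input` / `### Hint` / `### Response` layout, the hint wording, and all names (`Example`, `render`, `translation_example`, `contrastive_example`, `error_guided_example`) are assumptions made for this sketch.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass
class Example:
    """One finetuning example. Field names are hypothetical."""
    instruction: str
    source: str
    hint: Optional[str]
    response: str


def render(ex: Example) -> str:
    """Render an example as text; the section markers are an assumed layout."""
    parts = [f"### Instruction:\n{ex.instruction}", f"### Input:\n{ex.source}"]
    if ex.hint is not None:
        parts.append(f"### Hint:\n{ex.hint}")
    parts.append(f"### Response:\n{ex.response}")
    return "\n\n".join(parts)


def _instruction(src_lang: str, tgt_lang: str) -> str:
    # The instruction names the language pair.
    return f"Translate the following sentences from {src_lang} to {tgt_lang}."


def translation_example(src_lang: str, tgt_lang: str,
                        source: str, reference: str) -> Example:
    """Translation instruction: a human-written reference, no hint."""
    return Example(_instruction(src_lang, tgt_lang), source, None, reference)


def contrastive_example(src_lang: str, tgt_lang: str, source: str,
                        preferred: str, dispreferred: str) -> Example:
    """Contrastive instruction: the hint marks which output is preferred.
    Showing both outputs in the response is an illustrative choice."""
    hint = "We prefer to translate it to"
    response = f"{preferred} rather than {dispreferred}"
    return Example(_instruction(src_lang, tgt_lang), source, hint, response)


def error_guided_example(src_lang: str, tgt_lang: str, source: str,
                         translation: str, errors: List[str]) -> Example:
    """Error-guided instruction: the hint names the annotated errors,
    or states that there are none."""
    label = ", ".join(errors) if errors else "no"
    hint = f"A translation with {label} errors could be"
    return Example(_instruction(src_lang, tgt_lang), source, hint, translation)
```

Under these assumptions, a request for a clean translation at inference time would call `error_guided_example` with an empty `errors` list and leave the response for the model to complete.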

Experimental Findings

Experiments were run on subsets of the Flores and WMT22 test sets. The results show that translation instruction substantially improves the translation performance of the vanilla LLM, and that error-guided instruction yields further gains, which underlines the value of learning from human-annotated low-quality translations. The paper also reports that parameter-efficient finetuning with low-rank adaptation (LoRA) can mitigate overfitting and gives better results on dominant languages, at the cost of less effective learning for lower-resource languages.
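To make the parameter-efficiency point concrete, here is a minimal pure-Python sketch of the general LoRA idea: the pretrained weight `W` stays frozen and only a low-rank update `B @ A` is trained. The matrix sizes, rank, and `alpha` scaling used here are illustrative assumptions, not the settings reported in the paper.

```python
from typing import List

Matrix = List[List[float]]
Vector = List[float]


def matvec(m: Matrix, x: Vector) -> Vector:
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(a * b for a, b in zip(row, x)) for row in m]


def lora_forward(w: Matrix, a: Matrix, b: Matrix,
                 x: Vector, alpha: float, rank: int) -> Vector:
    """Compute W x + (alpha / rank) * B (A x).

    w: frozen weight, shape (d_out, d_in)
    a: trainable down-projection, shape (rank, d_in)
    b: trainable up-projection, shape (d_out, rank)
    """
    base = matvec(w, x)
    delta = matvec(b, matvec(a, x))
    scale = alpha / rank
    return [u + scale * v for u, v in zip(base, delta)]


def full_param_count(d_out: int, d_in: int) -> int:
    """Trainable parameters when the whole weight matrix is finetuned."""
    return d_out * d_in


def lora_param_count(d_out: int, d_in: int, rank: int) -> int:
    """Trainable parameters when only A and B are finetuned."""
    return rank * (d_in + d_out)


# Illustrative sizes: one 4096 x 4096 projection with rank 8.
full = full_param_count(4096, 4096)      # 16,777,216
lora = lora_param_count(4096, 4096, 8)   # 65,536
print(f"trainable fraction: {lora / full:.4%}")
```

With `B` initialized to zeros, as is standard for LoRA, `lora_forward` returns exactly `W x` at the start of training, so the adapted model begins from the pretrained behavior. The small number of trainable parameters is one plausible reason such finetuning overfits less, and also why it may learn less from languages the base model saw rarely.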

Implications and Future Directions

ParroT shows that instruction finetuning on human translations and feedback is a practical way to improve LLM-based machine translation. The results suggest that guiding the model with hints can bring its behavior closer to human expectations, leading to more accurate and reliable translations during chat.

Moving forward, extending the instruction set to include more nuanced hints, such as specific entity alignments, is a plausible path to further refine the translation capability of LLMs in practical settings. Moreover, enhancing configurability and adaptability of the LoRA technique to balance parameter efficiency and translation quality could yield promising results in multilingual and multi-directional translation tasks.

Overall, this research provides a blueprint for improving the translation abilities of LLMs, using structured human translations and feedback to close gaps in translation accuracy during chat interactions.