Less is More: Selective Layer Finetuning with SubTuning

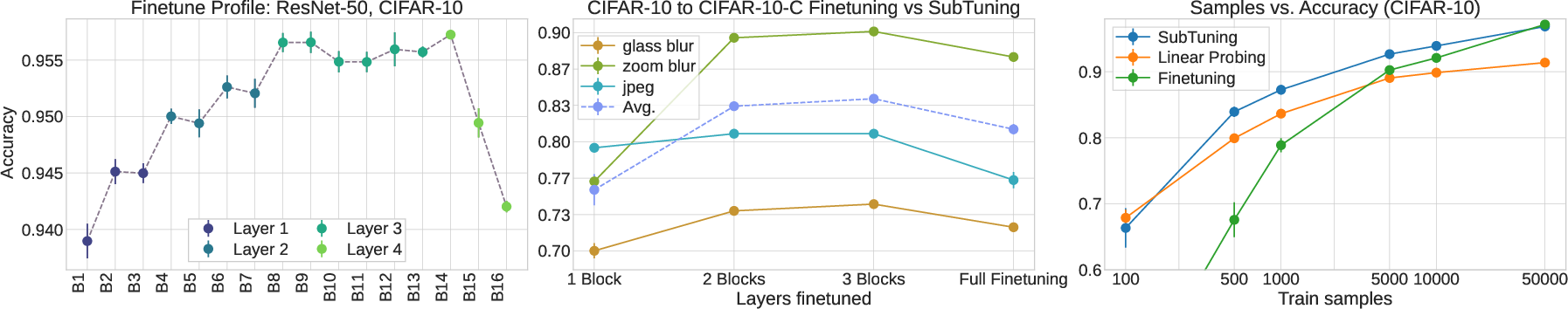

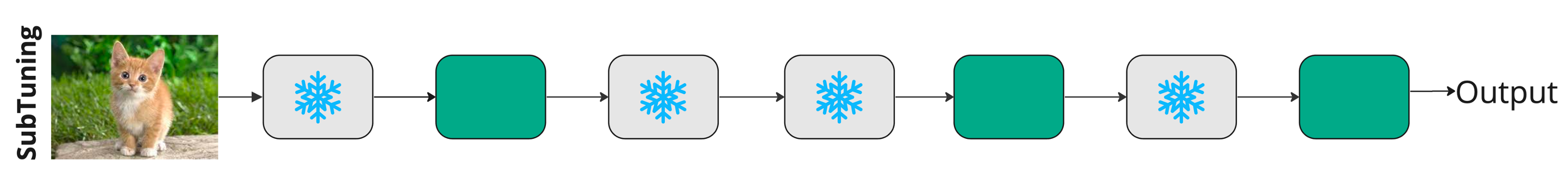

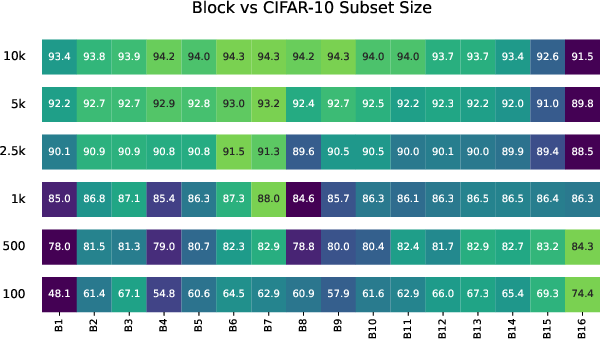

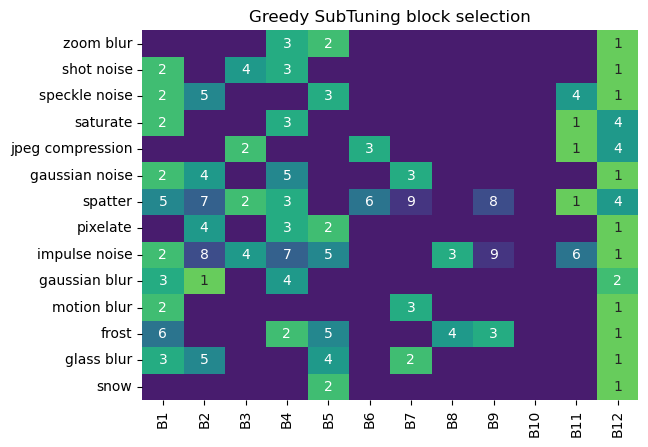

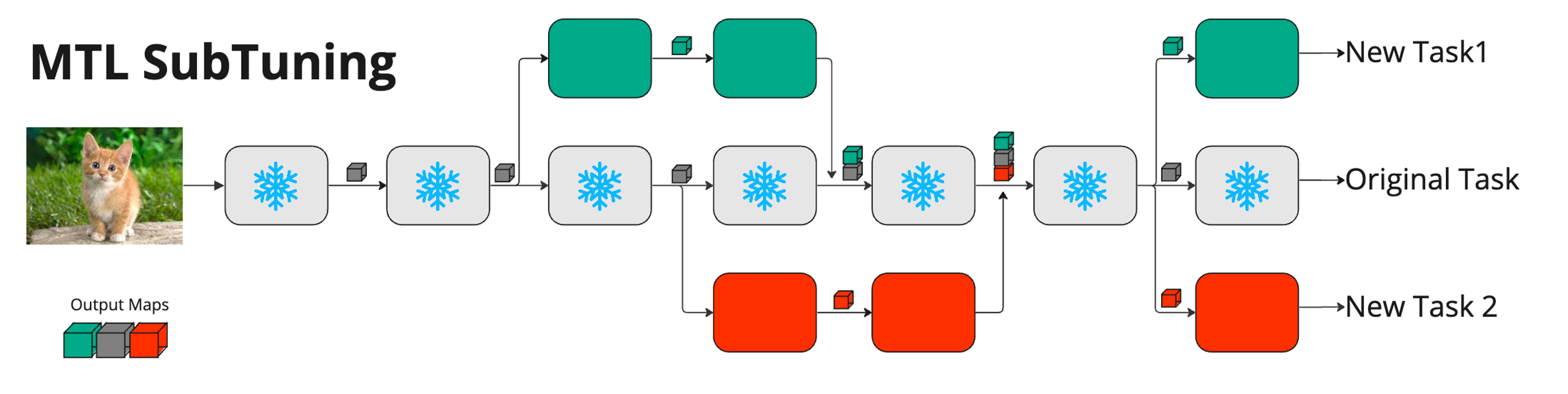

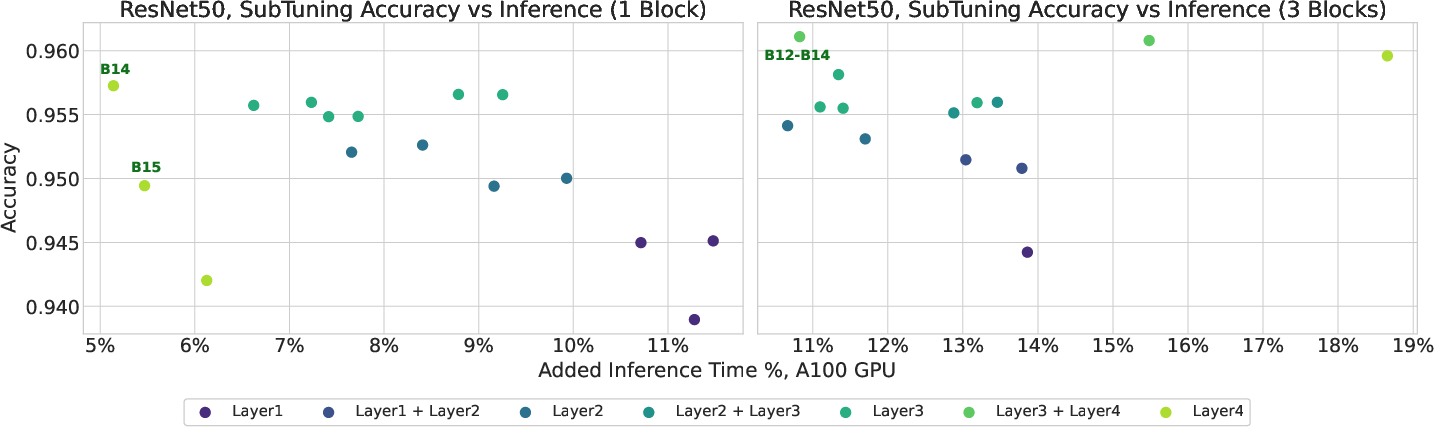

Abstract: Finetuning a pretrained model has become a standard approach for training neural networks on novel tasks, resulting in fast convergence and improved performance. In this work, we study an alternative finetuning method, where instead of finetuning all the weights of the network, we only train a carefully chosen subset of layers, keeping the rest of the weights frozen at their initial (pretrained) values. We demonstrate that *subset finetuning* (or SubTuning) often achieves accuracy comparable to full finetuning of the model, and even surpasses the performance of full finetuning when training data is scarce. Therefore, SubTuning allows deploying new tasks at minimal computational cost, while enjoying the benefits of finetuning the entire model. This yields a simple and effective method for multi-task learning, where different tasks do not interfere with one another, and yet share most of the resources at inference time. We demonstrate the efficiency of SubTuning across multiple tasks, using different network architectures and pretraining methods.
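To make the idea in the abstract concrete, here is a minimal toy sketch of training only a chosen subset of layers while the rest stay frozen at their pretrained values. It is not the authors' implementation: the "network" is a chain of three scalar weights, the helper names (`forward`, `mse`, `subtune`), the layer names, and the datasets are all hypothetical, and the subset of layers is hard-coded because the abstract does not say how it is chosen.

```python
from typing import Dict, List, Set, Tuple

Weights = Dict[str, float]
Dataset = List[Tuple[float, float]]

# Hypothetical toy network: three "layers", each a single scalar multiplier.
LAYER_ORDER = ["layer1", "layer2", "layer3"]


def forward(weights: Weights, x: float) -> float:
    """Apply the layers in order; each one scales its input."""
    for name in LAYER_ORDER:
        x = weights[name] * x
    return x


def mse(weights: Weights, data: Dataset) -> float:
    """Mean squared error of the toy network on (input, target) pairs."""
    return sum((forward(weights, x) - y) ** 2 for x, y in data) / len(data)


def subtune(pretrained: Weights, trainable: Set[str], data: Dataset,
            lr: float = 0.05, steps: int = 200) -> Weights:
    """Gradient descent on MSE that updates only the layers in `trainable`.

    Every other layer keeps its pretrained value, which is the core idea
    described in the abstract. The learning rate and step count are
    arbitrary choices for this toy problem.
    """
    weights = dict(pretrained)  # copy, so the pretrained weights stay intact
    for _ in range(steps):
        grads = {name: 0.0 for name in trainable}
        for x, y in data:
            err = forward(weights, x) - y
            for name in trainable:
                # d(prediction)/d(w_name) = x * product of all other weights
                others = 1.0
                for other in LAYER_ORDER:
                    if other != name:
                        others *= weights[other]
                grads[name] += 2.0 * err * x * others / len(data)
        for name, grad in grads.items():
            weights[name] -= lr * grad
    return weights


# Assumed "pretrained" weights and two made-up downstream tasks.
pretrained = {"layer1": 1.0, "layer2": 1.0, "layer3": 1.0}
task_a_data = [(1.0, 2.0), (2.0, 4.0), (-1.0, -2.0)]   # target: y = 2x
task_b_data = [(1.0, -1.0), (2.0, -2.0), (-1.0, 1.0)]  # target: y = -x

task_a = subtune(pretrained, {"layer2"}, task_a_data)
task_b = subtune(pretrained, {"layer3"}, task_b_data)

print("task A weights:", task_a, "loss:", mse(task_a, task_a_data))
print("task B weights:", task_b, "loss:", mse(task_b, task_b_data))
```

In this sketch each task changes only its own selected layer, so the two tasks cannot interfere with each other, and every untouched layer could be stored once and shared between them. This mirrors, in miniature, the multi-task setting the abstract describes.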

