Deep Subspace Encoders for Nonlinear System Identification

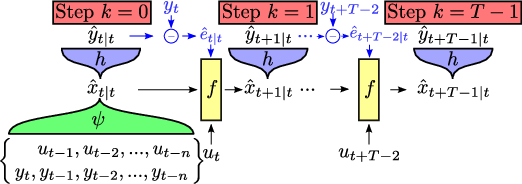

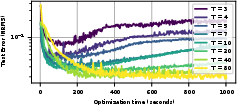

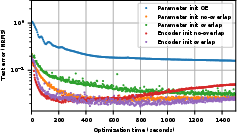

Abstract: Using Artificial Neural Networks (ANNs) for nonlinear system identification has proven to be a promising approach, but despite all recent research efforts, many practical and theoretical problems remain open. Specifically, noise handling and noise modeling, consistency, and reliable estimation under minimization of the prediction error are the most severe problems. The last of these comes with numerous practical challenges, such as a computational cost that explodes with the number of data samples and instabilities that occur during optimization. In this paper, we aim to overcome these issues by proposing a method that uses a truncated prediction loss and a subspace encoder for state estimation. The truncated prediction loss is computed by selecting multiple truncated subsections from the time series and averaging the prediction loss over them. To obtain a computationally efficient estimation method that minimizes the truncated prediction loss, a subspace encoder represented by an artificial neural network is introduced. This encoder aims to approximate the state reconstructability map of the estimated model, providing an initial state for each truncated subsection given past inputs and outputs. Through theoretical analysis, we show that, under mild conditions, the proposed method is locally consistent, increases optimization stability, and achieves increased data efficiency by allowing for overlap between the subsections. Lastly, we provide practical insights and user guidelines using a numerical example and state-of-the-art benchmark results.
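The truncated prediction loss described in the abstract can be illustrated with a minimal NumPy sketch. This is not the authors' implementation: the function `truncated_prediction_loss`, the helper `make_model`, and the parameters `T` (subsection length) and `n_past` (encoder window) are hypothetical names, and the encoder, state transition `f`, and output map `h` are toy scalar linear maps standing in for the neural networks the paper uses. The sketch only shows how an encoder-provided initial state and overlapping subsections combine into an averaged loss.

```python
import numpy as np


def truncated_prediction_loss(u, y, encoder, f, h, T, n_past, starts):
    """Average squared T-step prediction error over the given subsections.

    Each subsection starts at an index t in `starts`; its initial state is
    estimated by `encoder` from the n_past inputs and outputs preceding t.
    """
    total = 0.0
    for t in starts:
        # Initial state estimate from past inputs and outputs (assumed interface).
        x = encoder(u[t - n_past:t], y[t - n_past:t])
        for k in range(T):
            total += (h(x) - y[t + k]) ** 2  # error of the k-step-ahead output
            x = f(x, u[t + k])               # advance the model state
    return total / (len(starts) * T)


def make_model(a_hat, b_hat):
    """Toy scalar model x[k+1] = a_hat*x[k] + b_hat*u[k], y[k] = x[k] (illustrative only)."""
    f = lambda x, u_k: a_hat * x + b_hat * u_k
    h = lambda x: x
    # With y[k] = x[k], the state at time t follows from the last input/output pair.
    encoder = lambda u_past, y_past: a_hat * y_past[-1] + b_hat * u_past[-1]
    return encoder, f, h


# Noise-free data from the toy system with a = 0.8, b = 0.5.
rng = np.random.default_rng(0)
N, a, b = 200, 0.8, 0.5
u = rng.standard_normal(N)
y = np.empty(N)
x = 0.0
for k in range(N):
    y[k] = x
    x = a * x + b * u[k]

T, n_past = 10, 1
starts = range(n_past, N - T + 1)  # consecutive starts, so subsections overlap

loss_true = truncated_prediction_loss(u, y, *make_model(a, b), T, n_past, starts)
loss_wrong = truncated_prediction_loss(u, y, *make_model(0.6, b), T, n_past, starts)
print(loss_true, loss_wrong)
```

With the true parameters the loss vanishes on noise-free data, while a mismatched `a_hat` gives a strictly positive loss. In a learning setting the three maps would be parameterized jointly and the averaged loss minimized with a gradient-based optimizer over batches of subsections.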

