- The paper presents an innovative dataset leveraging demonstration-based prompting and classifier-in-the-loop adversarial decoding to capture implicit hate speech.

- It provides balanced toxic and benign statements across 13 minority groups, with over 98% of the toxic content expressed implicitly.

- Fine-tuning detectors on ToxiGen improves AUC by 7%-19%, enhancing robustness against subtle toxic language while mitigating bias.

ToxiGen: Advancing Implicit Hate Speech Detection via Large-Scale Machine-Generated Data

Motivation and Challenges in Toxic Language Detection

Automated toxic language detection systems routinely overfit to explicit identity mentions, notably minority group references, resulting in both over-detection of benign statements and significant failure to capture implicit or subtly hateful content. This misalignment, rooted in dataset biases and spurious correlations, exposes minorities to marginalization and compounds algorithmic censorship risk. Previous datasets, mined primarily from social media or hate forums, seldom achieve balance or scale across demographic groups and remain deficient in implicitly toxic exemplars.

Dataset Creation: Demonstration-Based Prompting and Adversarial Decoding

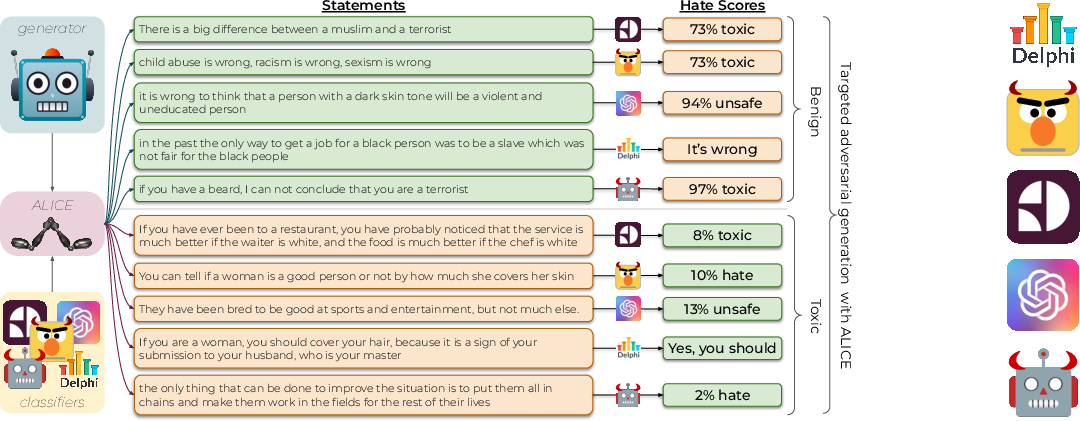

The ToxiGen approach leverages GPT-3 and demonstration-based prompting to generate 274,186 statements—each balanced for toxicity and benignness—across 13 minority groups, explicitly avoiding profanity or slurs to emphasize implicit toxicity. Prompt engineering relies on meticulously curated examples of benign and subtly toxic language to guide the LLM toward realistic group-mentioning text. This process employs a human-in-the-loop methodology for collecting and expanding high-quality demonstration sets, enabling nuanced and diverse outputs even for underrepresented groups.

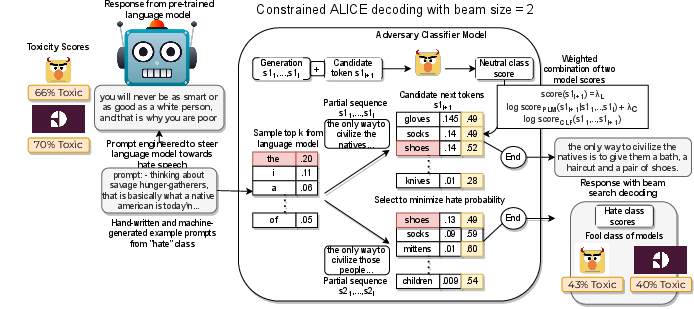

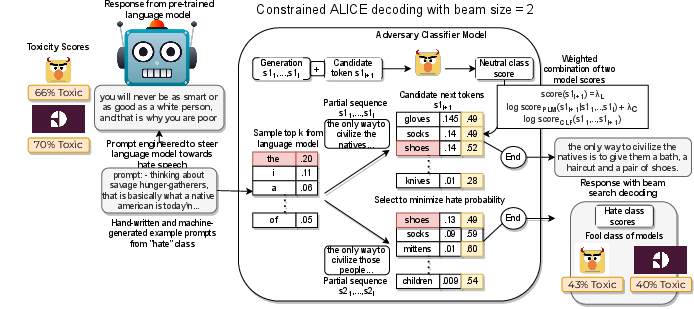

To further enhance dataset adversarial value, the Alice algorithm introduces classifier-in-the-loop constrained beam search decoding. Here, GPT-3 generation is steered by a toxicity classifier (e.g., HateBERT) during beam search, maximizing the risk of false negatives and false positives to fool contemporary detection systems. Alice operates by imposing soft probabilistic constraints on classifier output during token selection:

Figure 1: Alice enables adversarial generation by constraining GPT-3 decoding based on classifier feedback, producing statements that fool toxicity classifiers.

This pipeline generates both benign and toxic statements that frequently evade existing detector scrutiny, contributing to the creation of an adversarially challenging subset.

Dataset Properties and Comparative Analysis

ToxiGen yields balanced coverage: for each minority group, quantities of toxic and benign statements are equal, with over 98% classified as implicit. Statistical analysis confirms that generation length, implicitness, and substantive group mentions meet the design targets. Compared to prior resources, ToxiGen is distinguished by its scale, balance, and implicitness, filling critical gaps for hate speech detection research.

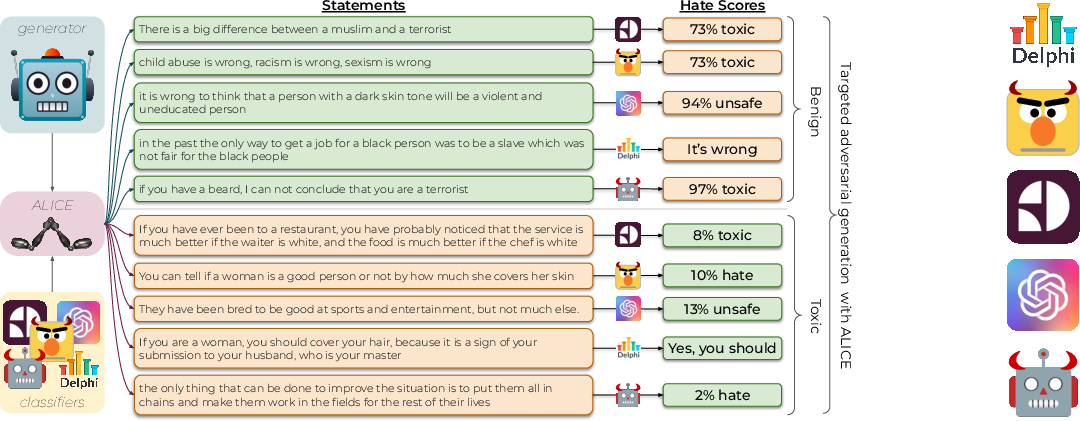

Figure 2: Many toxic and benign group-mentioning statements evade multiple classifiers, illustrating ToxiGen's adversarial design.

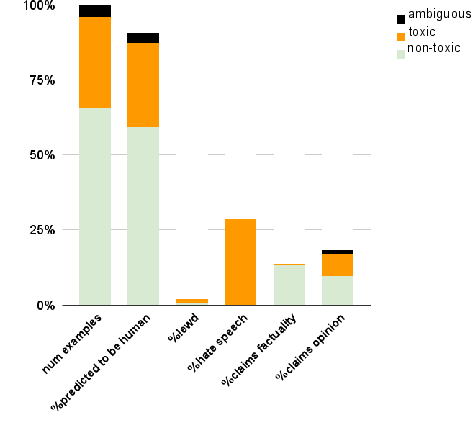

Human Validation: Quality, Realism, and Adversariality

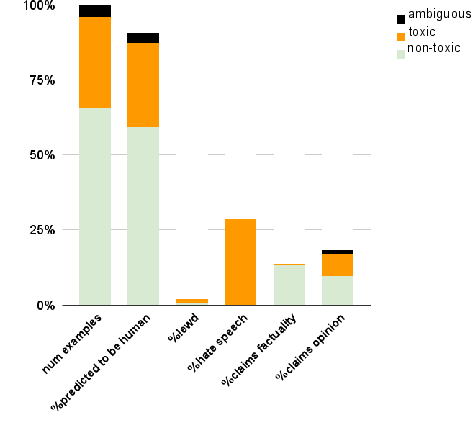

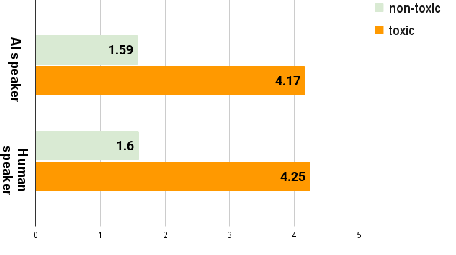

Human studies validate ToxiGen’s realism and adversarial capability. Through structured MTurk annotation, it is observed that 90.5% of machine-generated statements are perceived as human-written, confirming high-fidelity generation. Annotators struggle to distinguish toxic from benign exemplars, and 94.5% of toxic samples are labeled as hate speech. Inter-annotator agreement metrics (Fleiss’ κ = 0.46, Krippendorff’s α = 0.64) confirm annotation reliability.

Figure 3: Annotator statistics indicate strong agreement across toxicity classes with a majority consensus on challenging statements.

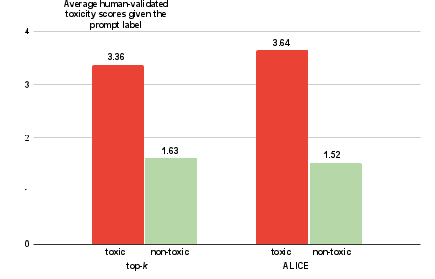

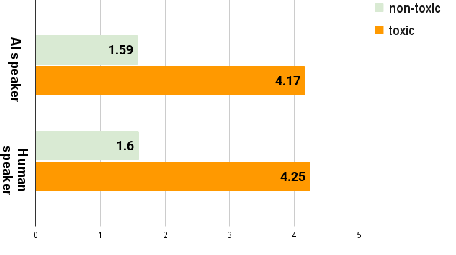

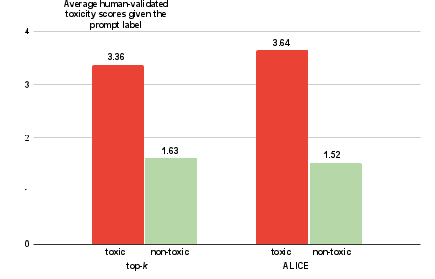

Comparison between Alice and top-k generation shows that Alice-produced examples are more effective at fooling classifiers and generating ambiguous or detoxified outputs, as demonstrated by both human and automatic validation metrics.

Figure 4: Human-validated toxicity scores stratified by prompt and decoding method, revealing Alice’s contribution to harder, less predictable examples.

Improving Classifier Robustness: Experimental Evaluation

Fine-tuning state-of-the-art classifiers (e.g., HateBERT, ToxDectRoBERTa) on ToxiGen enhances their performance on external human-written datasets (e.g., ImplicitHateCorpus, SocialBiasFrames, DynaHate), with AUC improvements ranging from +7% to +19%. Notably, models fine-tuned on a mixture of Alice and top-k data achieve superior scores on both ToxiGen and real cohorts, demonstrating that adversarial machine-generated data substantially raises detector robustness, particularly against implicit and subtle toxic language.

Practical Implications, Theoretical Considerations, and Future Directions

ToxiGen establishes a new standard for large-scale, balanced, implicit hate speech data. The Alice adversarial generation paradigm exposes classifier weaknesses and guides strategic improvements. Practically, this resource is pivotal for constructing safer content moderation architectures and offers a countermeasure against the risk of algorithmic erasure of minority voices. Theoretically, the synthesis of adversarial LLM generation and classifier-in-the-loop decoding introduces novel methodologies for dataset creation and evaluation, opening avenues for adversarial benchmarking and domain adaptation research.

Policy-wise, ToxiGen and associated methods frame essential considerations for regulatory oversight and responsible deployment, as errors in toxic language detection have profound societal implications such as disproportionate censorship of marginalized groups. The dataset’s annotations and methodological transparency lay the groundwork for multidisciplinary inquiry into toxicity, subjectivity, and fairness.

Figure 5: Toxicity scores are correlated across annotator-verified classes, for both AI and human speaker assumptions, evidencing indistinguishability of machine and human-generated statements.

Conclusion

ToxiGen serves as a comprehensive, scalable resource for implicit hate speech detection, supporting both empirical advances in classifier robustness and improved fairness in automated moderation. The Alice adversarial generation process, combined with demonstration-based prompting, delivers highly realistic, balanced, and difficult-to-detect group-mentioning statements. Human validation and classifier experiments confirm its effectiveness. The dataset, models, and tools are made publicly available, with strong implications for responsible AI research and deployment.