- The paper applies a continuous 6D rotation matrix representation to head pose estimation, avoiding the discontinuities of Euler angles and quaternions.

- It employs a geodesic loss function aligned with the SO(3) geometry, leading to improved convergence and performance gains of up to 20% on benchmarks.

- The method simplifies network architecture by eliminating the need for landmark detection and discretization, demonstrating robust results with both RepVGG and ResNet backbones.

6D Rotation Representation for Unconstrained Head Pose Estimation

Introduction

The research presented in "6D Rotation Representation For Unconstrained Head Pose Estimation" (2202.12555) addresses a fundamental challenge in facial analysis: estimating head pose from a single image. The task matters for applications such as driver assistance, augmented reality, and human-robot interaction, and existing methods fall into two groups, each with its own weakness. Landmark-based methods depend on precise landmark localization, which often fails under occlusion or extreme rotations. Landmark-free approaches regress the pose directly with deep networks, but are frequently limited by suboptimal rotation representations such as Euler angles and quaternions.

This paper proposes regressing a continuous 6D rotation matrix representation directly to improve accuracy. Prior methods suffer from the discontinuities inherent in Euler angles and quaternions; the 6D representation avoids them and yields robust head orientation predictions across the full rotation range.

Methodology

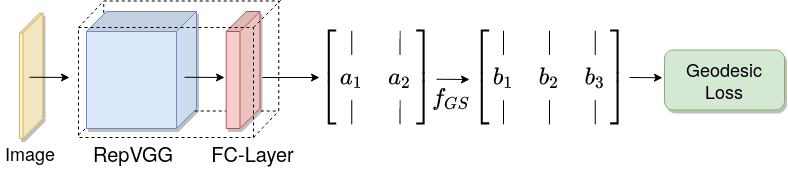

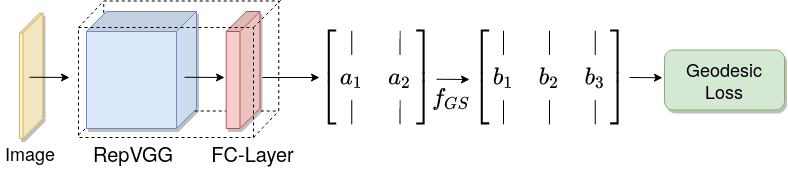

The central contribution is the combination of a 6D rotation matrix representation with a geodesic distance-based loss function, which together address the limitations of traditional rotation representations. Because the network regresses the rotation directly, the architecture is simpler: rotations no longer need to be discretized into bins and treated as a classification problem.

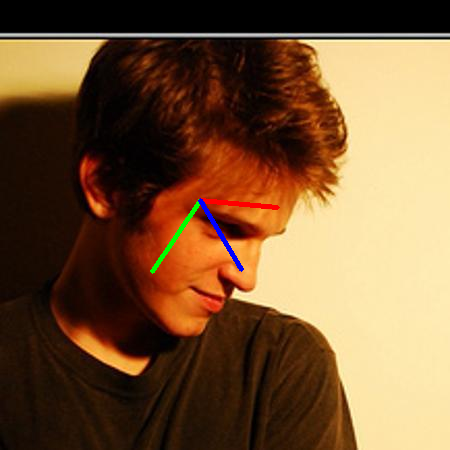

Figure 1: Overview of the proposed method.

Continuous 6D Rotation Matrix Representation

The method regresses only a subset of the rotation matrix: the nine-parameter matrix is reduced to six parameters by omitting the last column vector. This strategy, inspired by Zhou et al., simplifies the regression task. The six outputs are mapped back to a full 3x3 matrix by a Gram-Schmidt process built into the representation, which orthonormalizes the two predicted columns and recovers the third, so the result always satisfies the orthogonality constraint.
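The conversion from six numbers back to a rotation matrix can be sketched in a few lines. The version below is a minimal, framework-free illustration in plain Python, not the authors' implementation; the function name `rotation_from_6d`, the helper names, and the ordering of the six inputs (first column, then second column) are assumptions made for this example.

```python
import math


def normalize(v):
    """Scale a 3-vector to unit length."""
    norm = math.sqrt(sum(x * x for x in v))
    return [x / norm for x in v]


def dot(a, b):
    """Dot product of two 3-vectors."""
    return sum(x * y for x, y in zip(a, b))


def cross(a, b):
    """Cross product of two 3-vectors."""
    return [
        a[1] * b[2] - a[2] * b[1],
        a[2] * b[0] - a[0] * b[2],
        a[0] * b[1] - a[1] * b[0],
    ]


def rotation_from_6d(six):
    """Map six raw values to a 3x3 rotation matrix (list of rows).

    Assumed layout (hypothetical): six[:3] is the unnormalized first
    column a1, six[3:] is the unnormalized second column a2.
    """
    a1, a2 = six[:3], six[3:]
    # Gram-Schmidt step 1: the first column is a1 scaled to unit length.
    b1 = normalize(a1)
    # Gram-Schmidt step 2: remove the part of a2 that lies along b1,
    # then normalize what is left.
    proj = dot(b1, a2)
    b2 = normalize([x - proj * y for x, y in zip(a2, b1)])
    # The omitted third column is the cross product of the first two,
    # which makes the matrix orthonormal with determinant +1.
    b3 = cross(b1, b2)
    # Assemble rows so that b1, b2, b3 are the columns.
    return [[b1[i], b2[i], b3[i]] for i in range(3)]


# Example: arbitrary network-like outputs still give a valid rotation.
R = rotation_from_6d([1.0, 2.0, 0.5, -0.3, 0.4, 2.0])
```

Any six values work as input as long as the two input vectors are nonzero and not parallel, which is why the network can regress them without extra constraints.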

Geodesic Loss Function

The geodesic loss replaces the commonly used ℓ2 norm so that the training objective reflects the geometry of the SO(3) manifold. It measures the length of the shortest path between the predicted and ground-truth rotations, that is, the angle of the single rotation that takes one to the other, which penalizes errors more meaningfully during training. This results in improved convergence and accuracy in predicting head orientations.
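For two rotation matrices, the geodesic distance on SO(3) is the angle of the relative rotation, which can be read off its trace as arccos((tr(R_pred R_true^T) - 1) / 2). The sketch below computes it in plain Python for a single pair of matrices; the names `geodesic_distance` and `rot_z` are hypothetical, and the clamping of the cosine is an added numerical safeguard rather than something the summary specifies.

```python
import math


def geodesic_distance(r_pred, r_true):
    """Angle in radians between two 3x3 rotation matrices (lists of rows)."""
    # trace(r_pred @ r_true^T) equals the sum of elementwise products.
    trace = sum(r_pred[i][j] * r_true[i][j] for i in range(3) for j in range(3))
    cos_angle = (trace - 1.0) / 2.0
    # Clamp to [-1, 1] so rounding error cannot push acos out of its domain.
    cos_angle = max(-1.0, min(1.0, cos_angle))
    return math.acos(cos_angle)


def rot_z(degrees):
    """Rotation about the z-axis, used here only to build test inputs."""
    t = math.radians(degrees)
    c, s = math.cos(t), math.sin(t)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


# Two rotations 45 degrees apart.
d_plain = math.degrees(geodesic_distance(rot_z(30), rot_z(75)))

# 179 and -179 degrees differ by 358 as raw angles, but the rotations
# themselves are only 2 degrees apart, and the geodesic distance says so.
d_wrap = math.degrees(geodesic_distance(rot_z(179), rot_z(-179)))
```

The second case illustrates why a distance defined on the rotations themselves is better behaved than an ℓ2 penalty on angle values near the wrap-around point. In a training setting the same expression would be averaged over a batch, and the clamp is often tightened slightly because the gradient of arccos grows without bound as its argument approaches ±1.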

Experimental Results

The model is trained on the 300W-LP dataset and tested on the AFLW2000 and BIWI datasets. It outperforms state-of-the-art methods by up to 20% on AFLW2000, with notable improvements on all three rotation angles (yaw, pitch, and roll).

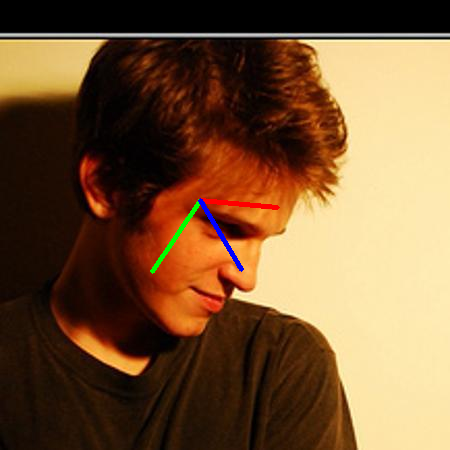

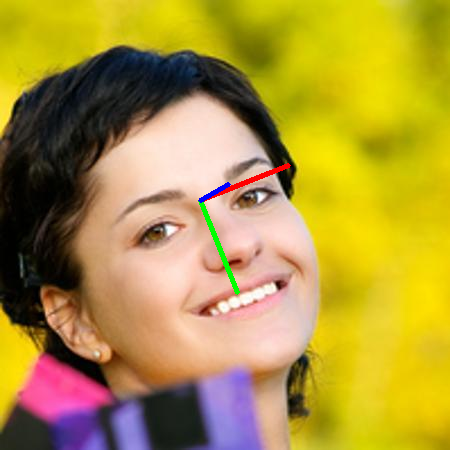

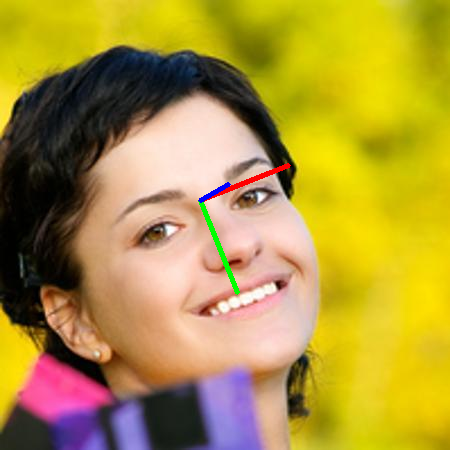

Figure 2: Example images with converted Euler angle visualization from the AFLW2000 dataset.

The research further explores the impact of different training conditions, network backbones, and loss functions. Results indicate that the geodesic loss consistently outperforms the ℓ2 norm across diverse datasets, corroborating its efficacy. The RepVGG backbone was also shown to yield superior outcomes compared to the traditional ResNet models.

Conclusion

This study offers a more reliable and accurate method for head orientation prediction. By combining a continuous 6D rotation matrix representation with a geodesic loss function, it addresses the discontinuities and limitations of previous representations. These choices improve benchmark performance and also enhance the network's generalization across different data sources.

The implications of this work extend to various AI and machine learning applications, particularly those necessitating robust and precise spatial orientation tracking. Future research could explore the integration of these methodologies with larger datasets encompassing full rotation samples, further expanding the potential applications and impact of this approach.