- The paper demonstrates that synthetic lemon images generated by a Conditional GAN raise CNN classification accuracy from 83.77% to 88.75%.

- It employs a hybrid methodology of fine-tuning, transfer learning, and data augmentation to mitigate data scarcity in agricultural imaging.

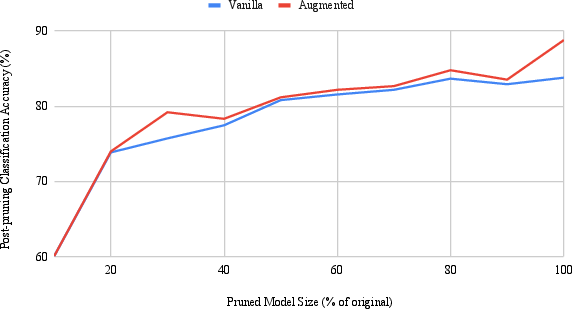

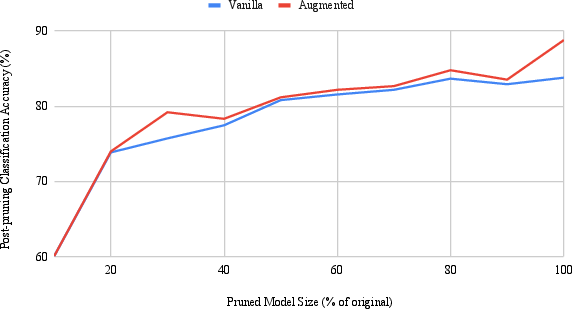

- The approach remains effective under model pruning, retaining 81.16% accuracy at 50% of the original model size, which supports its suitability for practical deployment.

Fruit Quality and Defect Image Classification with Conditional GAN Data Augmentation

Introduction

The paper "Fruit Quality and Defect Image Classification with Conditional GAN Data Augmentation" (2104.05647) explores the use of Conditional Generative Adversarial Networks (Conditional GANs) to improve fruit quality classification through data augmentation. The research addresses data scarcity, a significant challenge for machine learning and especially for agriculture, where large, representative datasets are difficult to obtain. The Conditional GAN generates synthetic images that mimic real fruit in both healthy and defective conditions, with the aim of improving the generalization and robustness of the image classifier.

Methodology

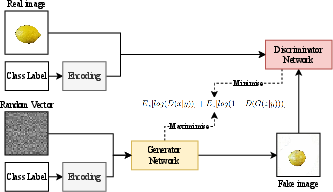

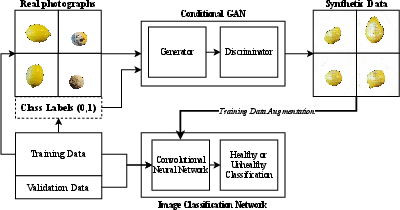

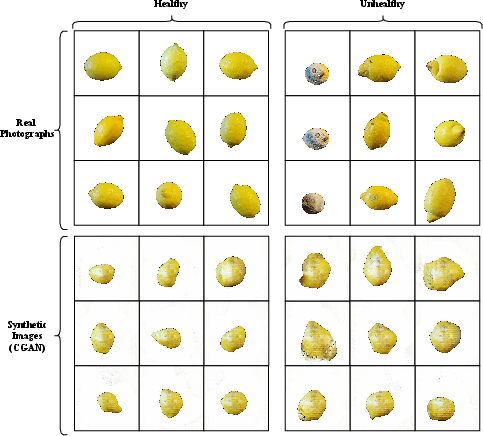

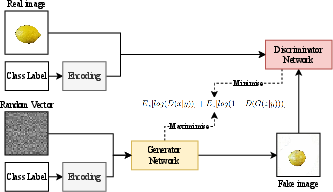

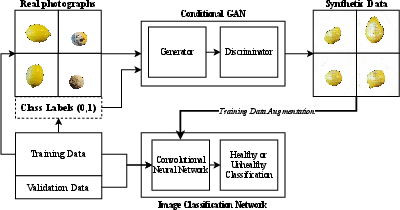

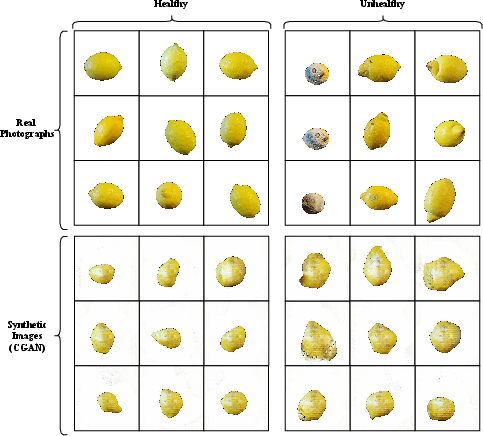

The study proposes a machine learning pipeline that combines fine-tuning, transfer learning, and Conditional GAN data augmentation to classify fruit images by quality. A Conditional GAN trained on 2,690 lemon images generates realistic images of both healthy lemons and lemons with defects such as mould and gangrene. These synthetic images are then added to the training data of a Convolutional Neural Network (CNN).
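What makes the GAN "conditional" is that both the generator and the discriminator receive the class label, so a single generator can be asked for either healthy or unhealthy lemons. The sketch below illustrates the common approach of concatenating a one-hot label with the noise vector; the paper's exact conditioning mechanism, the latent size of 100, and all function names here are assumptions for illustration.

```python
from __future__ import annotations

import random
from typing import Optional

# The two classes from the paper; the order (one-hot layout) is an assumption.
CLASSES = ("healthy", "unhealthy")


def one_hot(label: str, classes: tuple[str, ...] = CLASSES) -> list[float]:
    """Encode a class name as a one-hot vector y."""
    return [1.0 if c == label else 0.0 for c in classes]


def generator_input(label: str, latent_dim: int = 100,
                    rng: Optional[random.Random] = None) -> list[float]:
    """Build the generator input [z ; y]: Gaussian noise z followed by label y.

    Changing y is how the same generator is asked for a healthy or an
    unhealthy lemon. latent_dim=100 is an assumed, commonly used size.
    """
    rng = rng or random.Random()
    z = [rng.gauss(0.0, 1.0) for _ in range(latent_dim)]
    return z + one_hot(label)


def discriminator_input(pixels: list[float], label: str) -> list[float]:
    """The discriminator also sees y, so it judges realism for that class."""
    return pixels + one_hot(label)


x = generator_input("unhealthy", latent_dim=4, rng=random.Random(0))
print(len(x), x[-2:])  # 6 [0.0, 1.0]
```

In a real network the flat vectors would be tensors and the label is often passed through a learned embedding instead of a raw one-hot code, but the principle of feeding the label to both networks is the same.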

Figure 1: Generalisation of a Conditional GAN topology.

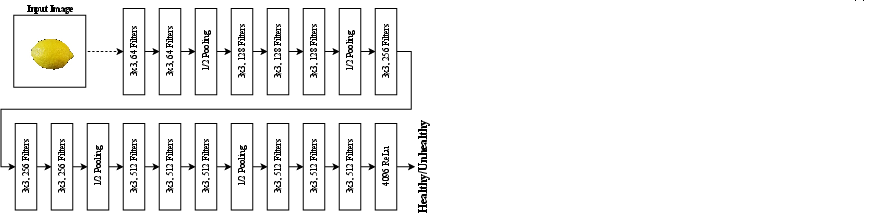

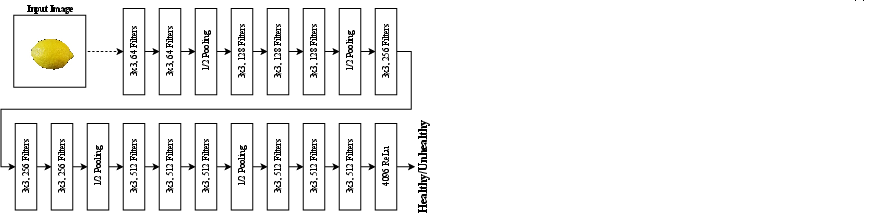

The CNN is built on the VGG16 architecture with an added fully connected interpretation layer of 4096 neurons. Trained on real photographs alone, this model reaches 83.77% accuracy; augmenting the training set with synthetic images generated by the Conditional GAN raises accuracy to 88.75%.
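Reading the two reported accuracies as error rates makes the size of the gain easier to judge. Working only from the figures above:

```latex
\begin{aligned}
\Delta_{\text{acc}} &= 88.75\% - 83.77\% = 4.98 \text{ percentage points} \\
e_{\text{real}} &= 100\% - 83.77\% = 16.23\%, \qquad e_{\text{aug}} = 100\% - 88.75\% = 11.25\% \\
\frac{e_{\text{real}} - e_{\text{aug}}}{e_{\text{real}}} &= \frac{4.98}{16.23} \approx 0.307
\end{aligned}
```

In other words, augmentation removes roughly 31% of the baseline model's misclassifications.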

Figure 2: A general overview of our proposed approach for data augmentation towards fruit quality image classification.
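The augmentation step of this pipeline can be sketched as merging real photographs with labelled synthetic samples before CNN training. This is a minimal sketch under stated assumptions: `generate` is a hypothetical stand-in for sampling the trained Conditional GAN, and the per-class synthetic counts are free parameters, not values taken from the paper.

```python
from __future__ import annotations

import random
from typing import Callable, Dict, List, Tuple

Sample = Tuple[object, str]  # (image, class label); image format left abstract


def build_augmented_set(real: List[Sample], generate: Callable[[str], object],
                        synthetic_per_class: Dict[str, int],
                        seed: int = 0) -> List[Sample]:
    """Combine real photographs with class-conditioned synthetic images.

    `generate(label)` is a hypothetical stand-in for sampling the trained
    Conditional GAN with that label. Because the GAN is conditioned, every
    synthetic image comes with a known label and needs no manual annotation.
    """
    synthetic = [(generate(label), label)
                 for label, count in synthetic_per_class.items()
                 for _ in range(count)]
    combined = real + synthetic            # new list; `real` is left unchanged
    random.Random(seed).shuffle(combined)  # interleave real and synthetic samples
    return combined


real = [("photo_001", "healthy"), ("photo_002", "unhealthy")]
train = build_augmented_set(real, lambda lbl: f"gan_{lbl}",
                            {"healthy": 2, "unhealthy": 3})
print(len(train))  # 7
```

Keeping the shuffle seeded makes the training order reproducible across runs, which helps when comparing a vanilla model against an augmented one.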

Results

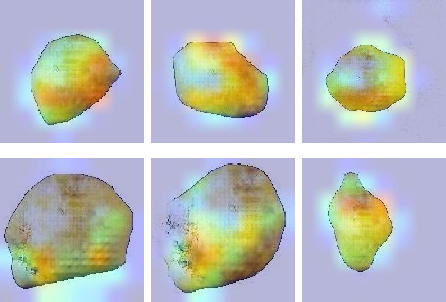

Augmenting the training data with Conditional GAN images raises classification accuracy from 83.77% to 88.75%, a gain of 4.98 percentage points. Grad-CAM analysis with a VGG16 model trained only on real photographs (Figure 5) shows that the synthetic images contain features this model can distinguish and classify. Model pruning experiments further show that the augmented model retains 81.16% classification accuracy even when reduced to 50% of its original size.
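Figure 6 compares models pruned with a polynomial decay schedule. The sketch below pairs such a schedule with simple magnitude pruning. The schedule formula follows the form used by TensorFlow Model Optimization's `PolynomialDecay` (default `power=3`); whether the paper used that library, these schedule parameters, or per-layer pruning is an assumption, as is reading "50% of its original size" as `final_sparsity=0.5`.

```python
from __future__ import annotations


def polynomial_decay_sparsity(step: int, initial_sparsity: float,
                              final_sparsity: float, begin_step: int,
                              end_step: int, power: int = 3) -> float:
    """Target fraction of zeroed weights at a training step.

    Sparsity rises quickly at first and levels off as it nears final_sparsity.
    """
    if step <= begin_step:
        return initial_sparsity
    if step >= end_step:
        return final_sparsity
    progress = (step - begin_step) / (end_step - begin_step)
    return final_sparsity + (initial_sparsity - final_sparsity) * (1 - progress) ** power


def magnitude_prune(weights: list[float], sparsity: float) -> list[float]:
    """Zero the smallest-magnitude fraction `sparsity` of the weights."""
    n_prune = int(round(sparsity * len(weights)))
    if n_prune == 0:
        return list(weights)
    # Indices sorted by absolute value; the first n_prune are removed.
    order = sorted(range(len(weights)), key=lambda i: abs(weights[i]))
    to_zero = set(order[:n_prune])
    return [0.0 if i in to_zero else w for i, w in enumerate(weights)]


schedule = [polynomial_decay_sparsity(s, 0.0, 0.5, 0, 1000) for s in (0, 250, 500, 1000)]
print(schedule)  # [0.0, 0.2890625, 0.4375, 0.5]
print(magnitude_prune([0.1, -2.0, 0.05, 3.0], 0.5))  # [0.0, -2.0, 0.0, 3.0]
```

Ramping sparsity up gradually, rather than removing half the weights at once, gives the network training steps in which to recover from each round of pruning.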

Figure 3: Overview of the VGG16 topology and custom interpretation and output layer which is used for binary image classification.
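The 4096-neuron interpretation layer in this topology holds most of the model's parameters, which helps explain why pruning can shrink the model substantially. The count below is a back-of-the-envelope sketch assuming a 224x224 RGB input, the standard VGG16 convolutional base, a flattened feature map feeding the 4096-unit layer, and a single sigmoid output unit; the paper's exact input size and output encoding are assumptions here.

```python
from __future__ import annotations

# VGG16 convolutional base: (in_channels, out_channels) of each 3x3 conv layer.
VGG16_CONV = [
    (3, 64), (64, 64),
    (64, 128), (128, 128),
    (128, 256), (256, 256), (256, 256),
    (256, 512), (512, 512), (512, 512),
    (512, 512), (512, 512), (512, 512),
]


def conv_params(c_in: int, c_out: int, k: int = 3) -> int:
    """Weights plus biases of one k x k convolution."""
    return k * k * c_in * c_out + c_out


def dense_params(n_in: int, n_out: int) -> int:
    """Weights plus biases of one fully connected layer."""
    return n_in * n_out + n_out


def head_breakdown(input_side: int = 224, hidden: int = 4096,
                   outputs: int = 1) -> dict[str, int]:
    base = sum(conv_params(i, o) for i, o in VGG16_CONV)
    side = input_side // 32      # five 2x2 max-pools halve the side five times
    flat = side * side * 512     # flattened feature map fed to the dense layer
    interp = dense_params(flat, hidden)
    out = dense_params(hidden, outputs)
    return {"conv_base": base, "interpretation": interp, "output": out,
            "total": base + interp + out}


counts = head_breakdown()
print(counts)
```

Under these assumptions the interpretation layer accounts for roughly 87% of all weights, so it is the natural first target when pruning toward half the original size.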

The Conditional GAN approach not only addresses the problem of data scarcity but also enhances the CNN's ability to generalize, providing a practical solution for real-world applications in smart agriculture. Figures in the paper illustrate the GAN's progress over training and the quality of the synthetic images it produces.

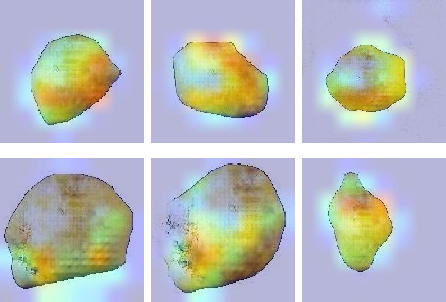

Figure 4: Examples of both real photographs and Conditional GAN outputs (Epoch 2000) for the two classes of healthy and unhealthy lemons.

Discussion and Implications

The research argues that Conditional GANs can generate realistic synthetic data that significantly improves fruit image classification by supplying additional training examples. This advancement has both practical and theoretical implications. Practically, it can lead to automated sorting systems in the agricultural industry, improving efficiency and reducing labor costs. Theoretically, the study paves the way for further exploration of GANs for other fine-grained image classification tasks.

Figure 5: Grad-CAM analysis of six outputs from the Conditional GAN. Top row shows images belonging to the "healthy" class and bottom row shows images belonging to the "unhealthy" class. This Grad-CAM VGG16 CNN is trained only on real photographs, and no training has been performed on synthetic images.
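The heatmap step of Grad-CAM can be sketched as follows: each channel of the last convolutional layer is weighted by the spatial average of its gradient, the weighted maps are summed, and a ReLU keeps only regions that raise the class score. This sketch assumes the activations and the gradients of the class score with respect to them have already been extracted from a deep-learning framework; that extraction, and upsampling the heatmap to image size, are omitted, and the names are illustrative.

```python
from __future__ import annotations

from typing import List

Grid = List[List[float]]


def grad_cam(activations: List[Grid], gradients: List[Grid]) -> Grid:
    """Combine feature maps A^k with their gradients dy^c/dA^k into a heatmap.

    activations[k][i][j] is channel k of the last conv layer at (i, j);
    gradients has the same shape and holds d(class score)/d(activation).
    """
    h, w = len(activations[0]), len(activations[0][0])
    # alpha_k: global-average-pool each channel's gradient map.
    alphas = [sum(sum(row) for row in g) / (h * w) for g in gradients]
    heatmap = [[0.0] * w for _ in range(h)]
    for alpha, a in zip(alphas, activations):
        for i in range(h):
            for j in range(w):
                heatmap[i][j] += alpha * a[i][j]
    # ReLU keeps only regions that push the class score up.
    return [[max(0.0, v) for v in row] for row in heatmap]


acts = [[[1, 0], [0, 1]], [[0, 2], [2, 0]]]
grads = [[[1.0, 1.0], [1.0, 1.0]], [[-0.5, -0.5], [-0.5, -0.5]]]
print(grad_cam(acts, grads))  # [[1.0, 0.0], [0.0, 1.0]]
```

Because the classifier behind Figure 5 never saw synthetic images, heatmaps that highlight the fruit rather than the background are evidence that the generated images carry the same cues as real photographs.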

Conclusion

The application of Conditional GANs for data augmentation presents a promising direction for overcoming data scarcity in fruit quality classification, thereby enhancing the performance and applicability of CNNs in agricultural automation. The research demonstrates how integrating synthetic data can lead to substantial improvements in model accuracy and robustness, supporting its feasibility for real-world deployment. Future work should explore fine-tuning GAN models across multiple stages to optimize synthetic image quality and investigate additional pruning techniques to further improve model efficiency.

Figure 6: Comparison of the polynomial decay pruning accuracies for both vanilla and data augmentation models.