- The paper presents a multi-modal approach that combines CNN-based audio-visual features with EEG responses to improve recognition of the emotions that advertisements evoke.

- CNN-extracted features outperform handcrafted audio-visual features, with the largest gains in valence recognition.

- EEG-based, user-centric affect recognition, further improved by multi-task learning, outperforms content-based prediction, and EEG-informed ad insertion achieves the most favorable results in a user study.

"Recognition of Advertisement Emotions with Application to Computational Advertising" (1904.01778)

Introduction

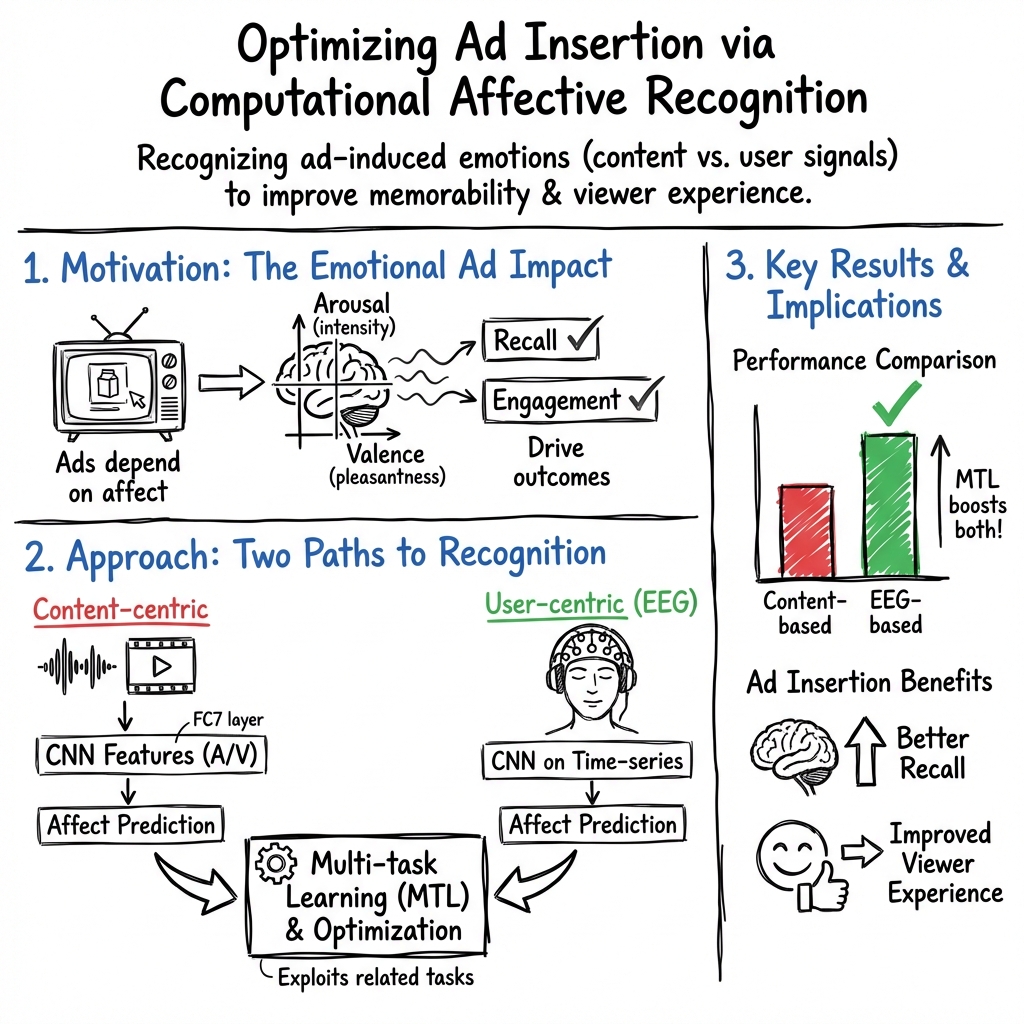

The paper "Recognition of Advertisement Emotions with Application to Computational Advertising" addresses a gap in affective computing: recognizing the emotions conveyed by advertisements (ads) from multiple modalities. The study compares content-centric and user-centric approaches to affect recognition (AR) and evaluates how well each supports computational advertising. It uses convolutional neural networks (CNNs) to model ad content and electroencephalogram (EEG) responses to model viewers, with the aim of improving ad insertion in video streaming services.

Affective Ad Dataset Compilation

The authors compiled a dataset of 100 diverse advertisements, curated to evoke consistent emotional responses across viewers. Affective ratings were collected from expert and novice annotators to confirm that the ads induce emotions consistently. Each ad is characterized along the valence (val) and arousal (asl) dimensions of the circumplex model of affect.
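To make the val/asl labeling concrete, here is a minimal Python sketch of how averaged annotator ratings could be binarized into high/low valence and arousal, which places each ad in one quadrant of the circumplex model. The 1-9 rating scale, the midpoint threshold, and the function names `circumplex_labels` and `majority_agreement` are hypothetical assumptions for illustration; the paper's actual rating scale and labeling rule may differ.

```python
from statistics import mean

# Assumption for illustration only: annotators rate valence and arousal on a
# 1-9 scale, and the scale midpoint (5) separates "low" from "high".
MIDPOINT = 5.0


def circumplex_labels(val_ratings, asl_ratings, midpoint=MIDPOINT):
    """Average one ad's annotator ratings and binarize each dimension.

    Returns (valence_label, arousal_label), each "high" or "low"; a mean
    exactly at the midpoint is treated as "low".
    """
    val_label = "high" if mean(val_ratings) > midpoint else "low"
    asl_label = "high" if mean(asl_ratings) > midpoint else "low"
    return val_label, asl_label


def majority_agreement(ratings, midpoint=MIDPOINT):
    """Fraction of annotators whose own binarized rating matches the majority."""
    highs = sum(r > midpoint for r in ratings)
    return max(highs, len(ratings) - highs) / len(ratings)


# Toy ratings for one hypothetical ad (not data from the paper).
ad_val = [7, 8, 6, 7]
ad_asl = [3, 4, 2, 6]
print(circumplex_labels(ad_val, ad_asl))  # ('high', 'low')
print(majority_agreement(ad_asl))         # 0.75
```

A low agreement score would flag an ad whose emotional impression is not shared across viewers, which is the property the curation step is meant to rule out.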

Content-Centric AR Approaches

The study uses CNNs to extract audio and visual features for decoding the emotions conveyed by ads. As a domain-adaptation strategy, pre-trained models are fine-tuned on a large-scale movie dataset, yielding content-centric emotion predictors. CNN features outperform handcrafted audio-visual features for predicting both asl and val, with the largest improvement for valence.
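Fine-tuning in the paper adapts pre-trained CNNs to a movie dataset; the Python sketch below shows only the simplest transfer-learning variant, in which the pre-trained feature extractor stays frozen and a new logistic-regression head is trained on its outputs to predict high/low valence. It is a stand-in under stated assumptions, not the authors' pipeline: the toy 2-D feature vectors, the learning rate, and the names `train_linear_head` and `predict` are hypothetical, and a realistic setup would use a deep-learning framework and high-dimensional CNN activations.

```python
import math
import random


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_linear_head(features, labels, lr=0.5, epochs=200, seed=0):
    """Fit a logistic-regression head on frozen (pre-extracted) CNN features.

    Only the head's weights `w` and bias `b` are learned; the feature
    extractor that produced `features` is left untouched.
    """
    rng = random.Random(seed)
    dim = len(features[0])
    w = [rng.uniform(-0.01, 0.01) for _ in range(dim)]
    b = 0.0
    n = len(features)
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in zip(features, labels):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            grad_w = [g + err * xi for g, xi in zip(grad_w, x)]
            grad_b += err
        # Full-batch gradient step on the mean log-loss.
        w = [wi - lr * g / n for wi, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(w, b, x):
    return int(sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) >= 0.5)


# Toy 2-D "features": label 1 = high valence, 0 = low valence (illustrative only).
X = [[1.0, 0.2], [0.9, 0.1], [0.1, 0.9], [0.2, 1.0]]
y = [1, 1, 0, 0]
w, b = train_linear_head(X, y)
print([predict(w, b, x) for x in X])  # [1, 1, 0, 0]
```

Full fine-tuning would also update the backbone's weights; freezing them is the cheaper option when the target dataset is small.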

User-Centric AR via EEG

In contrast, user-centric AR is based on EEG responses recorded while viewers watch the ads. A three-layer CNN is trained to encode emotional attributes from the EEG data. EEG-based features outperform the content-based predictors, capturing the emotions that ads evoke more effectively. Multi-task learning (MTL) further improves AR performance by exploiting feature similarities among ads with similar emotional attributes.
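The exact MTL formulation is not spelled out here, so the following Python sketch swaps in one standard technique, regularized multi-task learning in the style of Evgeniou and Pontil, where each task's weights are a shared vector plus a penalized task-specific offset. Treating groups of ads with similar emotional attributes as tasks, and using toy vectors in place of features from the EEG CNN, are assumptions; `train_mtl` and `predict_task` are hypothetical names.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def train_mtl(tasks, dim, lam=0.1, lr=0.5, epochs=300):
    """Regularized MTL: task t predicts with weights w0 + v[t].

    tasks maps a task id to a list of (feature_vector, label) pairs with
    labels in {0, 1}. The shared vector w0 captures structure common to all
    tasks; lam * ||v[t]||^2 keeps each task's deviation v[t] small, so
    related tasks borrow strength from one another.
    """
    w0 = [0.0] * dim
    v = {t: [0.0] * dim for t in tasks}
    for _ in range(epochs):
        g0 = [0.0] * dim
        for t, samples in tasks.items():
            wt = [a + b for a, b in zip(w0, v[t])]
            gt = [0.0] * dim
            for x, y in samples:
                err = sigmoid(dot(wt, x)) - y
                gt = [g + err * xj / len(samples) for g, xj in zip(gt, x)]
            g0 = [a + b for a, b in zip(g0, gt)]
            # Task-specific step: data gradient plus the L2 penalty's gradient.
            v[t] = [vj - lr * (gj + 2 * lam * vj) for vj, gj in zip(v[t], gt)]
        # Shared step: average of the task gradients (w0 itself is unpenalized).
        w0 = [wj - lr * gj / len(tasks) for wj, gj in zip(w0, g0)]
    return w0, v


def predict_task(w0, v, t, x):
    return int(sigmoid(dot([a + b for a, b in zip(w0, v[t])], x)) >= 0.5)


# Two toy tasks; each feature vector is [bias, f1, f2] (illustrative values).
tasks = {
    "group_a": [([1.0, 0.9, 0.2], 1), ([1.0, 0.1, 0.8], 0)],
    "group_b": [([1.0, 0.8, 0.3], 1), ([1.0, 0.2, 0.7], 0)],
}
w0, v = train_mtl(tasks, dim=3)
print({t: [predict_task(w0, v, t, x) for x, _ in s] for t, s in tasks.items()})
# {'group_a': [1, 0], 'group_b': [1, 0]}
```

Raising `lam` ties the tasks more tightly to the shared weights; setting it to zero lets each task drift independently.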

Integration and Comparative Analysis

The findings show that content-centric and user-centric AR are complementary. Probabilistically fusing the outputs of classifiers trained on each modality yields better AR performance than either modality alone, indicating that combining content and user data improves AR outcomes.
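One common form of probabilistic late fusion is a weighted sum of the two classifiers' posteriors, p = w * p_content + (1 - w) * p_eeg, with w tuned on validation data. The Python sketch below assumes this rule and a simple grid search over w; the paper's exact fusion scheme may differ, the names `fuse` and `pick_fusion_weight` are hypothetical, and the posteriors are toy values.

```python
def fuse(p_content, p_eeg, weight):
    """Weighted late fusion of two posteriors P(high | content), P(high | EEG)."""
    return weight * p_content + (1.0 - weight) * p_eeg


def pick_fusion_weight(p_content, p_eeg, labels, grid_steps=10):
    """Grid-search the weight in [0, 1] that maximizes accuracy on held-out data.

    Ties keep the first (smallest) weight found.
    """
    best_w, best_acc = 0.0, -1.0
    for k in range(grid_steps + 1):
        w = k / grid_steps
        preds = [int(fuse(pc, pe, w) >= 0.5) for pc, pe in zip(p_content, p_eeg)]
        acc = sum(p == y for p, y in zip(preds, labels)) / len(labels)
        if acc > best_acc:
            best_w, best_acc = w, acc
    return best_w, best_acc


# Toy validation posteriors for four ads (illustrative, not from the paper).
p_content = [0.6, 0.4, 0.7, 0.45]
p_eeg = [0.8, 0.3, 0.4, 0.2]
labels = [1, 0, 1, 0]
print(pick_fusion_weight(p_content, p_eeg, labels))  # (0.4, 1.0)
```

In this toy case neither modality alone is perfect at w = 0, but an intermediate weight lets the content classifier correct the EEG classifier's miss on the third ad.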

Application to Computational Advertising

The research then shows how improved AR benefits computational advertising. Inserting ads into video streams according to their affective relevance to the surrounding content improves ad memorability and the viewing experience. A user study confirms that insertion strategies driven by EEG-based AR achieve the most favorable results, underscoring the value of user data for personalized advertising.
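The paper optimizes ad insertion using affective relevance; the Python sketch below substitutes a much simpler greedy heuristic to illustrate the idea of ranking (scene break, ad) pairs by an emotion-based score. The scoring rule in `relevance`, the [-1, 1] emotion scale, and the `plan_insertions` helper are all hypothetical; in practice the scene and ad estimates would come from the content-based or EEG-based AR models.

```python
def relevance(scene, ad):
    """Hypothetical affective-relevance score for inserting `ad` after `scene`.

    `scene` carries 'val' and 'asl' estimates in [-1, 1]; `ad` carries 'val'.
    The score favors high-arousal scene breaks and ads whose valence matches
    the scene.
    """
    arousal_weight = (scene["asl"] + 1.0) / 2.0                # maps to [0, 1]
    valence_match = 1.0 - abs(scene["val"] - ad["val"]) / 2.0  # 1 = identical
    return arousal_weight * valence_match


def plan_insertions(scenes, ads, slots):
    """Greedily choose up to `slots` (scene_index, ad_name) pairs.

    Each scene break and each ad is used at most once; the highest-scoring
    remaining pair is taken first.
    """
    candidates = sorted(
        ((relevance(s, a), i, a["name"]) for i, s in enumerate(scenes) for a in ads),
        reverse=True,
    )
    used_scenes, used_ads, plan = set(), set(), []
    for _, i, name in candidates:
        if len(plan) == slots:
            break
        if i in used_scenes or name in used_ads:
            continue
        plan.append((i, name))
        used_scenes.add(i)
        used_ads.add(name)
    return sorted(plan)


# Toy per-scene and per-ad emotion estimates (illustrative only).
scenes = [{"val": 0.8, "asl": 0.9}, {"val": -0.5, "asl": -0.6}, {"val": -0.7, "asl": 0.7}]
ads = [{"name": "A", "val": 0.7}, {"name": "B", "val": -0.6}]
print(plan_insertions(scenes, ads, slots=2))  # [(0, 'A'), (2, 'B')]
```

A greedy pass is not guaranteed to find the best overall assignment; an exact method such as an assignment solver would be needed for that.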

Conclusion

The paper evaluates content-centric and user-centric approaches to AR for advertisements and shows that better AR translates into better computational advertising. By showing that multi-modal AR outperforms unimodal systems, it lays groundwork for future research on dynamic emotion recognition and intelligent ad placement, and points toward AI-driven personalization of digital advertising in multimedia environments.