- The paper introduces the CrowdTruth methodology that leverages inter-annotator disagreement to generate high-quality semantic annotations.

- The methodology improves recall and F1 scores in closed tasks such as Medical Relation Extraction and yields richer ground truth in open-ended tasks such as Sound Interpretation.

- The research underscores the value of diverse annotations and scalable, ambiguity-aware metrics, paving the way for future crowdsourcing innovations.

Empirical Methodology for Crowdsourcing Ground Truth

Introduction

Gathering ground truth data through human annotation is a critical bottleneck in developing information extraction methods for populating the Semantic Web. Traditional approaches rely heavily on expert annotators, which is costly, time-consuming, and hard to scale across domains and tasks. This paper presents an empirically derived methodology that addresses these limitations with CrowdTruth metrics, which capture inter-annotator disagreement to improve the quality of ground truth data across domains and annotation tasks.

Methodology

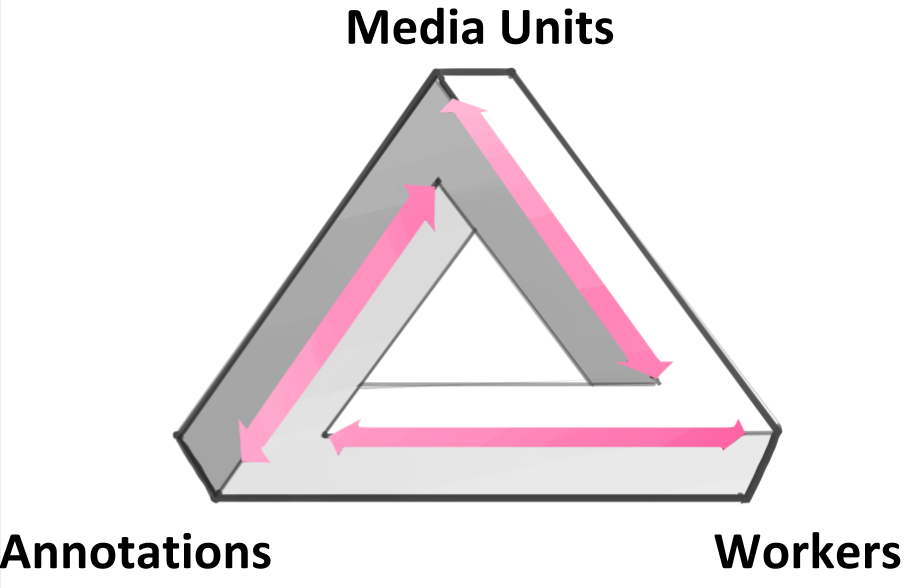

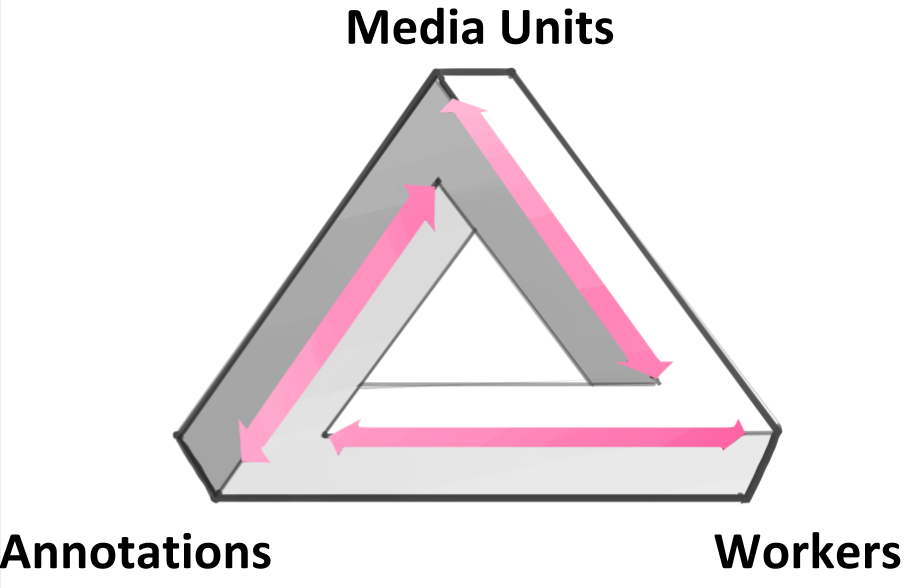

Central to the proposed methodology is the CrowdTruth model, which emphasizes measuring disagreement among annotators as a critical component for acquiring high-quality semantic annotations. Unlike conventional approaches that strive for consensus, this methodology acknowledges the inherent ambiguity present in many annotation tasks. It proposes that disagreement in annotations often reflects the complexity and diversity of interpretations possible in the data. The CrowdTruth approach is applied across a range of tasks, including Medical Relation Extraction, Twitter Event Identification, News Event Extraction, and Sound Interpretation, demonstrating its adaptability.
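To make the idea of measuring disagreement concrete, the following is a minimal sketch rather than the paper's implementation. It assumes that each worker's judgment on a media unit is a set of chosen labels, that judgments are summed into a count vector, and that each label is scored by the cosine similarity between that vector and the label's one-hot vector. The function names, the label set, and the five judgments are all hypothetical.

```python
import math
from collections import Counter

def unit_vector(worker_annotations, labels):
    """Sum per-worker label choices into one count vector for a media unit."""
    counts = Counter()
    for chosen in worker_annotations:
        counts.update(set(chosen))  # each worker counts at most once per label
    return [counts[label] for label in labels]

def unit_annotation_scores(vector, labels):
    """Cosine similarity between the unit vector and each label's one-hot vector."""
    norm = math.sqrt(sum(c * c for c in vector))
    if norm == 0:
        return {label: 0.0 for label in labels}
    return {label: c / norm for label, c in zip(labels, vector)}

# Hypothetical labels and judgments: five workers annotate one sentence,
# and each worker may select more than one relation.
LABELS = ["treats", "causes", "none"]
judgments = [{"treats"}, {"treats"}, {"treats", "causes"}, {"causes"}, {"none"}]

vec = unit_vector(judgments, LABELS)
scores = unit_annotation_scores(vec, LABELS)
print(vec)                                           # [3, 2, 1]
print({k: round(v, 3) for k, v in scores.items()})   # {'treats': 0.802, 'causes': 0.535, 'none': 0.267}
```

Under these assumptions the output is a graded score per label instead of a single winning label, so a sentence on which workers split between two relations keeps both signals.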

Results

The empirical evaluation shows that the CrowdTruth methodology outperforms traditional consensus-based methods such as majority vote across all tested annotation tasks. For closed tasks such as Medical Relation Extraction, CrowdTruth achieves notable improvements in recall and F1 scores, indicating that it captures more correct annotations without sacrificing precision. In open-ended tasks such as Sound Interpretation, the methodology's ability to accept a wider range of annotations is particularly advantageous, yielding higher-quality ground truth that reflects the underlying ambiguity of the task.
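The recall difference between majority vote and a disagreement-aware score can be illustrated with a toy comparison. This sketch assumes a multi-label task, a cosine-style score as a stand-in for the CrowdTruth metrics, and an arbitrary threshold of 0.5; the vote counts are invented for illustration and are not results from the paper.

```python
import math

def majority_vote(vector, n_workers):
    """Accept a label only if more than half of the workers chose it."""
    return [count > n_workers / 2 for count in vector]

def threshold_scores(vector, threshold):
    """Accept a label if its cosine-style score reaches the threshold."""
    norm = math.sqrt(sum(c * c for c in vector))
    if norm == 0:
        return [False] * len(vector)
    return [c / norm >= threshold for c in vector]

# Hypothetical counts from 15 workers for the labels ["treats", "causes", "none"].
counts = [7, 6, 2]

by_majority = majority_vote(counts, n_workers=15)
by_score = threshold_scores(counts, threshold=0.5)  # 0.5 is an assumed cutoff
print(by_majority)  # [False, False, False]
print(by_score)     # [True, True, False]
```

Here no label clears the majority bar, so majority vote discards the unit entirely, while the thresholded score keeps the two relations that a substantial share of workers selected. In practice the threshold would need to be tuned per task.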

Figure 1: Triangle of Disagreement

The evaluation with disagreement-aware metrics also shows that increasing the number of annotators can significantly improve annotation quality. This finding contrasts with the prevailing practice of employing a minimal number of annotators and highlights that a diverse range of perspectives is needed before annotation quality stabilizes.
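One way to build intuition for why more annotators stabilize quality is a small simulation. The sketch below is an illustration under invented assumptions, not a reproduction of the paper's experiment: each simulated worker independently selects "treats" with probability 0.6 and "causes" with probability 0.3, and the spread of the resulting cosine-style score is measured over repeated draws.

```python
import math
import random
import statistics

def treats_score(n_workers, rng):
    """Score for 'treats' from one simulated crowd of n_workers (assumed probabilities)."""
    treats = sum(rng.random() < 0.6 for _ in range(n_workers))
    causes = sum(rng.random() < 0.3 for _ in range(n_workers))
    norm = math.sqrt(treats ** 2 + causes ** 2)
    return treats / norm if norm else 0.0

def score_spread(n_workers, trials=2000, seed=0):
    """Standard deviation of the score across many simulated crowds of the same size."""
    rng = random.Random(seed)
    samples = [treats_score(n_workers, rng) for _ in range(trials)]
    return statistics.pstdev(samples)

spreads = {n: score_spread(n) for n in (3, 5, 10, 15)}
for n, spread in spreads.items():
    print(f"{n:>2} workers: score spread {spread:.3f}")
```

With only a few workers the score swings widely from one crowd to the next, and the spread narrows as the crowd grows, which matches the qualitative claim that small annotator pools give unstable ground truth.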

Implications and Future Directions

The implications of this research are substantial for both practical applications and theoretical advancements in crowdsourcing methodologies. By effectively modeling and leveraging disagreement among annotators, the CrowdTruth methodology provides a robust framework for generating high-quality semantic annotations. This approach not only reduces reliance on expert annotators but also offers a cost-effective, scalable solution that can be extended to various content modalities, including text, images, and sounds.

Future research directions include extending the methodology to more complex annotation tasks, such as those that combine multiple input types. Integrating state-of-the-art models for crowd worker and data feature analysis could further improve the effectiveness of ambiguity-aware metrics. Ultimately, continued work on models that express ground truth on a non-binary, continuous scale should enable more nuanced and precise semantic annotation frameworks.

Conclusion

The proposed methodology is a significant advance in crowdsourcing for Semantic Web applications. By recognizing and harnessing inter-annotator disagreement, it delivers high-quality ground truth annotations and underscores the value of diverse perspectives in semantic interpretation tasks. This research lays a foundation for future work on narrowing the gap between human annotations and automated information extraction systems.