- The paper presents Surface Edge Explorer (SEE), a density-based Next Best View (NBV) planning approach that uses frontier detection and local surface geometry estimation.

- It employs eigendecomposition to estimate planar surfaces and guides view generation for optimal sensor coverage.

- SEE demonstrates improved surface coverage and reduced computational time compared to traditional volumetric methods in large-scale scenes.

Surface Edge Explorer (SEE): Planning Next Best Views Directly from 3D Observations

Introduction

The paper introduces Surface Edge Explorer (SEE), a scene-model-free approach to Next Best View (NBV) planning. SEE plans views directly from a density representation of the sensor measurements in order to observe 3D scenes autonomously, and it achieves better surface coverage in less computation time than the evaluated volumetric approaches. It addresses limitations of both volumetric and surface-based NBV planning methods and scales to large scenes.

Methodology

Frontier Detection

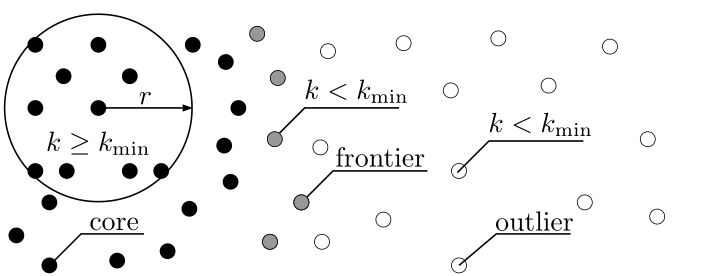

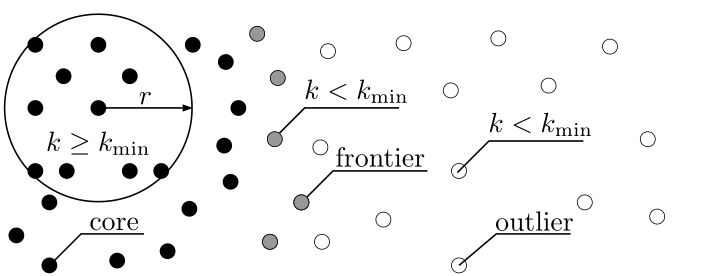

SEE classifies points as core, frontier, or outlier based on local measurement density, using a method inspired by DBSCAN. This classification isolates the frontier points, which mark the boundaries between fully and partially observed surfaces. Because it works on measurement density and resolution rather than on a voxel grid, SEE avoids the computational cost of volumetric methods.

Figure 1: An illustration of SEE's density-based classification. Points with a sufficient number of neighbours are classified as core points (black) while those without are outlier points (white). Points with both core points and outlier points in their neighbourhood are frontier points (grey).
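
The classification in Figure 1 can be sketched in a few lines of Python. This is a minimal illustration and not the authors' implementation: the function name `classify_points`, the parameters `radius` and `min_neighbours`, and the brute-force neighbour search are assumptions made for the example, as is the choice to exclude a point from its own neighbourhood.

```python
from math import dist

def classify_points(points, radius, min_neighbours):
    """Label each point 'core', 'frontier' or 'outlier' by local density."""
    # Brute-force neighbourhoods: indices within `radius`, excluding the point itself.
    # (Assumption: a spatial index such as a k-d tree would replace this at scale.)
    neighbours = [
        [j for j, q in enumerate(points) if j != i and dist(p, q) <= radius]
        for i, p in enumerate(points)
    ]
    # First pass: enough neighbours -> core, otherwise outlier.
    dense = ["core" if len(n) >= min_neighbours else "outlier" for n in neighbours]
    # Second pass: a point that sees both core and outlier neighbours is a frontier.
    labels = []
    for i, n in enumerate(neighbours):
        seen = {dense[j] for j in n}
        labels.append("frontier" if seen == {"core", "outlier"} else dense[i])
    return labels

# A densely sampled line of points followed by one sparse measurement.
points = [(0, 0, 0), (1, 0, 0), (2, 0, 0), (3, 0, 0), (4, 0, 0), (6, 0, 0)]
labels = classify_points(points, radius=2.1, min_neighbours=2)
print(labels)
```

In this toy input the point at x = 4 is labelled a frontier because its neighbourhood contains both densely and sparsely observed points, while the isolated point at x = 6 stays an outlier.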

Surface Geometry Estimation

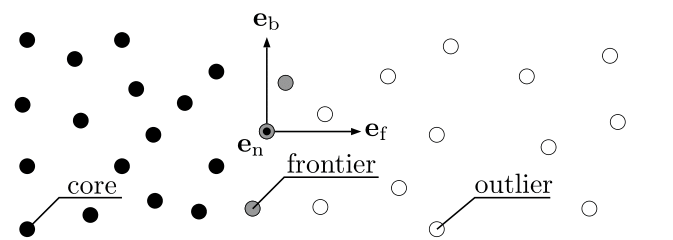

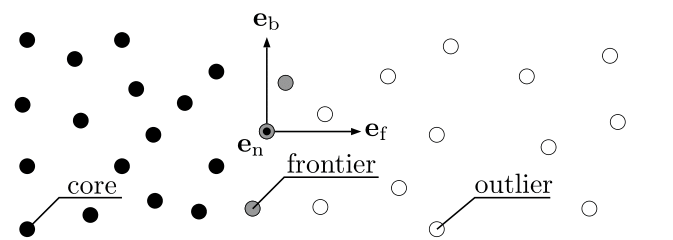

The local surface geometry around each frontier point is approximated as planar through eigendecomposition, allowing the estimation of the normal, boundary, and frontier vectors. These vectors guide view generation to ensure optimal sensor coverage.

Figure 2: An illustration of SEE's local surface geometry estimation. The geometry of the surface at the frontier points (grey) is estimated from nearby points with an orthogonal set of vectors. These vectors are orientated normal to the surface, $\mathbf{e}_n$ (out of the page), parallel to the boundary line, $\mathbf{e}_b$, and perpendicular to the boundary line (i.e., into the frontier), $\mathbf{e}_f$.
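
A planar fit by eigendecomposition can be sketched with NumPy as follows. The normal is taken as the eigenvector of the neighbourhood covariance with the smallest eigenvalue, which is the standard plane-fitting result. How the boundary and frontier directions are picked here is an assumption for illustration only, not necessarily the paper's construction: the frontier vector is the in-plane direction from the neighbourhood centroid towards the frontier point, and the boundary vector completes the orthogonal set. The names `estimate_local_frame`, `e_n`, `e_b` and `e_f` are hypothetical.

```python
import numpy as np

def estimate_local_frame(frontier_point, neighbours):
    """Return unit vectors (e_n, e_b, e_f) for a locally planar patch."""
    neighbours = np.asarray(neighbours, dtype=float)
    centroid = neighbours.mean(axis=0)
    # 3x3 covariance of the neighbourhood (np.cov treats rows as variables).
    covariance = np.cov((neighbours - centroid).T)
    # eigh returns eigenvalues in ascending order for symmetric matrices,
    # so column 0 is the direction of least variance: the surface normal.
    _, eigenvectors = np.linalg.eigh(covariance)
    e_n = eigenvectors[:, 0]
    # Assumption: neighbours lie on the observed side, so the direction from
    # their centroid to the frontier point heads into the unobserved region.
    offset = np.asarray(frontier_point, dtype=float) - centroid
    e_f = offset - np.dot(offset, e_n) * e_n  # project into the fitted plane
    e_f = e_f / np.linalg.norm(e_f)           # undefined if the offset is zero
    # The boundary direction completes the orthogonal set.
    e_b = np.cross(e_n, e_f)
    return e_n, e_b, e_f

# Observed patch on the z = 0 plane covering x <= 0; the frontier point is at the origin.
patch = [(x, y, 0.0) for x in (-2.0, -1.0, 0.0) for y in (-1.0, 0.0, 1.0)]
e_n, e_b, e_f = estimate_local_frame((0.0, 0.0, 0.0), patch)
print(e_n, e_b, e_f)
```

The sign of the normal returned by the eigendecomposition is arbitrary, so a real planner would need an extra rule (for example, pointing it towards the sensor that took the measurements) to orient it consistently.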

View Generation

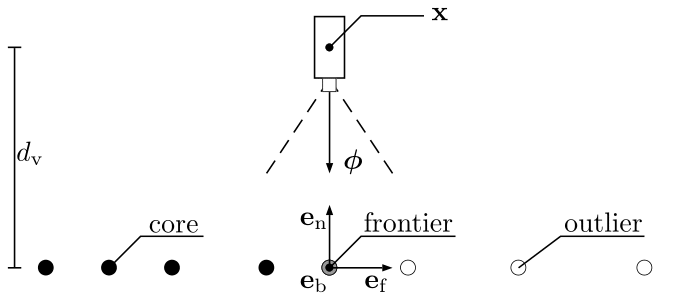

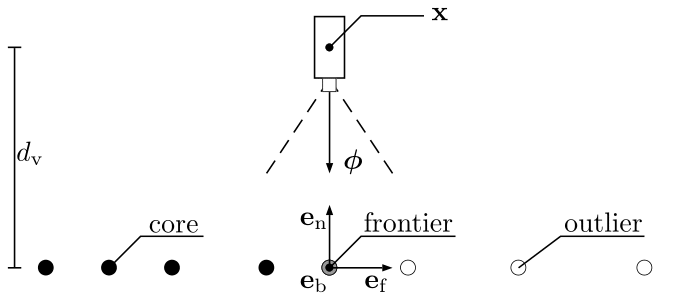

View positions are generated orthogonally to the estimated surface, aiming to maximize coverage of the boundary surfaces. The views are iteratively adjusted to accommodate surface discontinuities, optimizing observation fidelity.

Figure 3: An illustration of SEE's initial view proposal generation. Initial view proposals, $(\mathbf{x}, \boldsymbol{\phi})$, are generated around each frontier point (grey) from the estimated local surface geometry, $\mathbf{e}_n$, $\mathbf{e}_b$ and $\mathbf{e}_f$. The view orientation, $\boldsymbol{\phi}$, is given by the inverse sign of the normal vector, $\boldsymbol{\phi} = -\mathbf{e}_n$. The view position, $\mathbf{x}$, is set at a view distance, $d_v$.
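
The initial proposal described in Figure 3 can be sketched as below. The caption fixes the orientation as the negated normal vector and places the view at a given view distance. That the position is offset from the frontier point along the normal, so the sensor looks straight back at the point, is an assumption of this example. The function name `propose_view` and its parameters are hypothetical.

```python
import numpy as np

def propose_view(frontier_point, normal, view_distance):
    """Return (position, orientation) for an initial view of a frontier point."""
    normal = np.asarray(normal, dtype=float)
    normal = normal / np.linalg.norm(normal)  # tolerate non-unit input
    # Assumption: step back from the surface along the normal by the view distance.
    position = np.asarray(frontier_point, dtype=float) + view_distance * normal
    # The view looks back along the normal, towards the surface.
    orientation = -normal
    return position, orientation

position, orientation = propose_view((1.0, 2.0, 0.0), (0.0, 0.0, 2.0), view_distance=0.5)
print(position, orientation)
```

Travelling `view_distance` from the proposed position along the proposed orientation returns to the frontier point, which is a quick sanity check on any such proposal.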

Evaluation

Experimental Setup

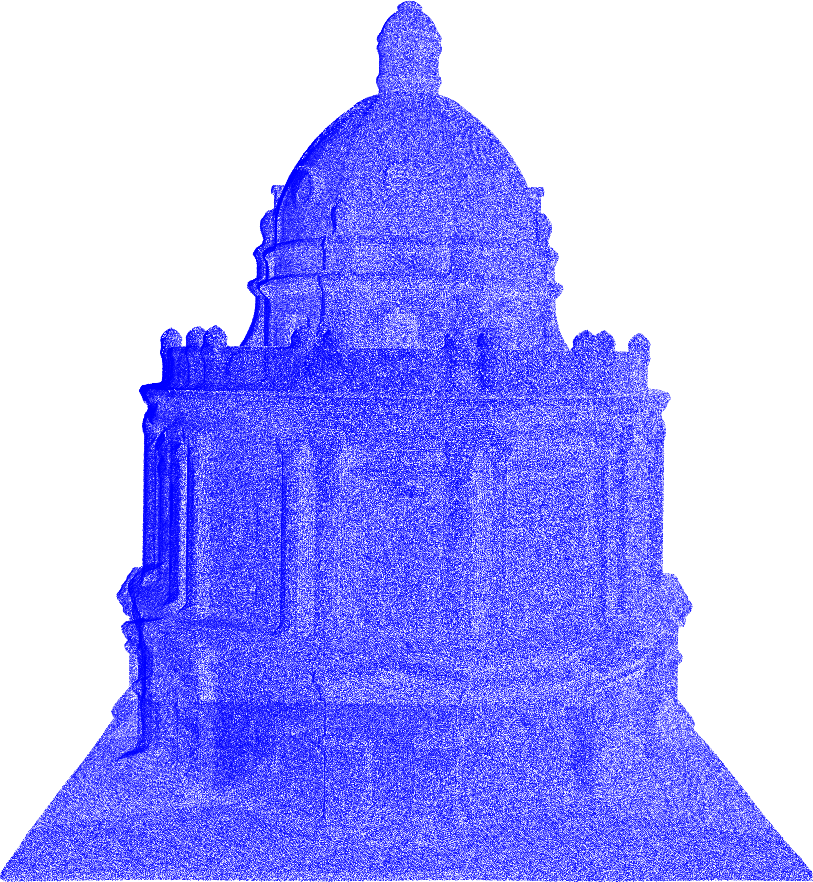

SEE was evaluated in simulation on standard 3D models, including the Stanford Bunny and the Radcliffe Camera. It was compared against volumetric approaches such as AF, AE, and OA in terms of surface coverage, computation time, and travel distance. Measurements were simulated with Gaussian noise to approximate real-world sensing.

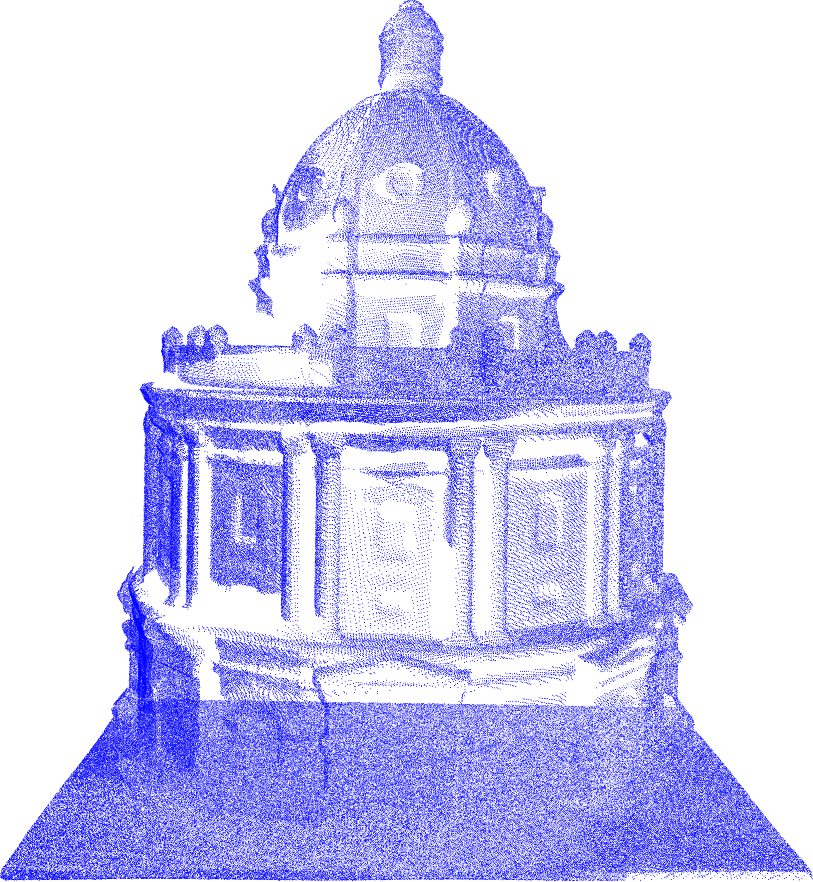

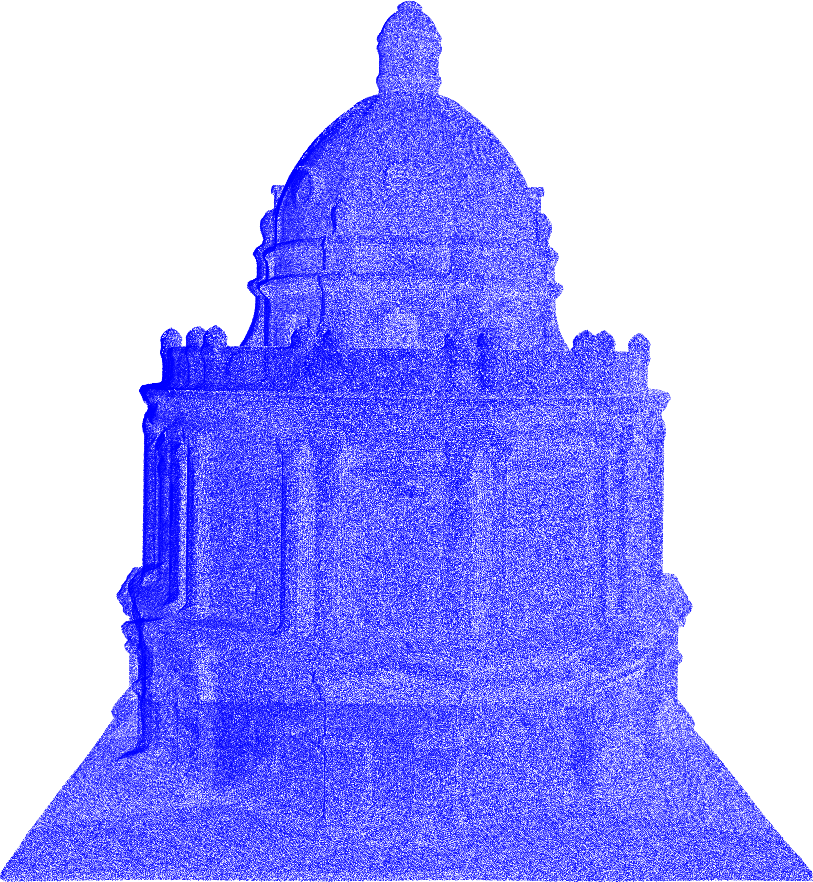

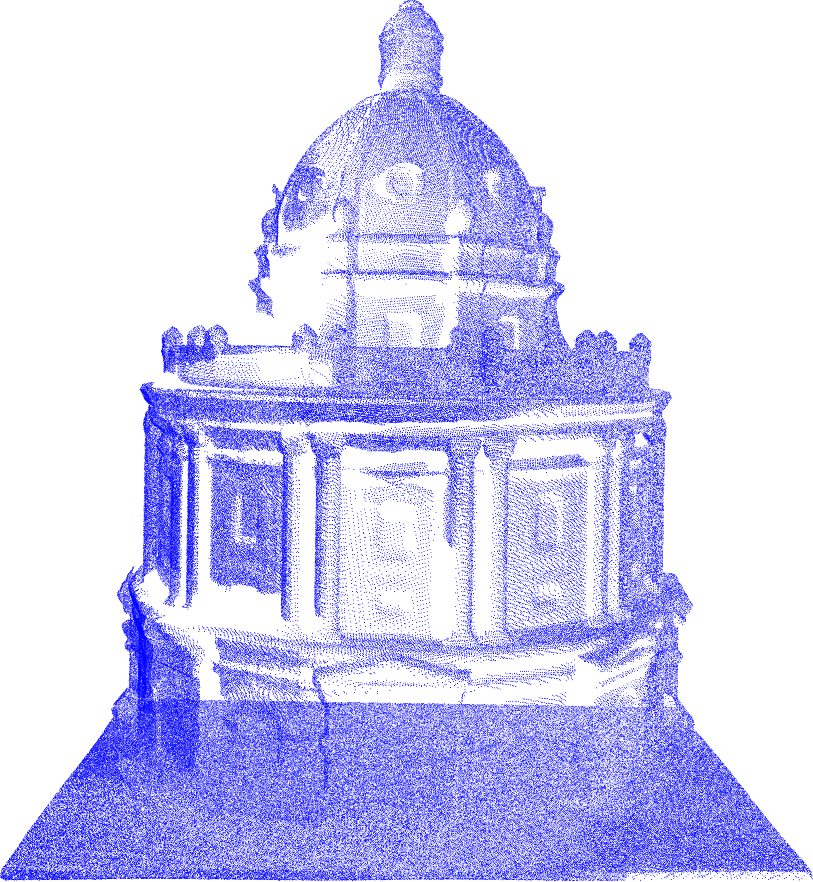

Figure 4: A comparison of the point clouds resulting from running SEE (a) and AE (Kriegel et al., 2015) (b) on a full-scale model of the Radcliffe Camera in Oxford.

Results

SEE achieved higher surface coverage in less computation time than the evaluated volumetric approaches, and it scaled to large, complex scenes. Its travel distances were equivalent to those of the volumetric approaches.

Discussion

SEE's density-based NBV planning significantly reduces computational complexity, especially for large-scale scenes like architectural models where volumetric approaches become inefficient. Its intuitive parameterization avoids the cumbersome tuning associated with traditional surface methods.

Conclusion

SEE is a robust and efficient scene-model-free NBV planning method capable of producing high-resolution 3D scene models. Its density representation offers practical advantages over existing volumetric and surface approaches. Ongoing work aims to extend the comparison to surface approaches and to deploy SEE in the real world on aerial platforms.

In summary, SEE provides a powerful tool for automated 3D scene observation, enhancing model resolution while minimizing computational burden, making it particularly beneficial for applications requiring detailed and large-scale 3D mapping.